Introduction

Modern AI initiatives promise high returns — but poor data quality can torpedo them faster than you think. Without robust controls, biases creep in, models degrade, and stakeholders lose trust.

Here’s the truth: a data quality framework for AI is not a luxury — it’s a foundational requirement. When done right, it becomes a force multiplier for every AI project.

Find how through our data engineering solutions, your data becomes actionable, reliable, and ready to fuel your next phase of growth.

This guide explains what a data quality framework for AI is, its benefits, and how to build one step by step.

What is a data quality framework for AI?

A data quality framework for AI is a structured approach to validate, monitor, and improve data across machine learning pipelines using governance, automated checks, and quality metrics. It ensures accurate, consistent, and reliable data for training and inference, reducing model errors and improving AI outcomes.

TL;DR — What You’ll Learn In Guide on Data Quality Framework for AI

- Why AI initiatives fail when data quality is neglected

- The top 7 benefits of instituting a data quality framework

- A hypothetical ML pipeline scenario with quality challenges and resolution

- A checklist and roadmap to start building your own

- How to evolve your framework over time

- Strategic recommendations for leaders

- FAQs to clear up common doubts

In the first 10% of this post, here’s the focus: data quality framework for AI sets the stage for reliable, scalable, and governable AI outcomes.

Learn more about building a Data Management Roadmap

Why AI Demands Strong Data Quality

Before diving into benefits, let’s ground the argument: why does AI need more rigorous data quality than traditional analytics?

The AI Sensitivity to Garbage-In, Garbage-Out

Machine learning models are data-hungry. Their efficacy depends directly on the input distribution. If your training set, validation set, or production data contains missing values, outliers, mislabeled classes, or drift, your model’s predictions degrade quickly.

In contrast, BI dashboards or descriptive analytics can sometimes absorb “dirty” data, albeit with caveats. AI models are far less forgiving.

High Stakes, Amplified Risks

In regulated industries (healthcare, finance, manufacturing), errors propagate faster. A mislabeled medical data point or misaligned feature in loan underwriting can cause serious harm — legally, financially, or reputationally.

Explainability, Auditability & Trust

Stakeholders increasingly demand that AI be explainable and auditable. Without a structured mechanism to validate and document data quality, you can’t trace back errors or enforce accountability.

Scale & Automation

As organizations scale their AI footprint — more models, more domains, more real-time pipelines — you can’t rely on ad hoc checks. You need an architectural framework around data quality.

Data Quality Issues vs AI Impact (Quick Overview)

| Data Quality Issue | Impact on AI Models | Business Risk |

|---|---|---|

| Missing values | Incomplete feature learning | Inaccurate predictions |

| Duplicate records | Skewed model training | Poor customer insights |

| Inconsistent formats | Feature engineering errors | Pipeline failures |

| Outliers / anomalies | Model instability | Incorrect decisions |

| Data drift | Reduced model accuracy over time | Performance degradation |

| Incorrect labels | Biased predictions | Compliance and trust issues |

In short: AI intensifies every weakness in your data. A data quality framework for AI becomes the scaffolding that keeps your models stable, trustworthy, and efficient.

Explore Techment’s insights on Microsoft Fabric Architecture: CTO’s Guide to Modern Analytics & AI.

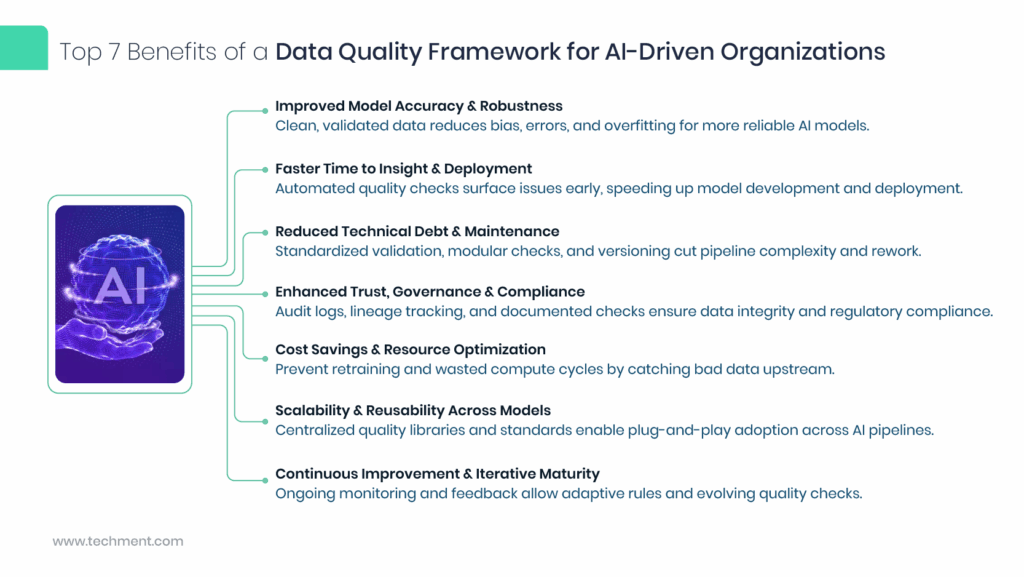

Top 7 Benefits of a Data Quality Framework for AI-Driven Organizations

Below, we explore seven key benefits — each directly tied to how AI projects behave in the real world. After each benefit, you’ll find strategic recommendations or illustrative pointers.

The top benefits of a data quality framework for AI include:

- Improved model accuracy and robustness

- Faster time to deployment and insights

- Reduced technical debt and maintenance

- Enhanced governance and compliance

- Cost savings and resource optimization

- Scalability across AI use cases

- Continuous improvement and monitoring

Learn how to build an AI-first data engineering blueprint and what leadership actions matter now in our latest blog.

Improved Model Accuracy & Robustness

Why it matters:

Cleaner training and inference data reduce noise and bias. The model sees fewer “spurious correlations” and overfits less often. When upstream data is validated, downstream performance improves.

How the framework helps:

- Enforce schema checks, data type validations, null thresholds

- Monitor feature drift, concept drift, distribution shifts

- Flag anomalies during ingestion and after transformations

Case in point:

In a customer-churn prediction pipeline, an outlier in billing data (e.g. a negative charge) might skew a numerical feature’s distribution. A proper data quality gate would detect and quarantine this. Over time, the model becomes more stable.

Strategic Tip: Integrate data-quality checks into your ML pipelines so that models are trained only on vetted data.

Faster Time to Insight & Deployment

Why it matters:

Data cleaning, debugging, and rework consume massive cycles in AI projects. Teams often spend 60–80% of their time wrangling data rather than modeling.

How the framework helps:

- Automate repetitive quality tasks

- Provide “clean baseline” data sets

- Surface errors earlier in the pipeline

By catching issues early (ingestion, transformations), remediation is easier. You don’t reach the model-build stage only to realize data leakage or missing features.

Strategic Tip: Enforce quality gates at each pipeline stage (raw ingestion, cleaning, features, output). Use a “fail-fast” philosophy.

Reduced Technical Debt & Maintenance Overheads

Why it matters:

Brittle pipelines, ad hoc fixes, and patchwork scripts are the hallmarks of accumulating tech debt. Over time, they break under pressure (new features, more data, scaling).

How the framework helps:

- Standardizes practices and templates

- Provides modular validation and monitoring libraries

- Enables versioning, lineage, rollback

When your framework is mature, adding a new model or domain becomes a configuration task, not a reinvention of data hygiene every time.

Strategic Tip: Begin small (one domain, one pipeline) and generalize your quality modules. Use orchestration tools (Airflow, Kubeflow, Apache Beam) and attach your quality validators as reusable tasks.

Enhanced Trust, Governance & Compliance

Why it matters:

In sectors with regulations (GDPR, HIPAA, banking standards), you need to show that your data inputs and outputs are auditable, controlled, and safe.

How the framework helps:

- Maintains historical logs of data checks, alerts, and fixes

- Enables lineage tracking (which data sources, transformations)

- Facilitates reporting to auditors or regulators

When stakeholders ask “how do you ensure the data was valid?”, you have documented evidence via the framework.

Strategic Tip: Use tools like Apache Atlas, OpenLineage, or proprietary governance systems to integrate your data quality framework with your enterprise data governance.

Cost Savings & Resource Optimization

Why it matters:

Bad data leads to rework, costly model retraining, wasted compute cycles, and poor ROI. For businesses paying for cloud storage and processing, these costs mount quickly.

How the framework helps:

- Prevents expensive retraining triggered by bad data

- Reduces false positives/negatives requiring human review

- Allows for prioritized remediation (i.e. focusing on critical data paths)

Over time, an upfront investment in quality reduces downstream waste.

Strategic Tip: Quantify the impact of data issues (e.g. false predictions, manual fixes) and use that to justify framework investment. Present cost-avoidance to leadership.

Scalability & Reusability Across Models

Why it matters:

Organizations rarely operate just one AI use case. You’ll have fraud detection, recommendation engines, churn modeling, NLP pipelines, etc. You want a “plug-and-play” quality infrastructure, not duplicative work.

How the framework helps:

- Provides a common quality library used by multiple teams

- Encourages consistency in standards, metrics, and alerts

- Enables central visibility across all AI pipelines

When each team doesn’t reinvent data hygiene, onboarding new models becomes faster, with fewer blind spots.

Strategic Tip: Establish a central data quality team or center of excellence. They evolve the core framework, while individual domains adopt and extend it.

Continuous Improvement & Iterative Maturity

Why it matters:

AI systems need to evolve over time. New data sources come in, expectations shift, business dynamics change. Without structured feedback, your framework degrades or becomes brittle.

How the framework helps:

- Monitors trends and flags declining data quality metrics

- Enables retrospective analysis: which data checks catch the most errors?

- Supports “guardrail expansion” gradually (e.g. expanding checks from schema to semantics)

In short: your framework becomes a learning engine that adapts.

Strategic Tip: Build dashboards on data quality metrics (e.g. failure rates, latency, drift), run quarterly reviews, and evolve thresholds. Use A/B tests to validate new checks.

ML Pipeline with Data Quality Challenges & Resolution

Let’s walk through an example pipeline — churn prediction for a subscription business — to illustrate how quality issues arise and how the framework deals with them.

Scenario Setup

Data Sources:

- Customer profile (demographics, signup date)

- Usage logs (events, timestamps)

- Billing history

- Support tickets

Pipeline Stages:

- Ingestion & staging

- Data cleaning & normalization

- Feature engineering

- Model training & validation

- Inference and monitoring

Data Quality Issues Encountered

- Ingestion: Nulls in usage logs (some events missing timestamps)

- Cleaning: Duplicate records, mismatched customer IDs

- Feature engineering: Outliers in billing (negative charges)

- Training: Class imbalance, mislabeled churn (some churned flagged as active)

- Inference: Data drift — new customers behave differently in usage patterns

Without a robust framework, the team spends weeks chasing errors — model accuracy is unstable, retraining happens often, and alerts come too late.

Framework-Based Resolution

1. Ingestion Stage Checks

- Schema enforcement: reject or quarantine records missing required fields

- Null thresholds: if > 5% missing in key fields, raise alert

2. Cleaning Stage Checks

- Duplicate detection (customer ID + timestamp)

- Referential checks (billing history references only valid customers)

3. Feature Engineering Checks

- Range constraints (e.g. invoice amounts must be ≥0)

- Statistical outlier detection (e.g. z-score > 3)

4. Training Stage Checks

- Class balance alerts

- Label consistency checks (cross-check churn labels vs. support tickets)

5. Inference / Monitoring Stage

- Drift detection (feature distribution divergence)

- Prediction consistency (e.g. extremely high probabilities flagged)

- Feedback loop: actual churn vs predicted churn errors

Over successive iterations, the framework helps the team refine thresholds, improve feature hygiene, and build more dependable models.

Lessons Learned

- The “fail-fast” approach saves time: detecting a data glitch early is far cheaper than after model training.

- Not all checks are equal: invest first where error risk is highest (billing, label consistency).

- Document everything — alerts, fixes, lineage. This becomes a knowledge base.

- Iteration is essential — thresholds, metrics, and rules will evolve.

See our blog on Enterprise AI Strategy in 2026.

Build Your Data Quality Framework With These Steps

To build a data quality framework for AI, follow these steps:

- Define governance, ownership, and data responsibilities

- Identify key data quality dimensions and metrics

- Audit and baseline current data quality issues

- Implement validation checks across pipelines

- Set up monitoring, alerting, and dashboards

- Create remediation workflows and feedback loops

- Continuously improve and scale the framework

Why AI Demands Strong Data Quality

AI requires strong data quality because machine learning models depend on accurate, consistent, and unbiased data for training and predictions. Poor data leads to model errors, bias, and unreliable outcomes, making data quality critical for scalable and trustworthy AI systems.

Data Quality Framework for AI Checklist

- Defined data ownership and governance roles

- Automated validation checks across pipelines

- Standardized data quality metrics (accuracy, completeness, consistency)

- Monitoring and alerting systems in place

- Data quality integrated into ML workflows

- Feedback loops for continuous improvement

Step 0: Preliminaries & Governance Setup

- Assign a data quality governance lead (or team).

- Define data ownership, stewardship, and responsibilities across domains.

- Align with your enterprise data governance framework and policies.

- Inventory all AI pipelines, data sources, transformations.

Step 1: Define Core Quality Dimensions & Metrics

Typical dimensions include:

- Completeness

- Accuracy

- Consistency

- Timeliness / latency

- Uniqueness / deduplication

- Validity / conformity

Map each dimension to your AI pipelines. Define key quality metrics (e.g. missing rate, outlier rate, drift score).

Step 2: Baseline & Audit

- Run an initial audit of your datasets to understand existing issues.

- Visualize distributions, nulls, duplicates, outliers.

- Prioritize the “hot spots” (data sources or transformations with highest defect rates).

Step 3: Build Validation Modules

- Create modular validators (schema, thresholds, range checks, drift)

- Integrate them at ingestion, transformation, feature stages.

- Use open-source or platform tools

Step 4: Orchestration & Alerting

- Use pipeline orchestration tools (Airflow, Kubeflow, Prefect).

- Attach quality checks as tasks before key pipeline stages.

- Configure alerting (Slack, email, dashboards) when a check fails.

- Decide “quarantine vs fail” policies (automated vs human review).

Step 5: Monitoring & Dashboards

- Build centralized dashboards tracking quality metrics across pipelines.

- Monitor trends (daily, weekly, monthly).

- Flag pipelines whose quality is deteriorating.

Step 6: Feedback Loop & Remediation

- Assign owners to investigate quality alerts.

- Classify common error types (missing data, schema change, drift).

- Build remediation playbooks (auto-fix, drop record, human review).

Step 7: Iterate, Extend & Mature

- Add semantic checks (consistency across datasets, cross-field logic).

- Expand to new pipelines/domains.

- Introduce machine learning–based anomaly detection.

- Conduct retrospective reviews and update thresholds.

- Align with evolving governance, auditing, compliance needs.

How to Iterate & Mature Your Framework

Once your baseline is in place, maturity comes from iteration:

1. Quarterly Quality Reviews

- Evaluate which checks catch most errors

- Retire ineffective rules

- Introduce new ones

2. Adaptive Thresholds / Dynamic Checks

- Instead of static bounds, use rolling windows or statistical alerting

- Use traffic-based benchmarks

3. Domain-Specific Checks and Metadata Awareness

- Understand semantics: e.g. “billing_date ≤ invoice_date”

- Cross-dataset reconciliation

4. Automated Repair Suggestions

- Auto-imputation, duplicate merge, or fallback values

- Human-in-the-loop for critical datasets

5. Quality Scorecards & SLAs

- Assign quality SLAs to data pipelines

- Include metrics in business reviews

6. Governance Integration & Audit Trails

- Version all data schemas, transformations, checks

- Link to lineage and metadata systems

- Compliance reporting snapshots

7. Fast Onboarding for New Pipelines

- Provide templates and boilerplate checks

- Make adoption low-friction

Over time, the framework evolves from “checklist” to “living engine” that proactively adapts to new data challenges.

For related context on managing AI and analytics.

Strategic Recommendations for Leaders

- Start small but think big: pilot with one domain, then scale.

- Secure executive buy-in by quantifying cost of bad data.

- Place the framework inside your AI center of excellence or centralized data quality team.

- Allocate resources not just for tooling but maintenance, analytics, and evolution.

- Foster a data quality culture: mandate reviews, reward good practices.

- Ensure integration with your broader data governance framework (policies, lineage, compliance).

- Monitor and iterate — a static framework dies rapidly.

Data & Stats Snapshot

- According to Gartner, poor data quality costs organizations an average of $12.9 million per year.

- One study found that 70–80% of analytics and AI project time is spent on data preparation and cleaning.

- In mature organizations, automated data validation reduces model retraining cycles by 30–50%.

- A survey by IDC showed that companies with strong data governance (of which data quality is a pillar) report 2X more revenue growth from AI investments.

These statistics highlight the business imperative for investing in a data quality framework as central to AI success.

Conclusion & Next Steps

The difference between AI projects that succeed and those that stumble often lies not in the model architecture, but in the data. For AI initiatives to deliver reliable, scalable, and trustworthy results, you need a data quality framework for AI at their core.

By establishing structured quality checks, governance, monitoring, and iterative feedback, your organization can:

- Boost model accuracy and robustness

- Speed up deployment cycles

- Reduce technical debt and operational costs

- Strengthen trust, compliance, and auditability

- Scale your AI footprint with confidence

- Continuously mature and adapt

Next steps:

- Use the checklist above to scope your pilot domain.

- Build minimal validation modules and integrate into one pipeline.

- Monitor quality metrics, refine rules, and prepare to scale.

- Share early wins with leadership and expand further.

If you’d like a data quality assessment, or want to explore a tailored framework implementation for your AI roadmap, drop me a line or explore our case studies and whitepapers below.

Q1: How is a data quality framework different from data governance?

A data quality framework focuses on validating, monitoring, and improving data accuracy, while data governance defines policies, ownership, and compliance. Both work together to ensure reliable data for AI systems.

Q2. What is the first step in building a data quality framework?

The first step is defining data ownership, governance policies, and key quality metrics aligned with business and AI objectives.

Q3. How many checks is too many?

It depends, but avoid rule bloat. Start with schema, nulls, range, duplicate, drift checks. Track which rules are effective; retire or refine underutilized ones.

Q4: Which tools or open-source libraries are recommended?

Great Expectations, Deequ (Amazon), TFX Data Validation, Apache Griffin, and built-in native cloud services (e.g., AWS Glue Data Quality). Use one that fits your stack and integrate it into your pipelines.

Q5: How do I handle false positives or alerts fatigue?

Use threshold calibration (tolerance levels)

Add “alert suppression window” or “grace periods”

Rank alerts by impact

Provide human review workflows

Continuously refine the rules

Related Reads

- Ultimate Guide to Optimizing Spark Workloads in Microsoft Fabric for Data Engineers

- Microsoft Fabric Architecture: CTO’s Guide to Modern Analytics & AI

- Data Governance for Data Quality: Future-Proofing Enterprise Data

- Data Quality for AI in 2026: Enterprise Guide

- Microsoft Fabric vs Power BI: Understanding the Difference

- Microsoft Fabric vs Snowflake: Data Management Showdown

- AI-Ready Enterprise Checklist for Microsoft Fabric Microsoft Fabric vs Power BI: Understanding the Difference

- Microsoft Fabric vs Snowflake: Data Management Showdown

- AI-Ready Enterprise Checklist for Microsoft Fabric