Every modern QA organization eventually hits the same invisible wall. Test automation scales. Release cycles shrink. CI pipelines grow longer and more expensive. What once felt like progress slowly turns into friction. Using AI to prioritize high-impact test cases helps QA teams focus on real delivery risk rather than on execution patterns that no longer reflect it.

The issue is not coverage. Most enterprise teams already have thousands of automated tests. The real challenge is deciding which tests matter most right now. In fast-moving CI/CD environments, static prioritization models collapse under the weight of constant change.

Using AI to prioritize high-impact test cases addresses this exact bottleneck. Instead of executing tests based on outdated labels or human intuition, AI evaluates real engineering signals—code changes, failure history, business criticality, and execution cost—to determine which tests deliver the highest risk reduction at any given moment.

This shift is not about replacing testers or running fewer tests. It is about making smarter quality decisions earlier in the delivery lifecycle. This article explores why traditional approaches fail, how AI-based prioritization works in practice, and what enterprise teams must consider to adopt it successfully.

TL;DR — Executive Summary

- Modern CI/CD pipelines slow down not because of too few tests, but because teams run too many low-impact tests too early.

- Traditional test prioritization (smoke, regression, P0/P1) fails in fast-changing, microservices-based systems due to static rules and human bias.

- Using AI to prioritize high-impact test cases enables data-driven decisions based on code changes, failure history, business criticality, and execution cost.

- AI-driven prioritization delivers faster feedback, earlier defect detection, lower infrastructure costs, and higher release confidence—without reducing test coverage.

- This shift represents a move from basic test automation to Test Intelligence, where quality decisions are adaptive, risk-based, and aligned with business outcomes.

The Testing Bottleneck Nobody Talks About

Test automation was supposed to eliminate bottlenecks. Instead, for many organizations, it quietly created a new one. The growing CI slowdown highlights why using AI to prioritize high-impact test cases is essential for sustaining fast, reliable enterprise delivery.

Automation suites grow sprint after sprint. Microservices multiply. Dependencies expand. Meanwhile, release expectations move from weekly to daily—or even continuous.

The result is predictable. CI pipelines slow down. Feedback arrives too late. Developers wait hours to learn whether a critical flow is broken. Teams either run everything and accept delays or run a subset based on gut feel and accept risk.

Neither approach works at scale. Running everything wastes time and infrastructure. Running selectively without intelligence introduces blind spots. The bottleneck is no longer execution capability—it is prioritization.

In modern delivery models, quality is no longer about whether defects are caught. It is about when they are caught. The earlier critical issues surface, the cheaper and safer they are to fix. That timing depends largely on which tests run first.

The Traditional Way of Prioritizing Tests (And Why It Fails)

Most enterprises rely on familiar prioritization mechanisms: smoke tests, regression suites, P0/P1 labels, or environment-based execution rules. These systems appear structured, but they degrade quickly.

The fundamental problem is that traditional prioritization assumes stability. Labels are assigned once and rarely revisited. As code evolves, priorities do not. Static labels and manual tagging break down at scale, which makes AI-based prioritization of high-impact test cases a necessity rather than an optimization.

Manual tagging does not scale beyond a few hundred tests. Once suites grow into the thousands, maintaining accurate priorities becomes a full-time task—one that competes directly with value-added QA work.

Human bias compounds the issue. Tests remain “critical” because they always have been, not because they reflect current risk. Microservices architectures make this even worse. A small backend change can silently impact multiple user journeys, yet static labels fail to capture those ripple effects.

Traditional prioritization answers the wrong question. It asks which tests should be important, not which tests are most likely to catch a meaningful defect today.

What High-Impact Really Means in Modern Testing

Before introducing AI, teams must redefine impact. High-impact test cases are not defined by length, complexity, or execution time. They are defined by risk reduction. Defining impact in terms of risk and probability is what allows AI-based prioritization to deliver better quality outcomes than coverage-based approaches.

A high-impact test case typically exercises frequently changing code paths, validates business-critical flows such as authentication or payments, and has a history of catching severe defects. These tests often fail shortly after related changes, making them powerful early-warning signals.

Impact is a function of relevance, probability, and consequence. A short API test validating a billing endpoint may be far more impactful than an end-to-end UI flow that rarely breaks. Without data-driven insight, these distinctions are easy to miss.
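One way to make this definition concrete is a simple multiplicative score. The sketch below is illustrative only: the `Signal` class, its field names, and the sample values are assumptions introduced here, and a real system would learn or calibrate these inputs rather than hard-code them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Signal:
    """Hypothetical per-test inputs, each normalized to the range 0..1."""
    name: str
    relevance: float    # how closely the test covers the code that changed
    probability: float  # estimated chance the test fails on this change
    consequence: float  # business cost if the covered flow breaks


def impact_score(signal: Signal) -> float:
    # Multiplying means a test scores high only when all three factors are high.
    return signal.relevance * signal.probability * signal.consequence


# Made-up values: a short billing API test versus a rarely failing UI flow.
billing_api = Signal("billing_api_test", relevance=0.9, probability=0.4, consequence=1.0)
ui_flow = Signal("profile_ui_e2e", relevance=0.3, probability=0.1, consequence=0.5)

ranked = sorted([ui_flow, billing_api], key=impact_score, reverse=True)
print([s.name for s in ranked])  # ['billing_api_test', 'profile_ui_e2e']
```

A product is only one possible combination. A weighted sum would let a single strong factor carry a test on its own, which may or may not be desirable for a given team.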

Using AI to prioritize high-impact test cases allows teams to operationalize this definition instead of relying on assumptions. Published studies of AI-driven test prioritization report improved regression testing effectiveness, reduced execution time, and better resource allocation compared to traditional approaches.

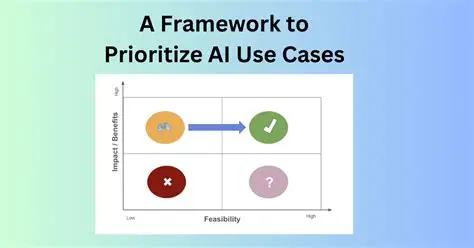

From Static Rules to Adaptive Intelligence

The shift to adaptive pipelines is powered by using AI to prioritize high-impact test cases based on real-time engineering and business signals.

Traditional systems depend on predefined rules: always run smoke tests first, execute regression nightly, trigger certain suites on certain branches. These rules cannot adapt to changing risk profiles.

AI-based prioritization continuously learns from engineering signals. It evaluates what changed, where defects historically occur, which flows matter most to the business, and how long tests take to run. Priorities are recalculated dynamically for every pipeline execution.

Importantly, AI does not replace human judgment. It augments it. Testers remain responsible for defining business criticality and validating outcomes. AI simply ensures that the most relevant tests surface first.

Core Signals AI Uses to Prioritize Test Cases

Signals such as change impact and failure probability are what make AI-based prioritization of high-impact test cases both precise and scalable.

Change impact analysis evaluates the scope of each commit—files, functions, APIs, and configurations—to identify areas of elevated risk.

Test-to-code mapping establishes traceability between tests and components. When a specific service changes, tests covering that service automatically gain priority.

Failure probability modeling learns from historical data. If certain tests frequently fail after similar changes, AI increases their priority in future runs.
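A very small stand-in for such a model is a smoothed failure rate per component and test pair. The sketch below assumes a history of `(component, test, failed)` records, which is a made-up format, and uses add-one (Laplace) smoothing in place of whatever learning technique a real prioritization engine applies.

```python
from collections import defaultdict

# Hypothetical history: (component changed, test name, whether the test failed).
HISTORY = [
    ("billing", "test_invoice_totals", True),
    ("billing", "test_invoice_totals", True),
    ("billing", "test_invoice_totals", False),
    ("billing", "test_login", False),
    ("billing", "test_login", False),
    ("auth", "test_login", True),
]


def failure_rates(history):
    """Estimate P(test fails | component changed) with add-one smoothing."""
    runs = defaultdict(int)
    failures = defaultdict(int)
    for component, test, failed in history:
        runs[(component, test)] += 1
        failures[(component, test)] += int(failed)
    # (failures + 1) / (runs + 2) keeps sparse histories away from 0 and 1.
    return {key: (failures[key] + 1) / (runs[key] + 2) for key in runs}


rates = failure_rates(HISTORY)
print(rates[("billing", "test_invoice_totals")])  # 0.6
print(rates[("billing", "test_login")])           # 0.25
```

Smoothing matters for new or rarely run tests: a single observed failure should raise a test's priority without pinning its estimate at 100%.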

Business criticality weighting ensures that revenue-impacting flows receive appropriate attention. A failed login test carries more weight than a cosmetic UI check.

Execution cost awareness balances value against time. Fast, high-signal tests rise to the top, while long-running or flaky tests are deprioritized when appropriate.

Together, these signals allow AI to recommend a ranked execution order that maximizes risk coverage within limited pipeline time.
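The signals above can be combined in many ways. The following sketch shows one simple option, assumed here for illustration: score each test by expected value per second of runtime, then fill a fixed time budget greedily. The `Candidate` fields, weights, and sample numbers are hypothetical and not taken from any specific tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str
    failure_probability: float  # from historical modeling, 0..1
    criticality: float          # business weight set by the QA team, 0..1
    change_boost: float         # 1.0 if unaffected, higher if mapped to changed code
    duration_s: float           # typical execution time in seconds


def value(c: Candidate) -> float:
    return c.failure_probability * c.criticality * c.change_boost


def rank(candidates):
    """Order tests by expected value per second of runtime, best first."""
    return sorted(candidates, key=lambda c: value(c) / c.duration_s, reverse=True)


def first_stage(candidates, budget_s):
    """Take ranked tests while they fit the time budget; the rest run later."""
    chosen, deferred, used = [], [], 0.0
    for c in rank(candidates):
        if used + c.duration_s <= budget_s:
            chosen.append(c.name)
            used += c.duration_s
        else:
            deferred.append(c.name)
    return chosen, deferred


suite = [
    Candidate("test_login", 0.30, 1.0, 2.0, 20),
    Candidate("test_checkout_e2e", 0.40, 1.0, 2.0, 300),
    Candidate("test_footer_links", 0.05, 0.2, 1.0, 10),
    Candidate("test_invoice_totals", 0.60, 0.9, 2.0, 15),
]
chosen, deferred = first_stage(suite, budget_s=60)
print(chosen)    # ['test_invoice_totals', 'test_login', 'test_footer_links']
print(deferred)  # ['test_checkout_e2e']
```

Note that deferred tests are not dropped. They are returned separately so that a later stage can still run them.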

Why AI-Based Test Prioritization Matters for Enterprise Delivery

For enterprise organizations, the cost of delayed feedback is measured in more than minutes. It affects developer productivity, infrastructure spend, release confidence, and ultimately customer trust.

Using AI to prioritize high-impact test cases transforms test execution from a blunt instrument into a precision tool. It enables faster feedback loops without sacrificing quality, allowing teams to scale automation sustainably.

This is not about running fewer tests. It is about running the right tests first—every time.

How AI-Based Test Prioritization Works in a Real CI/CD Pipeline

In CI/CD environments, using AI to prioritize high-impact test cases enables faster feedback without sacrificing regression confidence.

The flow typically begins when a developer commits code. Instead of triggering a fixed set of test suites, the pipeline first evaluates the scope and nature of the change. Files modified, APIs touched, dependencies affected, and configuration changes are analyzed to establish a preliminary risk profile.

Next, the prioritization engine maps those changes against historical test data. Tests that previously failed after similar changes are elevated. Tests mapped to impacted components gain weight. Business-critical flows receive additional emphasis.

The output is not a binary decision of run-or-skip. It is a ranked execution order. High-impact tests execute first, delivering fast feedback to developers. Medium-impact tests follow. Low-impact or high-cost tests can be deferred to later stages or nightly runs.
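A minimal sketch of this tiering, assuming each test already has an impact score between 0 and 1 and a typical duration, might look as follows. The stage names and every threshold are illustrative assumptions that a team would tune for its own pipeline.

```python
def assign_stage(score: float, duration_s: float) -> str:
    """Place a test in a pipeline stage; thresholds here are illustrative only."""
    if duration_s > 600:
        return "nightly"     # high-cost tests are deferred regardless of score
    if score >= 0.5:
        return "pre-merge"   # high impact: run first for fast feedback
    if score >= 0.2:
        return "post-merge"  # medium impact: run next
    return "nightly"         # low impact: still runs, just later


def plan(tests):
    """Group (name, score, duration_s) tuples by stage, highest score first."""
    stages = {"pre-merge": [], "post-merge": [], "nightly": []}
    for name, score, duration_s in sorted(tests, key=lambda t: t[1], reverse=True):
        stages[assign_stage(score, duration_s)].append(name)
    return stages


stages = plan([
    ("test_profile_page", 0.25, 40),
    ("test_login", 0.80, 20),
    ("test_full_export", 0.70, 900),
    ("test_footer_links", 0.05, 10),
])
print(stages["pre-merge"])   # ['test_login']
print(stages["post-merge"])  # ['test_profile_page']
print(stages["nightly"])     # ['test_full_export', 'test_footer_links']
```

Every test lands in exactly one stage, so coverage is preserved. Only the timing of each test changes.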

This sequencing allows teams to preserve comprehensive coverage without forcing every pipeline to wait for full regression. The result is faster signal, not reduced rigor.

Measurable Benefits of AI-Based Test Prioritization That Enterprises Actually See

Reduced pipeline time and earlier defect detection are direct results of using AI to prioritize high-impact test cases intelligently.

Faster pipelines and higher throughput are often the most immediate gains. By front-loading high-impact tests, teams reduce time to first failure and shorten feedback loops. This accelerates merges and increases delivery velocity without compromising quality.
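Time to first failure is straightforward to measure. The sketch below compares a fixed order with a prioritized one, assuming serial execution and made-up durations. The function and test names are hypothetical.

```python
def time_to_first_failure(order, durations, failing):
    """Seconds of serial execution until the first failing test finishes.

    Returns None when no test in the order fails.
    """
    elapsed = 0.0
    for name in order:
        elapsed += durations[name]
        if name in failing:
            return elapsed
    return None


durations = {"test_footer_links": 10, "test_checkout_e2e": 300, "test_invoice_totals": 15}
failing = {"test_invoice_totals"}

fixed_order = ["test_footer_links", "test_checkout_e2e", "test_invoice_totals"]
prioritized = ["test_invoice_totals", "test_footer_links", "test_checkout_e2e"]

print(time_to_first_failure(fixed_order, durations, failing))  # 325.0
print(time_to_first_failure(prioritized, durations, failing))  # 15.0
```

Both orders run the same three tests. The difference is only how long a developer waits before learning that the change broke something.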

Earlier detection of critical defects changes the economics of quality. Bugs that would previously surface hours later in full regression are now caught minutes after commit, when context is fresh and fixes are cheaper.

Lower infrastructure costs follow naturally. Running fewer long, low-signal tests on every commit reduces compute consumption and parallel runner requirements.

Improved release confidence emerges over time. When teams consistently catch high-risk issues early, deployments become routine instead of stressful.

Across industries, organizations report 30–60% reductions in execution time while maintaining or improving defect detection effectiveness, although actual results depend on suite composition and the quality of historical data.

Common Myths and Legitimate Concerns About AI-Based Test Prioritization

Many concerns fade once teams see that using AI to prioritize high-impact test cases enhances human judgment rather than replacing it.

“AI will miss important tests.” In reality, prioritization does not eliminate coverage. Baseline smoke suites can always run. AI simply determines optimal sequencing beyond that foundation.

“This only works at massive scale.” While value increases with data volume, teams with a few hundred tests already benefit from failure pattern analysis and change impact insights.

“Our historical data isn’t clean enough.” AI models improve incrementally. Even imperfect data can produce better recommendations than static labels.

“This replaces testers.” The opposite is true. By removing execution waste, testers gain time for exploratory testing, risk analysis, and test design—activities where human judgment is irreplaceable.

Where Enterprises Should Start with AI-Based Test Prioritization

A phased adoption approach lets teams get value from using AI to prioritize high-impact test cases without disrupting existing pipelines.

Begin by centralizing execution data. Consolidate CI results, even if initially basic. Visibility is a prerequisite for intelligence.

Next, improve failure classification. Distinguish flaky failures from genuine defects. Capture impacted areas and severity where possible.
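One common heuristic for separating flaky failures from genuine ones is to compare outcomes across reruns of the same commit. The sketch below assumes attempt records arrive as `(test, commit, passed)` tuples, a hypothetical format, and treats mixed outcomes on identical code as flaky.

```python
from collections import defaultdict


def classify_failures(results):
    """Label each (test, commit) pair from its attempt outcomes.

    results: iterable of (test, commit, passed) tuples, one per attempt.
    """
    outcomes = defaultdict(list)
    for test, commit, passed in results:
        outcomes[(test, commit)].append(passed)

    labels = {}
    for key, attempts in outcomes.items():
        if all(attempts):
            labels[key] = "pass"
        elif any(attempts):
            labels[key] = "flaky"            # mixed results on identical code
        else:
            labels[key] = "genuine-failure"  # failed on every attempt
    return labels


results = [
    ("test_login", "abc123", False),
    ("test_login", "abc123", True),
    ("test_checkout", "abc123", False),
    ("test_checkout", "abc123", False),
    ("test_profile", "abc123", True),
]
labels = classify_failures(results)
print(labels[("test_login", "abc123")])     # flaky
print(labels[("test_checkout", "abc123")])  # genuine-failure
```

This heuristic only works when failed tests are actually rerun. A test that fails once with no rerun is labeled a genuine failure here, which may overstate real defects.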

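Introduce AI in recommendation mode. Allow the system to suggest priorities while humans validate outcomes. This builds trust while keeping risk low, because the existing execution order stays in place until the recommendations have proven reliable.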

Integrate prioritization with existing CI/CD tooling. Adoption should reduce friction, not introduce new complexity.

Finally, measure outcomes that matter: time to feedback, defects caught early, rerun rates, and reduction in full-suite executions.

From Test Automation to Test Intelligence

The evolution toward Test Intelligence is fundamentally driven by using AI to prioritize high-impact test cases instead of running everything blindly.

As systems grow more distributed and release cycles accelerate, quality decisions must become predictive rather than reactive. Test intelligence focuses on risk, probability, and impact—not just coverage metrics.

Using AI to prioritize high-impact test cases is foundational to this shift. It enables adaptive pipelines that respond to change instead of assuming stability.

How Techment Helps Enterprises

Techment enables enterprises to operationalize AI-based prioritization of high-impact test cases through data-driven quality engineering and AI readiness.

Through AI-driven quality strategies, Techment helps organizations design prioritization models aligned with business risk and delivery goals. This includes integrating test intelligence into existing CI/CD ecosystems without disrupting workflows.

Techment’s expertise in data engineering and AI readiness ensures that test execution data is structured, reliable, and actionable—an essential foundation for effective prioritization.

By combining platform engineering, governance, and advanced analytics, Techment enables enterprises to operationalize AI responsibly while improving speed, quality, and confidence across the delivery lifecycle.

Conclusion

The challenge in modern QA is no longer test creation. It is test selection.

Using AI to prioritize high-impact test cases allows enterprises to align quality decisions with real risk, accelerate feedback, and scale delivery without sacrificing confidence.

For organizations committed to continuous delivery, intelligent prioritization is not optional. It is the next logical step in quality engineering.

FAQ

1. What does “high-impact” mean in AI-based test prioritization?

High-impact test cases are those most likely to catch business-critical defects early. They typically cover frequently changing code paths, revenue-impacting flows (login, payments, subscriptions), and historically defect-prone areas—not simply the longest or most comprehensive tests.

2. Will AI-based test prioritization skip important tests?

No. AI prioritization focuses on execution order, not elimination. Teams can still run baseline smoke tests on every build and full regression suites nightly. AI ensures the most valuable tests run first when time is limited.

3. How much historical data is required for AI prioritization to work?

AI improves incrementally. While richer historical data increases accuracy, even imperfect or limited data can produce better prioritization than static labels. Value typically grows within weeks as more execution data is collected.

4. Is AI-based test prioritization only useful for large enterprises?

No. While large enterprises see significant gains, mid-sized teams with a few hundred automated tests often benefit quickly—especially when CI pipelines begin to slow and release frequency increases.

5. Does AI-based prioritization replace QA engineers or testers?

Absolutely not. AI replaces manual prioritization and execution waste, not human expertise. Testers gain more time for risk analysis, exploratory testing, and improving test design—areas where human judgment is essential.