RAG best practices help organizations build reliable AI assistants by combining large language models with enterprise knowledge retrieval systems. Key practices include curated data sources, automated refresh pipelines, evaluation frameworks, prompt optimization, and security controls to ensure accurate and trustworthy AI responses.

Enterprise interest in generative AI has grown rapidly over the past two years. Organizations across industries are exploring how large language models (LLMs) can power intelligent assistants, automate support workflows, accelerate engineering productivity, and unlock insights from internal knowledge bases.

However, deploying these systems reliably at scale remains challenging.

Industry surveys consistently show that more than 80% of generative AI initiatives never move beyond proof-of-concept stages. Many projects demonstrate impressive early prototypes but struggle to deliver production-grade reliability, governance, and security.

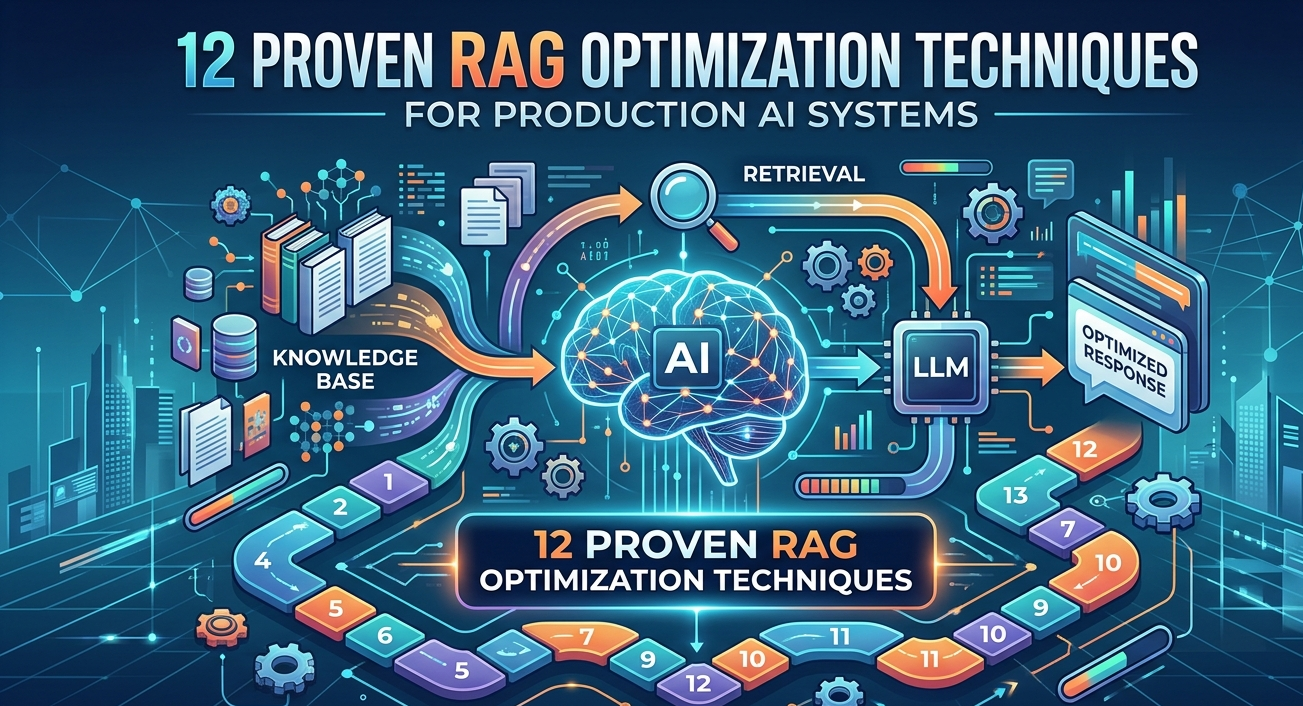

One of the most effective architectural approaches for enterprise AI systems is Retrieval Augmented Generation (RAG).

RAG enhances large language models by allowing them to retrieve relevant context from enterprise knowledge sources before generating responses. Instead of relying solely on pre-trained model knowledge, RAG systems dynamically retrieve information from internal documentation, product knowledge bases, or operational datasets.

This dramatically improves:

- answer accuracy

- factual grounding

- enterprise domain relevance

- transparency and explainability

For organizations building AI assistants, copilots, or knowledge management systems, implementing RAG best practices is critical for moving from experimentation to production.

This article explores the lessons learned from large-scale deployments of enterprise RAG systems. It covers:

- how to curate enterprise knowledge sources

- how to design automated refresh pipelines

- how to evaluate system performance

- how to optimize prompt strategies

- how to implement enterprise-grade security

By following these RAG best practices, organizations can transform generative AI prototypes into scalable enterprise AI platforms.

TL;DR

- RAG best practices are essential for building reliable enterprise AI assistants.

- Most generative AI initiatives fail because RAG systems remain stuck in proof-of-concept stages.

- Production-ready RAG platforms require data curation, automated refresh pipelines, evaluation frameworks, and strong security controls.

- Enterprises should design retrieval pipelines, prompt strategies, and governance frameworks from the beginning.

- Successful organizations treat RAG as core enterprise infrastructure rather than experimental AI projects.

What Are RAG Best Practices?

RAG best practices refer to architectural and operational guidelines used to build reliable Retrieval Augmented Generation (RAG) systems for enterprise AI applications.

These practices ensure that AI assistants provide accurate, grounded, and secure responses by retrieving trusted knowledge before generating answers.

The most important RAG best practices include:

- Curating authoritative knowledge sources

- Implementing automated data refresh pipelines

- Designing robust evaluation frameworks

- Optimizing prompt strategies for grounded responses

- Applying enterprise security controls

- Using hybrid retrieval and reranking models

- Continuously monitoring system performance

Organizations that implement these RAG best practices can transform generative AI prototypes into scalable enterprise knowledge systems.

What Is Retrieval Augmented Generation (RAG)?

Retrieval Augmented Generation (RAG) is an AI architecture that combines large language models with external knowledge retrieval systems. Instead of relying only on training data, RAG retrieves relevant documents from a knowledge base and uses them as context to generate accurate answers.

This architecture significantly reduces hallucinations and enables AI systems to deliver domain-specific enterprise knowledge responses.

As explored in internal AI strategy and road-mapping, improving retrieval precision directly improves generation reliability.

Understanding Retrieval Augmented Generation Architecture

Retrieval Augmented Generation combines information retrieval systems with large language models to create more reliable AI systems.

Instead of relying only on pre-training data, RAG systems dynamically fetch relevant information before generating answers.

How RAG Works

At a high level, a RAG system performs four core steps:

- Ingest enterprise knowledge sources

- Convert documents into vector embeddings

- Retrieve relevant content based on user queries

- Generate grounded responses using LLMs

This architecture ensures that responses are based on real enterprise knowledge rather than model assumptions.

For organizations building AI roadmaps, see: Enterprise AI Strategy in 2026

Core Components of a RAG Architecture

Enterprise RAG systems typically include several layers.

Knowledge ingestion layer

This pipeline collects data from:

- documentation portals

- knowledge bases

- product manuals

- support tickets

- internal wiki platforms

Embedding and indexing layer

Documents are converted into vector embeddings and stored in vector databases such as:

- Pinecone

- Weaviate

- FAISS

- Azure AI Search

Retrieval layer

When a user asks a question, the system performs semantic search across embeddings to retrieve relevant documents.

Generation layer

The LLM receives retrieved context and generates responses grounded in enterprise knowledge.

Read our guide on 10 Effective Steps To Building RAG Applications: From Prototype to Production-Grade Enterprise Systems that provides a step-by-step enterprise roadmap for building RAG applications.

Why Enterprises Prefer RAG Over Model Fine-Tuning

Many organizations initially consider fine-tuning large language models to embed company knowledge.

However, RAG offers several advantages:

- Faster knowledge updates

- Knowledge bases can be updated without retraining models.

- Lower cost

- Fine-tuning large models requires expensive training infrastructure.

- Improved explainability

- RAG responses can cite original knowledge sources.

- Reduced hallucinations

Models rely on retrieved context rather than guessing answers.

For enterprises managing rapidly changing knowledge bases—such as product documentation or support systems—RAG architectures are often the most practical approach.

RAG Best Practice #1: Carefully Curate Enterprise Knowledge Sources

One of the most important RAG best practices is ensuring that the system retrieves information from high-quality knowledge sources.

Poor data quality leads directly to poor AI responses.

The “Garbage In, Garbage Out” Problem

Many organizations make a common mistake when implementing RAG systems.

They attempt to ingest every available data source into their knowledge index.

This often includes:

- historical support tickets

- unverified forum posts

- outdated documentation

- internal Slack conversations

While this approach increases the size of the knowledge base, it frequently reduces answer accuracy.

Large language models can retrieve outdated or incorrect information, producing confusing responses.

Start with Authoritative Knowledge Sources

Successful enterprise RAG implementations begin with curated primary sources.

Typical authoritative sources include:

Technical documentation

API references

Product release notes

Verified knowledge base articles

Official troubleshooting guides

These sources provide high-quality information with minimal ambiguity.

Expand Gradually to Secondary Sources

Once primary documentation is indexed, organizations can expand to secondary knowledge sources.

Examples include:

developer community forums

support ticket resolutions

engineering discussions

However, enterprises should apply strict filtering policies.

Common filters include:

- Recency filtering

- Include only recent content to avoid outdated guidance.

- Authority filtering

- Prioritize verified contributors or expert responses.

- Relevance filtering

Exclude conversations unrelated to product usage or troubleshooting.

Internal vs External Knowledge Stores

Another important RAG best practice involves separating public and private knowledge sources.

Enterprises should maintain distinct vector databases for:

Public knowledge

- documentation

- help center content

- developer guides

Private enterprise knowledge

- internal engineering documentation

- customer case information

- proprietary data

This separation improves both security and governance.

Organizations implementing enterprise AI assistants often integrate RAG architectures with broader data governance frameworks such as those discussed in Techment’s analysis of enterprise data strategies.

RAG Best Practice #2: Build Automated Knowledge Refresh Pipelines

Enterprise knowledge bases evolve constantly.

Product documentation changes frequently, APIs evolve, and operational processes are updated regularly.

If RAG systems do not keep pace with these changes, they quickly become outdated.

The Risk of Static Knowledge Bases

Many organizations treat RAG ingestion as a one-time process.

After initial indexing, the knowledge base is rarely updated.

This leads to several problems:

- AI assistants provide outdated guidance.

- Responses reference deprecated product features.

- Conflicting answers appear when documentation evolves.

- Over time, trust in the AI system erodes.

Continuous Knowledge Refresh

Production RAG systems require automated refresh pipelines. These pipelines monitor knowledge sources and update vector indexes automatically. However, reindexing entire knowledge bases continuously can be expensive. Instead, successful implementations rely on incremental update strategies.

Delta Processing Approach

A practical approach involves detecting only changes in content.

This approach resembles Git’s diff mechanism.

Key steps include:

- Detect content updates

- Validate document structure

- Re-embed modified sections

- Update vector indexes

This approach minimizes compute costs while ensuring knowledge freshness.

Key Components of a Refresh Pipeline

Production pipelines typically include several components.

Change detection – Systems monitor documentation repositories or CMS updates.

Validation layer – Structural validation ensures documents maintain expected formatting.

Incremental indexing – Only modified content is embedded and indexed.

Monitoring systems – Quality metrics detect knowledge degradation.

Why Refresh Pipelines Are Critical for Enterprise AI

Automated refresh pipelines provide several benefits.

Improved answer accuracy – AI responses reflect the latest documentation.

Lower operational overhead – Engineers do not need to manually rebuild indexes.

Faster product iteration – Documentation updates immediately propagate to AI assistants.

This capability is one reason RAG architectures are often preferred over fine-tuning models.

Instead of retraining models every time knowledge changes, organizations can simply update the retrieval layer.

RAG Best Practice #3: Build Robust Evaluation Frameworks

Many organizations underestimate the importance of systematic evaluation frameworks.

During early experiments, teams often test RAG systems informally by asking sample questions.

While this approach works for prototypes, it fails for production deployments.

Why RAG Evaluation Is Complex

RAG systems involve multiple interacting components.

These include:

- chunk size configuration

- embedding models

- retrieval algorithms

- context windows

- prompt strategies

Each parameter affects system performance.

Without rigorous evaluation frameworks, it becomes difficult to identify improvements.

Modern RAG Evaluation Techniques

Several tools and methodologies have emerged for evaluating RAG systems.

Open-source frameworks such as Ragas provide metrics for:

- answer correctness

- context relevance

- hallucination detection

However, enterprises often need customized evaluation pipelines tailored to their domain.

Core Evaluation Metrics

Effective RAG evaluation frameworks measure several dimensions.

Query understanding – Does the system correctly interpret the user’s intent?

Retrieval accuracy – Does the system retrieve relevant documents?

Answer grounding – Are responses supported by cited sources?

Hallucination detection – Does the system generate unsupported claims?

Why Evaluation Frameworks Matter

Evaluation frameworks provide several critical benefits.

They allow organizations to:

- systematically test architecture changes

- measure improvements objectively

- prevent regressions during updates

Without evaluation frameworks, RAG optimization becomes guesswork.

Successful enterprise deployments treat evaluation pipelines as core components of AI infrastructure.

RAG Best Practice #4: Optimize Prompting Strategies for Enterprise Use Cases

Prompt engineering is one of the most overlooked components of production-ready RAG systems. Many teams focus heavily on embeddings, vector databases, and retrieval logic, but underestimate the importance of how prompts guide large language models to generate responses.

In reality, prompting strategy acts as the control layer of a RAG architecture.

It determines how retrieved knowledge is interpreted, synthesized, and presented to users.

Ground Every Response in Retrieved Context

The primary goal of RAG is to reduce hallucinations by grounding responses in trusted knowledge sources.

Prompt templates should explicitly instruct models to:

- Use only retrieved context when answering questions

- Provide citations for referenced information

- Avoid generating unsupported claims

For enterprise deployments, this grounding strategy improves both answer accuracy and auditability.

When users can trace responses back to source documents, trust in AI systems increases significantly.

Teach the Model to Say “I Don’t Know”

One of the most important principles of enterprise RAG best practices is acknowledging system limitations.

AI assistants should recognize when retrieved context does not contain enough information to answer a question.

Prompt instructions should require the model to:

- Indicate when insufficient data is available

- Suggest relevant documentation links

- Avoid guessing or hallucinating responses

This approach prevents misleading outputs and improves the credibility of enterprise AI systems.

Maintain Domain Boundaries

Production RAG systems should operate within clearly defined knowledge domains.

For example, an internal AI assistant trained on product documentation should not attempt to answer unrelated questions about external tools or competitors.

Prompt instructions should therefore enforce domain constraints by directing the model to:

- Focus exclusively on retrieved knowledge sources

- Reject unrelated queries politely

- Maintain consistent response formatting

Handle Multiple Knowledge Sources

Enterprise knowledge bases often contain overlapping or conflicting information.

For example, different versions of product documentation may describe slightly different behaviors.

Prompting strategies should help models synthesize information across sources by:

- highlighting version differences

- referencing multiple citations when necessary

- explaining inconsistencies clearly

RAG Best Practice #5: Implement Enterprise Security Controls

Security considerations are critical for production RAG systems. Unlike traditional search systems, RAG architectures involve generative models that interact directly with enterprise knowledge bases.

Without appropriate safeguards, these systems can expose sensitive information.

Prompt Injection and Hijacking Risks

One of the most well-known threats to AI systems is prompt injection.

In prompt injection attacks, malicious users craft inputs designed to manipulate system behavior.

For example, an attacker might attempt to override prompt instructions or extract sensitive information from internal documents.

Defending against prompt injection requires multiple layers of protection.

These include:

- strict prompt templates

- system-level guardrails

- input sanitization mechanisms

Enterprises should also test AI assistants against adversarial queries before production deployment.

Protecting Sensitive Information

RAG systems frequently process user queries containing sensitive information.

Examples include:

- API keys

- internal error logs

- customer contact details

- confidential documentation

Once this information enters a generative model pipeline, it becomes difficult to guarantee complete removal.

For this reason, organizations should implement automated PII detection and masking mechanisms.

These systems scan incoming queries and redact sensitive data before processing.

Rate Limiting and Bot Protection

Public-facing AI assistants often attract automated scraping or abuse attempts.

Without proper protections, attackers can generate thousands of requests per minute.

This can lead to:

- increased infrastructure costs

- extraction of sensitive knowledge base information

- degraded system performance

Enterprise RAG systems should therefore include:

- rate limiting

- bot detection mechanisms

- request validation layers

Cloud security platforms increasingly offer specialized protections for AI workloads.

Role-Based Access Controls

Another important security principle is controlling access to knowledge sources.

Different users should have different visibility levels within the AI system.

For example:

Engineering teams may access internal technical documentation.

Customer support teams may access support knowledge bases.

External users may access only public documentation.

Role-based access control (RBAC) ensures that retrieval systems query only the data sources authorized for each user.

RAG Architecture Patterns for Production AI Systems

As organizations mature their AI strategies, RAG implementations evolve beyond simple retrieval pipelines.

Modern enterprise architectures incorporate multiple optimization layers to improve performance and reliability.

Hybrid Search Architectures

Basic RAG systems rely solely on vector similarity search.

However, hybrid architectures combine multiple retrieval techniques.

Common approaches include:

semantic vector search

keyword-based search

metadata filtering

Combining these methods improves retrieval accuracy across diverse query types.

Reranking Models

Advanced RAG pipelines often include reranking models.

These models evaluate retrieved documents and reorder them based on relevance.

Reranking significantly improves answer quality because LLMs receive more relevant context.

Query Decomposition

Some enterprise systems break complex queries into smaller components before retrieval.

This technique, known as query decomposition, helps AI systems handle multi-step questions more effectively.

For example, a user might ask:

“Which API version introduced authentication changes, and how does it affect rate limits?”

Query decomposition splits this question into multiple retrieval tasks.

The system then synthesizes answers from each component

Implementation Roadmap for Enterprise RAG Systems

Organizations planning to deploy RAG solutions should follow a structured implementation roadmap.

Phase 1: Define High-Value Use Cases

Successful AI deployments begin with clear business objectives.

Common enterprise RAG use cases include:

developer documentation assistants

customer support AI agents

internal knowledge search systems

sales enablement copilots

Selecting one or two well-defined use cases helps teams focus their architecture.

Phase 2: Build the Knowledge Infrastructure

The next step involves building the knowledge ingestion pipeline.

Key tasks include:

- identifying authoritative data sources

- building document ingestion workflows

- implementing embedding pipelines

- deploying vector databases

During this stage, teams should prioritize data quality over volume.

Phase 3: Deploy Retrieval and Generation Pipelines

Once knowledge is indexed, organizations can implement the core RAG workflow.

This includes:

retrieval algorithms

prompt templates

context formatting

LLM integration

Testing should focus on realistic enterprise queries.

Phase 4: Implement Evaluation Frameworks

Before launching the system, teams must establish evaluation pipelines.

These pipelines measure:

retrieval accuracy

response correctness

hallucination rates

user satisfaction

Continuous evaluation enables ongoing optimization.

Phase 5: Scale and Optimize

Once initial deployments succeed, organizations can expand RAG platforms to additional use cases.

Scaling strategies may include:

multi-tenant architectures

automated refresh pipelines

advanced retrieval techniques

integration with enterprise applications

How Techment Helps Enterprises Build Scalable RAG Platforms

Implementing production-ready RAG systems requires expertise across multiple domains, including data engineering, machine learning infrastructure, AI governance, and enterprise architecture.

Techment partners with organizations to design and deploy scalable AI platforms built on modern retrieval architectures.

Enterprise AI Strategy and Architecture

Techment helps organizations define AI strategies aligned with business objectives.

This includes identifying high-value RAG use cases and designing scalable architectures that integrate with existing enterprise systems.

Organizations exploring AI-driven knowledge systems often begin with data strategy frameworks similar to those outlined in Techment’s enterprise AI insights.

Knowledge Engineering and Data Pipelines

Building reliable RAG systems requires robust knowledge ingestion pipelines.

Techment supports enterprises in:

- curating authoritative knowledge sources

- implementing automated refresh pipelines

- optimizing vector indexing strategies

These pipelines ensure that enterprise AI assistants operate on accurate and up-to-date knowledge.

AI Governance and Security

Enterprise AI systems must meet strict governance and security standards.

Techment helps organizations implement:

- role-based access controls

- data lineage tracking

- AI governance frameworks

These safeguards protect sensitive enterprise information while enabling AI innovation.

Scalable AI Platform Implementation

Techment delivers end-to-end implementation support, including:

- RAG architecture design

- AI infrastructure deployment

- integration with enterprise applications

- performance optimization

Through these services, Techment enables organizations to move beyond experimental AI prototypes and deploy production-grade AI assistants.

Conclusion

Retrieval Augmented Generation has quickly become one of the most effective architectures for building enterprise AI assistants.

By combining large language models with enterprise knowledge bases, RAG systems deliver more accurate, reliable, and transparent responses than standalone generative AI models.

However, building production-ready systems requires careful planning and disciplined engineering practices.

Organizations that successfully deploy RAG platforms consistently follow several key principles:

- curate authoritative knowledge sources

- implement automated refresh pipelines

- build rigorous evaluation frameworks

- design robust prompt strategies

- enforce strong security and governance controls

When these RAG best practices are implemented together, enterprises can transform generative AI from experimental prototypes into mission-critical knowledge systems.

As generative AI continues to evolve, RAG architectures will likely remain a foundational component of enterprise AI strategies.

Organizations that invest in scalable retrieval infrastructures today will be well positioned to unlock the full potential of AI-powered knowledge platforms in the years ahead.

FAQs: Enterprise RAG Implementation

1. What is Retrieval Augmented Generation?

Retrieval Augmented Generation is an AI architecture that combines large language models with information retrieval systems to generate responses grounded in external knowledge sources.

2. Why are RAG systems important for enterprises?

RAG systems improve answer accuracy, reduce hallucinations, and enable AI assistants to access real-time enterprise knowledge.

3. How does RAG differ from model fine-tuning?

Fine-tuning embeds knowledge directly into a model through training. RAG retrieves information dynamically from knowledge sources without retraining the model.

4. What are the biggest challenges in RAG implementation?

Common challenges include data quality management, retrieval accuracy, evaluation frameworks, and securing sensitive knowledge bases.

5. How long does it take to deploy an enterprise RAG system?

Initial implementations can be deployed within a few months, but enterprise-scale platforms often evolve through iterative improvements over time.