Introduction

The introduction of Artificial Intelligence into software testing has already transformed how enterprises design, execute, and scale quality engineering practices. Early AI implementations focused heavily on accelerating automation—primarily through script generation—helping teams reduce manual coding effort and improve testing velocity.

However, this approach only addressed a fraction of the broader testing lifecycle.

Today, a more profound shift is underway: Agentic AI in Testing. This emerging paradigm redefines AI from a passive assistant into an active, autonomous participant capable of orchestrating the entire testing lifecycle. Instead of generating isolated test scripts, AI systems now plan, execute, adapt, and continuously optimize testing strategies with minimal human intervention.

This transition is not incremental—it is transformational.

For enterprise QA leaders, this means rethinking testing from a task-driven activity to a goal-driven, intelligent system. Agentic AI introduces capabilities that resemble a virtual QA engineer—one that understands requirements, identifies risks, executes tests, diagnoses failures, and evolves over time.

In this blog, we explore how Agentic AI in Testing moves beyond script generation to full orchestration, along with its architectural implications, business impact, challenges, and what it means for the future of enterprise quality engineering.

TL;DR Summary

- Agentic AI in Testing moves beyond script generation to full lifecycle orchestration

- Enables autonomous planning, execution, debugging, and optimization

- Reduces manual QA effort while improving coverage and speed

- Transforms QA engineers into strategic quality architects

- Introduces governance, trust, and integration challenges for enterprises

The Evolution of AI in Testing: Why Agentic AI Matters Now

From Automation Scripts to Intelligent Systems

Traditional test automation frameworks were built on deterministic logic. Scripts followed predefined steps, and any change in application behavior often required manual updates. While AI-assisted tools improved script creation, they still operated within a human-defined boundary.

Agentic AI in Testing breaks this limitation.

Instead of relying on explicit instructions, agentic systems operate with intent and objectives. They dynamically determine how to achieve testing goals based on system behavior, historical data, and contextual insights.

This aligns with broader enterprise AI trends highlighted in strategic frameworks like AI-first operating models, where systems are expected to act, not just assist.

Why Enterprises Are Moving Toward Orchestration

Several macro forces are accelerating the adoption of Agentic AI:

- Complex application ecosystems (microservices, APIs, cloud-native architectures)

- Continuous delivery expectations requiring rapid validation cycles

- Explosion of test scenarios across devices, platforms, and user journeys

- Cost pressures on QA teams to deliver more with fewer resources

Industry analysts such as Gartner and IDC describe a broader enterprise shift toward autonomous operations models, in which AI reduces manual intervention across IT functions, including testing.

Agentic AI in Testing fits directly into this transformation.

Strategic Implication for CTOs and QA Leaders

Testing is no longer a bottleneck—it is becoming a competitive differentiator.

Organizations that adopt AI-driven orchestration can:

- Release faster with higher confidence

- Detect issues earlier in the lifecycle

- Reduce operational overhead

- Improve customer experience

For deeper insights into enterprise data and AI strategy foundations, explore: Enterprise AI strategy in 2026.

Understanding Agentic AI in Testing

What Is Agentic AI?

Agentic AI refers to systems capable of:

- Making decisions independently

- Executing multi-step tasks

- Adapting based on outcomes

- Learning continuously

Unlike traditional AI tools that require prompts, agentic systems operate with defined goals and determine the steps needed to achieve them.

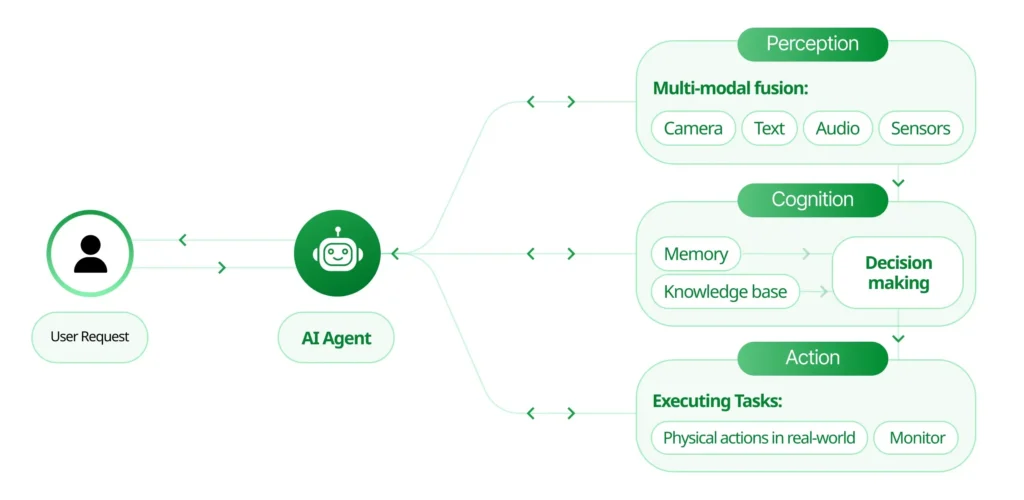

How Agentic AI Works in Testing

In the context of software testing, Agentic AI in Testing behaves like a virtual QA engineer.

It can:

- Understand application requirements

- Identify test scenarios

- Generate and execute tests

- Monitor outcomes

- Debug failures

- Improve test strategies over time

This creates a closed-loop intelligent system rather than a fragmented testing process.

Core Capabilities of Agentic AI in Testing

Goal-Oriented Planning

Instead of executing predefined scripts, AI identifies what needs to be tested based on risk and impact.

Autonomous Execution

Tests are executed dynamically across environments without manual scheduling.

Context Awareness

AI understands system dependencies, APIs, and user flows.

Continuous Learning

Each execution cycle improves future testing decisions.

Enterprise Perspective

Agentic AI is not just a tooling upgrade—it represents a shift in operating model.

Organizations must move from:

- Test case management → Test intelligence systems

- Manual orchestration → AI-driven orchestration

- Reactive debugging → Predictive quality engineering

For organizations building strong data foundations to enable such AI systems, refer to: Data Quality for AI in 2026.

The Limitations of Traditional AI Test Generation

The Illusion of Progress

Many AI-powered testing tools today focus on script generation—automatically creating test scripts from UI interactions or requirements.

While this reduces initial effort, it creates a false sense of maturity.

Script generation addresses only a single stage in the testing lifecycle.

Key Challenges in Current AI Testing Approaches

1. Persistent Maintenance Overhead

Generated scripts still break when:

- UI elements change

- APIs evolve

- Workflows are updated

This leads to ongoing maintenance burdens.

2. Incomplete Test Coverage

AI-generated scripts often focus on:

- Happy paths

- Visible UI interactions

They miss:

- Edge cases

- Integration failures

- Performance scenarios

3. Manual Failure Analysis

When tests fail:

- Logs must be analyzed manually

- Root causes are unclear

- Debugging becomes time-consuming

4. Static Execution Models

Test execution remains:

- Scheduled

- Environment-dependent

- Non-adaptive

There is no intelligence in deciding what to test next.

5. Fragmented Toolchains

Testing ecosystems often involve:

- Multiple tools

- Manual integrations

- Disconnected workflows

Why This Model Does Not Scale

As systems grow more complex, script-based approaches become:

- Fragile

- Costly

- Inefficient

This is where Agentic AI in Testing introduces a paradigm shift—from automation to orchestration.

For insights into scalable enterprise architectures supporting such transformations, see: Microsoft Fabric Architecture: CTO’s Guide to Modern Analytics & AI.

From Script Generation to Full Test Orchestration

The Shift to Intelligent Orchestration

Agentic AI transforms testing into a self-managing system.

Instead of isolated tasks, AI coordinates the entire lifecycle—from planning to optimization.

1. Intelligent Test Planning

Agentic AI analyzes:

- Requirements

- Code changes

- Historical defects

It identifies:

- High-risk areas

- Critical user journeys

- Priority test scenarios

This enables risk-based testing at scale.
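As a rough illustration, risk-based planning can be sketched as a scoring function over the three inputs listed above. The `Area` model, the field names, and the weights are all assumptions made for this example; a real system would derive them from its own requirements, change, and defect data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Area:
    """One testable area of the application (hypothetical model)."""
    name: str
    changed_lines: int       # size of the recent code change
    past_defects: int        # defects historically traced to this area
    critical_journey: bool   # whether it sits on a critical user journey


def risk_score(area: Area) -> float:
    """Combine the three signals into one number. Weights are illustrative."""
    churn = min(area.changed_lines / 100, 1.0)    # cap so huge diffs do not dominate
    history = min(area.past_defects / 10, 1.0)
    journey = 1.0 if area.critical_journey else 0.0
    return 0.4 * churn + 0.4 * history + 0.2 * journey


def plan(areas: list[Area], top_n: int) -> list[str]:
    """Return the names of the top_n riskiest areas, riskiest first."""
    ranked = sorted(areas, key=risk_score, reverse=True)
    return [a.name for a in ranked[:top_n]]


areas = [
    Area("checkout", changed_lines=250, past_defects=8, critical_journey=True),
    Area("profile-page", changed_lines=10, past_defects=1, critical_journey=False),
    Area("search", changed_lines=60, past_defects=4, critical_journey=True),
]
print(plan(areas, top_n=2))  # ['checkout', 'search']
```

The point of the sketch is the shape of the decision, not the numbers: the planner ranks areas by combined evidence instead of running a fixed list of scripts.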

2. Autonomous Test Creation

AI generates:

- UI tests

- API tests

- Integration tests

These are not static scripts—they are adaptive test models.

3. Smart Test Execution

Execution becomes dynamic:

- Tests are prioritized based on impact

- Environments are selected automatically

- Execution timing is optimized
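One simple way to picture impact-based prioritization is a greedy selection under a time budget. The sketch below is an assumption-laden example, not a description of any specific tool: `CandidateTest`, its `impact` score, and the budget are hypothetical, and a greedy pick is a heuristic rather than an optimal schedule.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CandidateTest:
    name: str
    impact: float    # estimated value of running this test (hypothetical score)
    minutes: float   # expected run time


def select_tests(tests: list[CandidateTest], budget_minutes: float) -> list[str]:
    """Greedy pick by impact per minute until the time budget is used up."""
    ranked = sorted(tests, key=lambda t: t.impact / t.minutes, reverse=True)
    chosen: list[str] = []
    used = 0.0
    for t in ranked:
        if used + t.minutes <= budget_minutes:
            chosen.append(t.name)
            used += t.minutes
    return chosen


candidates = [
    CandidateTest("payment_flow", impact=9, minutes=3),
    CandidateTest("login", impact=4, minutes=1),
    CandidateTest("full_regression", impact=10, minutes=20),
    CandidateTest("search_filters", impact=3, minutes=2),
]
print(select_tests(candidates, budget_minutes=6))
# ['login', 'payment_flow', 'search_filters']
```

With a six-minute budget the long regression suite is deferred, while the short, high-value tests run first.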

4. Self-Healing Automation

Self-healing is one of the most impactful capabilities:

- AI detects UI changes

- Updates selectors automatically

- Prevents test failures due to minor changes
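The idea behind self-healing can be sketched without any browser tooling. In the example below a page is modeled as a plain list of attribute dictionaries, and `Locator`, `find`, and `find_with_healing` are hypothetical names invented for illustration. Real tools typically use richer signals (DOM structure, visual similarity) than this attribute fallback.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Locator:
    """A primary selector plus fallbacks recorded when the test last passed."""
    primary: tuple[str, str]                          # e.g. ("id", "submit-btn")
    fallbacks: list[tuple[str, str]] = field(default_factory=list)


def find(page: list[dict[str, str]], attr: str, value: str) -> Optional[dict[str, str]]:
    """Return the first element whose attribute matches, or None."""
    return next((el for el in page if el.get(attr) == value), None)


def find_with_healing(page: list[dict[str, str]], locator: Locator):
    """Try the primary selector; on a miss, try fallbacks and promote the match."""
    element = find(page, *locator.primary)
    if element is not None:
        return element, False
    for candidate in locator.fallbacks:
        element = find(page, *candidate)
        if element is not None:
            locator.fallbacks.remove(candidate)
            locator.fallbacks.append(locator.primary)  # keep the old selector around
            locator.primary = candidate                # "heal": update the selector
            return element, True
    raise LookupError(f"no selector matched for {locator.primary}")


# The button id changed from "submit-btn" to "submit-button" in a new release.
page = [{"id": "submit-button", "data-test": "submit", "text": "Submit"}]
locator = Locator(("id", "submit-btn"), [("data-test", "submit"), ("text", "Submit")])

element, healed = find_with_healing(page, locator)
print(healed, locator.primary)  # True ('data-test', 'submit')
```

Returning the `healed` flag matters for governance: a healed selector is a change the system made on its own, so it should be visible to reviewers rather than silent.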

5. Intelligent Failure Analysis

Agentic AI correlates:

- Logs

- Network calls

- System metrics

It identifies root causes, not just symptoms.
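A minimal sketch of this kind of correlation, assuming every signal has already been reduced to a timestamped anomaly. The `Event` type, the five-second window, and the "earliest anomaly wins" rule are illustrative assumptions, not a description of how any particular product performs root cause analysis.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Event:
    source: str    # "log", "network", or "metric"
    at: float      # seconds since the start of the test run
    message: str


def correlate(failure_at: float, events: list[Event], window: float = 5.0) -> list[Event]:
    """Return events from the `window` seconds before the failure, oldest first."""
    nearby = [e for e in events if failure_at - window <= e.at <= failure_at]
    return sorted(nearby, key=lambda e: e.at)


def likely_root_cause(failure_at: float, events: list[Event],
                      window: float = 5.0) -> Optional[Event]:
    """Heuristic: the earliest anomaly in the window is the candidate root cause."""
    nearby = correlate(failure_at, events, window)
    return nearby[0] if nearby else None


events = [
    Event("log", 13.0, "AssertionError: order not created"),
    Event("network", 12.5, "POST /orders returned 503"),
    Event("metric", 10.0, "db connection pool exhausted"),
    Event("log", 2.0, "cache warm-up retried"),
]
cause = likely_root_cause(failure_at=13.0, events=events)
print(cause.source, "-", cause.message)  # metric - db connection pool exhausted
```

Here the assertion error is the symptom, the 503 is an intermediate effect, and the exhausted connection pool is the earliest related anomaly, which is what the heuristic reports.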

6. Continuous Learning

The system improves over time by:

- Learning from past failures

- Expanding coverage

- Refining prioritization
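One lightweight way to model "learning from past failures" is an exponentially weighted failure rate per test, which then feeds prioritization. This is only a sketch: the smoothing factor `alpha` and the test names are assumptions, and production systems may rely on far richer models.

```python
def update_failure_rate(previous: float, failed: bool, alpha: float = 0.3) -> float:
    """Exponentially weighted failure rate: recent runs count more than old ones."""
    observation = 1.0 if failed else 0.0
    return alpha * observation + (1 - alpha) * previous


rates = {"checkout": 0.0, "search": 0.0}

# Outcome of three past runs: True means the test failed in that run.
history = [
    {"checkout": True, "search": False},
    {"checkout": True, "search": False},
    {"checkout": False, "search": True},
]
for run in history:
    for test, failed in run.items():
        rates[test] = update_failure_rate(rates[test], failed)

# Tests that failed more, and more recently, are scheduled first next time.
priority = sorted(rates, key=rates.get, reverse=True)
print(priority)
```

Each execution cycle nudges the rates, so the ordering of the next run reflects what the previous runs revealed.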

Strategic Outcome

Testing evolves into:

- A self-optimizing system

- A continuous intelligence loop

- A business-aligned quality function

Business Impact of Agentic AI in Testing

For enterprises modernizing analytics and AI ecosystems to support such orchestration, explore: Microsoft Fabric AI solutions

Faster Release Cycles

By automating planning, execution, and debugging:

- Testing cycles shrink dramatically

- CI/CD pipelines accelerate

- Time-to-market improves

Reduced Maintenance Costs

Self-healing and adaptive systems reduce the need for:

- Script rewrites

- Manual updates

- Redundant testing

Improved Test Coverage

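Agentic AI extends coverage beyond happy paths and visible UI interactions to the edge cases, integration failures, and performance scenarios that generated scripts tend to miss.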

Enhanced Insights

AI provides:

- Root cause analysis

- Predictive defect trends

- Quality metrics aligned with business KPIs

Higher Team Productivity

QA teams shift focus to:

- Strategy

- Architecture

- Governance

Enterprise Value

The impact extends beyond QA:

- Improved customer experience

- Reduced production defects

- Lower operational costs

- Stronger compliance

Architecture of Agentic AI in Testing: A Deep Dive

Building Blocks of an Autonomous QA System

Agentic AI in Testing is not a single tool—it is an ecosystem of interconnected capabilities that work together to deliver full lifecycle orchestration. For enterprise adoption, understanding this architecture is critical.

At a high level, an agentic testing system consists of five core layers:

1. Data & Context Layer

This layer provides the foundation of intelligence.

It includes:

- Application requirements

- User behavior data

- Historical defect logs

- System telemetry

- Test execution history

Without high-quality, governed data, Agentic AI cannot make reliable decisions.

This reinforces the importance of strong data governance and quality frameworks, as outlined in: Data Governance for Data Quality.

2. Intelligence Layer

This is where AI models operate.

Capabilities include:

- Natural language understanding (requirements parsing)

- Pattern recognition (defect trends)

- Predictive analytics (risk-based prioritization)

This layer powers decision-making across the testing lifecycle.

3. Agent Layer

This layer is the defining feature of Agentic AI in Testing.

Agents are responsible for:

- Planning test strategies

- Generating test cases

- Executing workflows

- Adapting to system changes

Each agent operates independently but collaborates within the ecosystem.

4. Orchestration Layer

This layer coordinates:

- Test execution pipelines

- Environment provisioning

- Tool integrations

It ensures that all agents work toward a unified goal.
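To make the relationship between the agent and orchestration layers concrete, here is a small sketch in which each agent is a function over a shared context and the orchestrator runs them in sequence. The agent names, the context keys, and the `check` callables are hypothetical stand-ins; a real orchestration layer would also handle environments, retries, and tool integrations.

```python
from typing import Callable

# Each agent is modeled as a function that reads and extends a shared context.
Agent = Callable[[dict], dict]


def planning_agent(ctx: dict) -> dict:
    # Plan only the scenarios that touch changed components.
    ctx["plan"] = [s for s in ctx["scenarios"] if s["component"] in ctx["changed"]]
    return ctx


def execution_agent(ctx: dict) -> dict:
    # Run each planned scenario; `check` is a stand-in for a real test run.
    ctx["results"] = {s["name"]: s["check"]() for s in ctx["plan"]}
    return ctx


def analysis_agent(ctx: dict) -> dict:
    # Summarize which scenarios failed.
    ctx["failed"] = sorted(name for name, ok in ctx["results"].items() if not ok)
    return ctx


def orchestrate(ctx: dict, agents: list[Agent]) -> dict:
    """Run the agents in order against one shared context."""
    for agent in agents:
        ctx = agent(ctx)
    return ctx


context = {
    "changed": {"payments"},
    "scenarios": [
        {"name": "pay_with_card", "component": "payments", "check": lambda: True},
        {"name": "refund", "component": "payments", "check": lambda: False},
        {"name": "edit_profile", "component": "profile", "check": lambda: True},
    ],
}
result = orchestrate(context, [planning_agent, execution_agent, analysis_agent])
print(result["failed"])  # ['refund']
```

The agents stay independent of one another; only the orchestrator knows the order, which is what lets it keep them pointed at a single goal.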

5. Feedback & Learning Loop

The system continuously improves by:

- Learning from failures

- Updating test strategies

- Refining prioritization

Strategic Insight

This architecture mirrors modern data and AI platforms, where intelligence is embedded into operations rather than layered on top.

For organizations aligning testing with enterprise AI platforms, refer to:

https://www.techment.com/blogs/what-is-microsoft-fabric-comprehensive-overview/

The Future Role of QA Engineers: From Testers to Quality Architects

The Shift in Responsibilities

As Agentic AI in Testing becomes mainstream, the role of QA engineers will undergo a fundamental transformation.

Traditional responsibilities included:

- Writing test cases

- Executing scripts

- Reporting defects

These activities will increasingly be handled by AI.

The Rise of Quality Architects

QA professionals will evolve into Quality Architects, focusing on:

- Defining testing strategies

- Setting quality benchmarks

- Governing AI systems

- Validating AI decisions

New Skill Requirements

To thrive in an agentic AI-driven environment, QA engineers must develop:

1. Systems Thinking

Understanding how applications, data, and AI systems interact.

2. AI Literacy

Basic knowledge of machine learning models and decision-making processes.

3. Data Analysis Skills

Interpreting AI-generated insights and metrics.

4. Governance Expertise

Ensuring compliance, fairness, and reliability.

Human + AI Collaboration Model

Agentic AI does not replace QA engineers—it augments them.

The model becomes:

- AI handles execution and optimization

- Humans focus on strategy and oversight

Organizational Impact

Enterprises must:

- Redefine QA roles

- Upskill teams

- Align incentives with quality outcomes

Governance, Trust, and Risk Management in Agentic AI Testing

Why Governance Is Critical

Agentic AI introduces autonomy, which raises important questions:

- Can we trust AI decisions?

- How do we ensure compliance?

- What happens when AI makes mistakes?

Without governance, autonomous systems can become unpredictable and risky.

Key Governance Challenges

1. Trust in AI Decisions

Enterprises must ensure:

- Explainability of AI actions

- Transparency in decision-making

2. Data Privacy and Security

Agentic systems rely heavily on data.

Risks include:

- Exposure of sensitive data

- Compliance violations (GDPR, HIPAA)

3. Integration Complexity

Agentic AI must integrate with:

- CI/CD pipelines

- DevOps tools

- Legacy systems

4. Bias and Incomplete Coverage

AI models may:

- Miss critical scenarios

- Prioritize incorrectly

Governance Framework for Agentic AI

To mitigate risks, enterprises should implement:

Policy Layer

Defines rules, compliance requirements, and constraints.

Monitoring Layer

Tracks AI decisions and system behavior.

Audit Layer

Ensures traceability of actions and outcomes.
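The three layers can be sketched together as a small gatekeeper that checks each proposed agent action against a policy and records the decision. Everything here is an assumption for illustration: the `Policy` fields, the `Governor` class, and the decision labels do not correspond to a specific framework.

```python
from dataclasses import dataclass, field


@dataclass
class Policy:
    """Policy layer: rules and constraints (hypothetical fields)."""
    allowed_environments: set[str]
    actions_needing_approval: set[str]


@dataclass
class Governor:
    policy: Policy
    audit_log: list[dict] = field(default_factory=list)   # audit layer

    def authorize(self, agent: str, action: str, environment: str, reason: str) -> str:
        """Monitoring layer: decide on an action and record why it was proposed."""
        if environment not in self.policy.allowed_environments:
            decision = "deny"
        elif action in self.policy.actions_needing_approval:
            decision = "needs_approval"
        else:
            decision = "allow"
        self.audit_log.append({
            "agent": agent,
            "action": action,
            "environment": environment,
            "reason": reason,
            "decision": decision,
        })
        return decision


governor = Governor(Policy({"qa", "staging"}, {"delete_test_data"}))
decisions = [
    governor.authorize("executor", "run_tests", "staging", "payments code changed"),
    governor.authorize("executor", "run_tests", "production", "payments code changed"),
    governor.authorize("healer", "delete_test_data", "qa", "stale fixtures"),
]
print(decisions)  # ['allow', 'deny', 'needs_approval']
```

Because every decision is logged with the agent's stated reason, the audit trail supports the explainability and traceability goals described above.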

Strategic Takeaway

Agentic AI in Testing must be treated as a governed system, not just a productivity tool.

For enterprises building governance-first data ecosystems, refer to:

https://www.techment.com/blogs/data-governance-for-data-quality-future-proofing-enterprise-data/

Implementation Roadmap: How Enterprises Can Adopt Agentic AI in Testing

Phase 1: Foundation

Focus on:

- Data quality improvement

- Test data management

- Centralized test repositories

Phase 2: AI-Assisted Testing

Introduce:

- AI-based test generation

- Self-healing capabilities

- Intelligent test selection

Phase 3: Partial Orchestration

Enable:

- Automated test execution pipelines

- AI-driven prioritization

- Integrated analytics

Phase 4: Full Agentic Orchestration

Achieve:

- Autonomous test planning

- End-to-end lifecycle management

- Continuous learning systems

Critical Success Factors

- Strong data foundation

- Cross-functional collaboration

- Governance-first approach

- Incremental adoption strategy

Enterprise Insight

Organizations that attempt to jump directly to full autonomy often fail. A phased approach ensures scalability and trust.

For guidance on AI readiness and enterprise transformation, explore:

https://www.techment.com/blogs/ai-ready-enterprise-checklist-microsoft-fabric

How Techment Helps Enterprises Adopt Agentic AI in Testing

Techment enables enterprises to transition from traditional QA models to AI-driven, orchestrated testing ecosystems through a structured, outcome-focused approach.

Data Modernization for AI-Driven Testing

Agentic AI depends on high-quality, governed data.

Techment helps organizations:

- Build scalable data platforms

- Ensure data reliability and accessibility

- Enable AI-ready architectures

Explore: Data Quality for Enterprises

AI Readiness and Strategy

Techment works with enterprise leaders to:

- Define AI adoption roadmaps

- Align testing with business goals

- Identify high-impact use cases

Platform Implementation

Techment supports:

- AI and analytics platforms (including Microsoft ecosystems)

- Integration with DevOps and CI/CD pipelines

- Unified data and testing environments

Governance and Compliance

Techment ensures:

- Responsible AI implementation

- Data privacy and security compliance

- Transparent and auditable AI systems

End-to-End Transformation

From strategy to execution, Techment delivers:

- Roadmap design

- Implementation

- Optimization and scaling

Strategic Positioning

Techment acts as a trusted partner, helping enterprises not just adopt Agentic AI in Testing—but operationalize it for measurable business impact.

Conclusion

Agentic AI in Testing represents the next major evolution in software quality engineering.

By moving beyond script generation to full test orchestration, it fundamentally transforms how testing is planned, executed, and optimized. Enterprises can achieve faster releases, improved quality, and reduced operational overhead—while enabling QA teams to focus on strategic outcomes.

However, this transformation requires more than adopting new tools. It demands a shift in architecture, operating models, governance, and workforce capabilities.

The future of testing is not just automated—it is intelligent, autonomous, and orchestrated.

Organizations that embrace Agentic AI early will gain a significant competitive advantage in delivering high-quality digital experiences at scale.

Techment stands ready to help enterprises navigate this transformation—bridging strategy, technology, and execution to unlock the full potential of AI-driven testing.

FAQs

1. What is Agentic AI in Testing?

Agentic AI in Testing refers to autonomous AI systems that can plan, execute, analyze, and optimize the entire testing lifecycle without constant human intervention.

2. How is Agentic AI different from traditional test automation?

Traditional automation focuses on executing predefined scripts, while Agentic AI enables intelligent orchestration, decision-making, and continuous learning.

3. Is Agentic AI suitable for all enterprises?

It is most beneficial for enterprises with complex systems, large-scale testing needs, and mature data infrastructure.

4. What skills are required to adopt Agentic AI in QA?

Organizations need expertise in AI, data engineering, QA strategy, and governance.

5. What are the biggest risks of Agentic AI in Testing?

Key risks include lack of trust, data privacy concerns, integration complexity, and governance challenges.

Related Read

- Ultimate Guide to Optimizing Spark Workloads in Microsoft Fabric for Data Engineers

- Microsoft Fabric Architecture: CTO’s Guide to Modern Analytics & AI

- Data Governance for Data Quality: Future-Proofing Enterprise Data

- Data Quality for AI in 2026: Enterprise Guide

- Microsoft Fabric vs Power BI: Understanding the Difference

- Microsoft Fabric vs Snowflake: Data Management Showdown

- AI-Ready Enterprise Checklist for Microsoft Fabric