Enterprise leaders are investing heavily in AI, yet many initiatives fail to deliver sustained value. The root cause is rarely the algorithm itself. In practice, most AI failures originate upstream—poor data quality, inconsistent governance, and limited visibility into how data changes affect model behavior. Building Trustworthy AI with Microsoft Fabric requires a shift in mindset: AI reliability is a data engineering problem before it is a modeling problem.

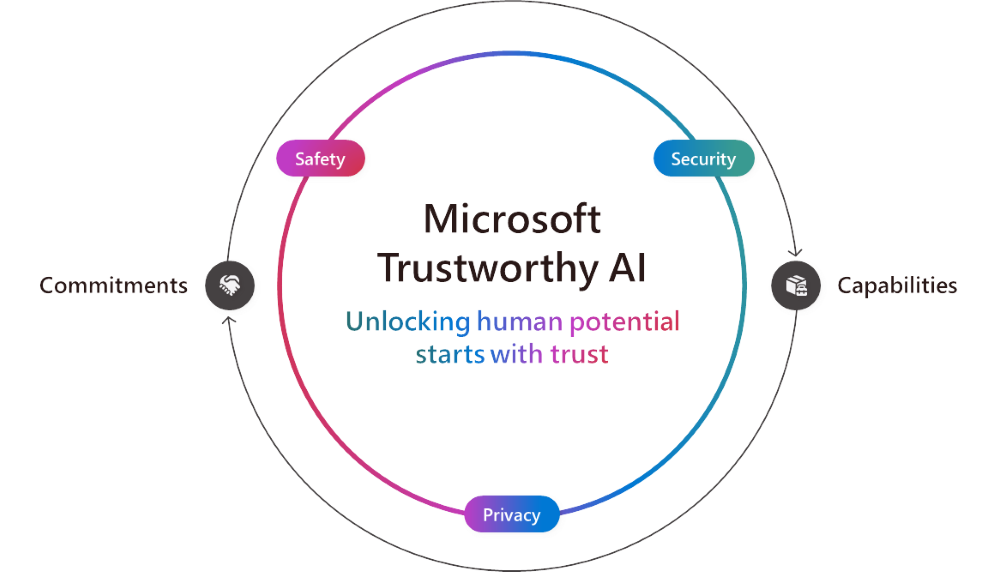

As AI systems become embedded into critical decision-making—pricing, risk scoring, forecasting, customer engagement—the tolerance for unpredictable or opaque outcomes approaches zero. Executives need confidence that AI outputs are explainable, compliant, and repeatable. That confidence can only be achieved when data pipelines are designed with trust as a first-class requirement.

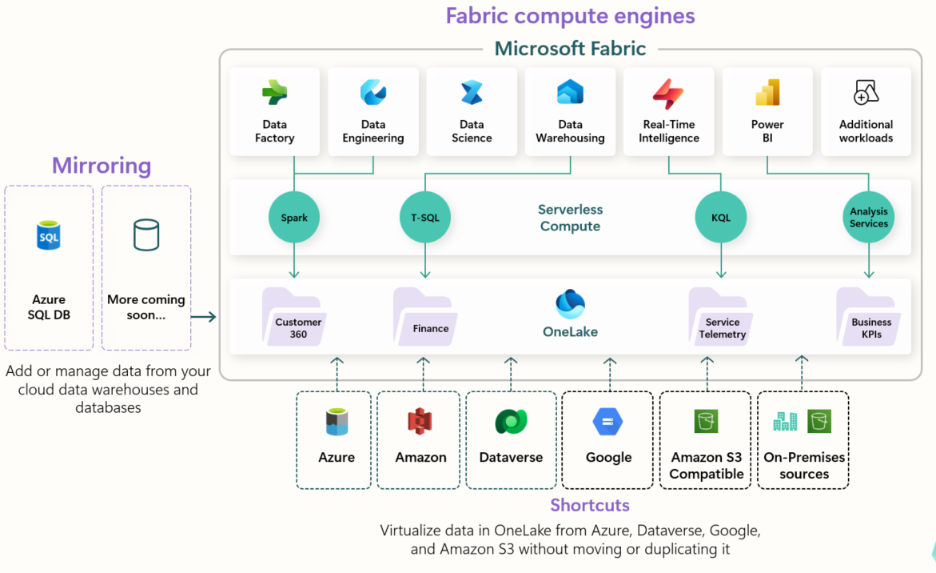

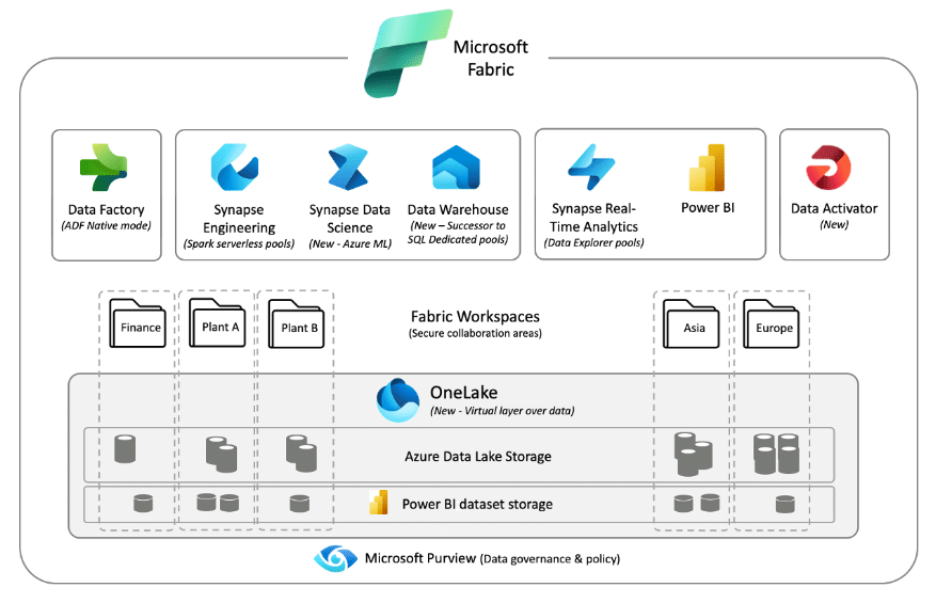

Microsoft Fabric offers a unified analytics and AI platform where data quality validation, governance enforcement, and observability are not afterthoughts. Instead, they are embedded directly into how data is ingested, stored, shared, and consumed by AI workloads. This article explores how enterprises can use Fabric to operationalize trustworthy AI—moving beyond theory into practical, end-to-end execution.

Related Insights: Our Enterprise AI Strategy in 2026: A Practical Guide for CIOs and Data Leaders helps you shape AI direction and deliver organizational impact.

TL;DR Summary

- Most AI failures stem from data quality, governance gaps, and poor observability—not models

- Trustworthy AI requires engineering discipline across ingestion, storage, and consumption

- Microsoft Fabric embeds data quality, governance, and observability into AI pipelines

- Fabric Lakehouse and Delta tables enable versioning, traceability, and reliability

- Purview-integrated governance ensures AI uses only approved, compliant datasets

- Observability closes the loop by linking data changes to AI behavior

Why Trustworthy AI Is Fundamentally a Data Problem

The Hidden Failure Modes of Enterprise AI

Building Trustworthy AI with Microsoft Fabric starts by recognizing that AI reliability is determined far upstream—long before models are trained or deployed—within the data pipelines themselves. When AI initiatives underperform, postmortems often focus on model selection, prompt tuning, or algorithmic bias. While these factors matter, they rarely explain systemic failures at scale. In enterprise environments, AI models are downstream consumers of complex, constantly changing data ecosystems. If those ecosystems are unreliable, no amount of model tuning can compensate.

According to McKinsey’s State of AI survey, organizations that fail to operationalize data quality, governance, and transparency struggle to move AI initiatives beyond experimentation, with data-related issues cited as a leading barrier to scaled AI impact. This is why trustworthy AI on Microsoft Fabric must start with strong data foundations rather than model-centric optimization alone.

Common failure modes include inconsistent schemas across sources, silent data drift, incomplete ingestion, and untracked transformations. These issues propagate invisibly until they surface as incorrect predictions, hallucinated outputs, or regulatory exposure. At that point, organizations scramble reactively—often without the lineage or telemetry needed to diagnose the root cause.

This is why building trustworthy AI with Microsoft Fabric starts with treating data pipelines as production-grade systems. Fabric’s unified architecture closes many of the integration gaps that traditionally hide data quality issues until they surface in production.

Trust as an Enterprise Requirement, Not a Model Feature

Trustworthy AI is often framed as an ethical or compliance concern. While governance and ethics are critical, trust is ultimately an operational attribute. Can leaders rely on AI outputs to be consistent? Can engineers trace decisions back to source data? Can compliance teams prove that sensitive data was handled appropriately?

Without integrated data quality checks, governance controls, and observability, these questions cannot be answered confidently. Fabric addresses this by collapsing analytics, data engineering, governance, and AI workloads into a single platform—reducing fragmentation and increasing accountability.

Related Insights: This perspective aligns with Techment’s view in Fabric AI Readiness: How to Prepare Your Data for Scalable AI Adoption, where AI success is framed as a maturity journey rooted in data foundations rather than experimentation.

Data Quality as the First Pillar of Trustworthy AI

Why AI Pipelines Amplify Data Quality Issues

For enterprises building trustworthy AI with Microsoft Fabric, data quality is not a hygiene task—it is a structural requirement for reliable AI outcomes. Traditional analytics can often tolerate minor data imperfections. Dashboards may lag, aggregates may smooth inconsistencies, and human judgment can fill gaps. AI systems, however, amplify data quality issues. Small errors in training or inference data can cascade into biased predictions, unstable outputs, or degraded performance over time.

AI models assume that incoming data conforms to learned patterns. When schema changes, missing values, or delayed ingestion go undetected, models keep operating on faulty assumptions. The result is silent failure: outputs that look plausible but are wrong. This is arguably the most dangerous outcome in enterprise AI.

Building trustworthy AI with Microsoft Fabric therefore means catching these issues at the earliest possible stage, ingestion, rather than relying on downstream remediation.

Fabric Ingestion with Built-In Validation

Microsoft Fabric allows enterprises to embed data quality validation directly into ingestion pipelines. Using Dataflows Gen2, Spark notebooks, or pipelines, teams can enforce checks for schema consistency, freshness, completeness, and volume anomalies before data ever reaches AI consumers.

Rather than relying on downstream remediation, Fabric enables a “shift-left” approach. Data that fails validation can be quarantined, flagged, or automatically remediated. This reduces the risk of corrupted datasets being used for model training or inference.
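Below is a minimal sketch of a shift-left check. It is written in plain Python so it runs anywhere. In a Fabric Spark notebook, the same rules would typically run over a DataFrame before the write. The `orders` schema, the thresholds, and the `validate_batch` helper are hypothetical illustrations, not Fabric APIs.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone

# Hypothetical expected schema for an incoming "orders" batch (illustrative only).
EXPECTED_SCHEMA = {"order_id": str, "amount": float, "event_time": datetime}


@dataclass
class BatchResult:
    valid: list = field(default_factory=list)
    quarantined: list = field(default_factory=list)   # (record, reason) pairs
    batch_errors: list = field(default_factory=list)  # batch-level failures


def validate_batch(records, expected_min_rows, max_staleness, now=None):
    """Split a batch into valid and quarantined records and report batch-level errors."""
    now = now if now is not None else datetime.now(timezone.utc)
    result = BatchResult()
    for rec in records:
        # Schema consistency: the record must carry exactly the expected columns.
        if set(rec) != set(EXPECTED_SCHEMA):
            result.quarantined.append((rec, "schema mismatch"))
        # Completeness: required fields may not be null.
        elif any(rec[col] is None for col in EXPECTED_SCHEMA):
            result.quarantined.append((rec, "missing value"))
        # Type check against the expected schema.
        elif not all(isinstance(rec[c], t) for c, t in EXPECTED_SCHEMA.items()):
            result.quarantined.append((rec, "type mismatch"))
        # Freshness: records older than the allowed window are held back.
        elif now - rec["event_time"] > max_staleness:
            result.quarantined.append((rec, "stale record"))
        else:
            result.valid.append(rec)
    # Volume: a batch well below its usual size often signals incomplete ingestion.
    if len(records) < expected_min_rows:
        result.batch_errors.append(
            f"volume {len(records)} below minimum {expected_min_rows}"
        )
    return result


now = datetime(2026, 1, 15, 12, 0, tzinfo=timezone.utc)
batch = [
    {"order_id": "A1", "amount": 19.99, "event_time": now - timedelta(minutes=5)},
    {"order_id": "A2", "amount": None, "event_time": now},
    {"order_id": "A3", "amount": 5.0, "event_time": now - timedelta(days=3)},
]
res = validate_batch(batch, expected_min_rows=2, max_staleness=timedelta(hours=24), now=now)
```

The sketch keeps quarantined records and their reasons instead of silently dropping them. That gives data owners something concrete to fix and leaves an audit trail.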

Related Insights: This approach aligns with Techment’s perspective in Data Quality for AI in 2026: The Ultimate Blueprint for Accuracy, Trust & Scalable Enterprise Adoption, which emphasizes proactive quality engineering over reactive monitoring.

Standardizing Quality Across Domains

Large enterprises rarely suffer from a lack of data. The challenge is inconsistency across domains, business units, and geographies. Fabric’s unified data experience allows organizations to define standardized quality rules that apply across pipelines while still accommodating domain-specific logic.

By centralizing quality metrics and thresholds, enterprises create a shared language of data trust. AI teams no longer need to guess whether a dataset is “good enough”—quality becomes measurable, auditable, and enforceable.
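One way to picture centralized quality rules with domain-specific extensions is a shared rule set that is merged with per-domain additions and thresholds. The sketch below uses hypothetical rule names and thresholds. It is not a Fabric feature or configuration format.

```python
from typing import Callable, Dict

Rule = Callable[[dict], bool]

# Hypothetical enterprise-wide rules shared by every domain.
GLOBAL_RULES: Dict[str, Rule] = {
    "has_id": lambda r: bool(r.get("id")),
    "has_country": lambda r: r.get("country") is not None,
}

# Hypothetical domain-specific rules layered on top of the global ones.
DOMAIN_RULES: Dict[str, Dict[str, Rule]] = {
    "finance": {"non_negative_amount": lambda r: (r.get("amount") or 0) >= 0},
}

# Assumed minimum share of fully passing records per domain.
THRESHOLDS = {"default": 0.95, "finance": 0.99}


def quality_report(records, domain):
    """Score a dataset against global plus domain rules and compare to its threshold."""
    rules = {**GLOBAL_RULES, **DOMAIN_RULES.get(domain, {})}
    passed = sum(all(rule(r) for rule in rules.values()) for r in records)
    score = passed / len(records) if records else 0.0
    threshold = THRESHOLDS.get(domain, THRESHOLDS["default"])
    return {"domain": domain, "rules": sorted(rules), "score": score,
            "threshold": threshold, "usable": score >= threshold}


records = [{"id": str(i), "country": "DE", "amount": 10} for i in range(97)]
records += [{"id": "neg", "country": "DE", "amount": -5}] * 3
finance = quality_report(records, "finance")      # 97 of 100 pass, below 0.99
marketing = quality_report(records, "marketing")  # no amount rule, all 100 pass
```

In this example, the same dataset is usable for one domain and not for another. "Good enough" becomes an explicit, per-domain number instead of a guess.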

Lakehouse Foundations: Reliable Data for AI at Scale

Why Storage Architecture Matters for Trust

When building trustworthy AI with Microsoft Fabric, the Lakehouse architecture becomes the backbone for consistency, versioning, and AI reproducibility. Data quality checks alone are insufficient if the underlying storage layer cannot preserve history, track changes, or enforce contracts. AI systems are particularly sensitive to untracked changes because retraining, rollback, and root-cause analysis all depend on historical context.

Microsoft Fabric’s Lakehouse architecture, built on Delta tables, provides the structural foundation that trustworthy AI requires. It combines the flexibility of data lakes with the reliability traditionally associated with data warehouses. Without these Delta-based foundations, trustworthy AI at enterprise scale becomes operationally fragile.

Delta Tables and Data Contracts

Delta tables enable schema enforcement, ACID transactions, and time travel. These capabilities are critical for AI workloads. Schema enforcement prevents breaking changes from propagating silently. Versioning allows teams to reproduce past model behavior by referencing the exact data snapshot used at a given point in time.
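In Fabric Spark notebooks, Delta Lake time travel is exposed through reader options such as `versionAsOf` and `timestampAsOf`, or through `VERSION AS OF` in Spark SQL. The toy model below does not reflect how Delta works internally, since Delta relies on a transaction log. It only illustrates the two behaviors this paragraph depends on: rejecting writes that break the schema, and reading an earlier snapshot by version number. The class and column names are hypothetical.

```python
import copy


class SchemaMismatch(Exception):
    """Raised when a write does not match the table's declared schema."""


class VersionedTable:
    """Toy append-only table that enforces a fixed schema and keeps every version."""

    def __init__(self, schema):
        self.schema = dict(schema)  # column name -> Python type
        self._versions = [[]]       # version 0 is the empty table

    def append(self, rows):
        # Schema enforcement: validate every row before committing anything.
        for row in rows:
            if set(row) != set(self.schema) or not all(
                isinstance(row[c], t) for c, t in self.schema.items()
            ):
                raise SchemaMismatch(f"row {row!r} does not match {self.schema}")
        # All-or-nothing commit: the new version exists only if every row passed.
        self._versions.append(self._versions[-1] + [dict(r) for r in rows])
        return len(self._versions) - 1

    def read(self, version=None):
        # Time travel: return the latest snapshot, or any earlier version by number.
        v = len(self._versions) - 1 if version is None else version
        return copy.deepcopy(self._versions[v])


table = VersionedTable({"customer_id": str, "score": float})
v1 = table.append([{"customer_id": "c1", "score": 0.4}])
v2 = table.append([{"customer_id": "c2", "score": 0.9}])
try:
    table.append([{"customer_id": "c3", "score": "high"}])  # wrong type for score
    rejected = False
except SchemaMismatch:
    rejected = True
```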

Data contracts further strengthen trust by formalizing expectations between data producers and consumers. When contracts are violated, pipelines can fail fast—preventing unreliable data from contaminating AI workflows.
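A data contract can be as simple as a machine-checkable list of column expectations. The `ColumnContract` type and the orders contract below are hypothetical. The sketch shows only the fail-fast behavior: the first violation stops the pipeline with a clear message.

```python
from dataclasses import dataclass
from typing import FrozenSet, Optional


class ContractViolation(Exception):
    """Raised when data breaks the producer/consumer contract."""


@dataclass(frozen=True)
class ColumnContract:
    name: str
    dtype: type
    nullable: bool = False
    allowed: Optional[FrozenSet] = None  # optional set of permitted values


def enforce_contract(rows, contract):
    """Check every row against the contract; raise on the first violation (fail fast)."""
    for i, row in enumerate(rows):
        for col in contract:
            value = row.get(col.name)  # a missing column is treated as null
            if value is None:
                if not col.nullable:
                    raise ContractViolation(f"row {i}: {col.name} is null")
                continue
            if not isinstance(value, col.dtype):
                raise ContractViolation(f"row {i}: {col.name} is not {col.dtype.__name__}")
            if col.allowed is not None and value not in col.allowed:
                raise ContractViolation(f"row {i}: {col.name}={value!r} not allowed")
    return rows


# Hypothetical contract agreed between an orders producer and its AI consumers.
ORDERS_CONTRACT = [
    ColumnContract("order_id", str),
    ColumnContract("status", str, allowed=frozenset({"open", "shipped", "cancelled"})),
    ColumnContract("discount", float, nullable=True),
]

good = [{"order_id": "o1", "status": "open", "discount": None}]
bad = [{"order_id": "o2", "status": "lost", "discount": 0.1}]

enforce_contract(good, ORDERS_CONTRACT)  # passes silently
try:
    enforce_contract(bad, ORDERS_CONTRACT)
    failure = None
except ContractViolation as err:
    failure = str(err)
```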

Related Insights: Explore what defines an AI-First Enterprise, how Copilot and intelligent business apps transform workflows, and how Techment helps organizations chart their roadmap from pilot to scale.

Supporting Both Training and Inference

AI systems operate across two distinct modes: training and inference. Fabric’s Lakehouse supports both without duplicating data or tooling. Historical, curated datasets can be used for training, while fresh, validated data feeds inference workloads.

This unified approach reduces complexity and ensures consistency. Models trained on governed, high-quality data are less likely to encounter surprises when deployed into production.
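To keep training and inference consistent, a team might pin each training run to a specific table version and store a fingerprint of that snapshot alongside the model. The sketch below works under that assumption. The table name, version number, and manifest fields are hypothetical.

```python
import hashlib
import json


def fingerprint(rows):
    """Order-independent SHA-256 fingerprint of a snapshot (rows must be JSON-serializable)."""
    row_digests = sorted(
        hashlib.sha256(json.dumps(r, sort_keys=True).encode()).hexdigest() for r in rows
    )
    return hashlib.sha256("".join(row_digests).encode()).hexdigest()


def training_manifest(table_name, version, rows):
    """Record exactly which snapshot a model was trained on."""
    return {
        "table": table_name,
        "version": version,
        "row_count": len(rows),
        "fingerprint": fingerprint(rows),
    }


def matches_manifest(manifest, rows):
    """Confirm that data read for retraining or audit is the snapshot that was recorded."""
    return len(rows) == manifest["row_count"] and fingerprint(rows) == manifest["fingerprint"]


# Hypothetical curated training table, pinned at version 7.
snapshot = [{"id": 1, "label": 0}, {"id": 2, "label": 1}]
manifest = training_manifest("curated.churn_features", 7, snapshot)
```

Row order does not affect the fingerprint, so the same snapshot re-read in a different order still matches. Any added, removed, or edited row does not match.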

Related Insights: Enterprise AI Strategy in 2026: A Proven Roadmap for Future-Ready Enterprises

Governance as a Trust Multiplier in AI Systems

Why Ungoverned AI Is an Enterprise Risk

Building trustworthy AI with Microsoft Fabric depends on governance that is enforced by platform design, not by policy documents alone. As AI adoption accelerates, governance gaps become existential risks. Models trained on sensitive or unapproved data expose organizations to regulatory penalties, reputational damage, and loss of customer trust. In decentralized environments, it is often unclear which datasets are approved for AI use and which are not. Governance embedded in the platform, rather than layered on afterward, is therefore a defining advantage in regulated industries.

Trustworthy AI requires more than access control. It requires context—understanding what data represents, where it originated, how it can be used, and who is accountable.

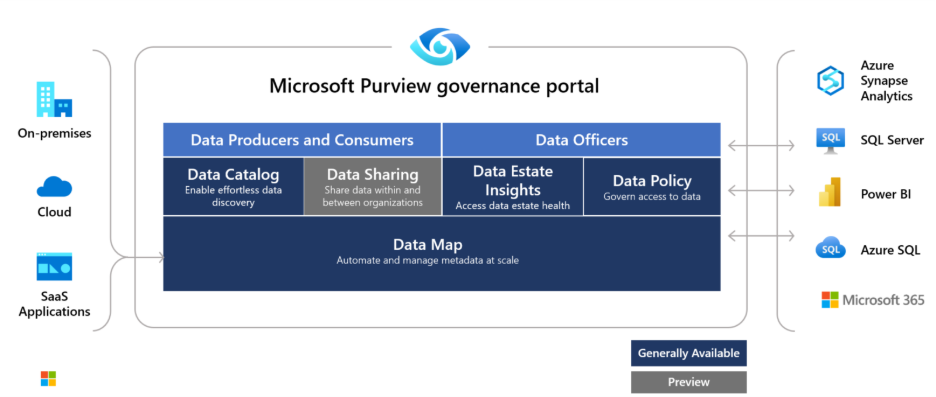

Fabric and Purview: Embedded Governance

By integrating Microsoft Fabric with Microsoft Purview, enterprises can embed governance directly into AI pipelines. Data assets are automatically cataloged, classified, and labeled as they move through Fabric.

Sensitive data can be identified using built-in classifiers, while custom business classifications add domain context. Certification workflows allow data stewards to approve datasets for specific use cases, including AI training and inference.

This ensures that AI workloads are restricted to governed, approved data, reducing risk without slowing innovation.

Related Insights: Techment explores this in depth in Data Governance for Data Quality: Future-Proofing Enterprise Data.
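To make the classification idea concrete, here is a deliberately simple, pattern-based column classifier. It is a toy for illustration only and does not use Purview’s classifiers or APIs. The `Email` and `CreditCardLike` patterns and the 80% match ratio are assumptions.

```python
import re

# Hypothetical, deliberately simple patterns; production classifiers are far more robust.
CLASSIFIERS = {
    "Email": re.compile(r"^[^@\s]+@[^@\s]+\.[A-Za-z]{2,}$"),
    "CreditCardLike": re.compile(r"^(?:\d[ -]?){13,16}$"),
}


def classify_columns(rows, min_match_ratio=0.8):
    """Label a column when most of its non-empty values match a classifier pattern."""
    labels = {}
    columns = sorted({col for row in rows for col in row})
    for col in columns:
        values = [str(row[col]) for row in rows if row.get(col) not in (None, "")]
        if not values:
            continue
        for label, pattern in CLASSIFIERS.items():
            hits = sum(bool(pattern.match(v)) for v in values)
            if hits / len(values) >= min_match_ratio:
                labels.setdefault(col, []).append(label)
    return labels


rows = [
    {"customer": "Anna", "contact": "anna@example.com", "card": "4111 1111 1111 1111"},
    {"customer": "Ben", "contact": "ben@example.org", "card": None},
]
labels = classify_columns(rows)
```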

Enforcing Policy at Consumption Time

Governance is only effective if it is enforced. Fabric enables policy-based access controls that apply consistently across analytics, reporting, and AI workloads. AI teams cannot bypass governance by copying data into shadow environments.

This consumption-time enforcement is critical. It ensures that even as new AI use cases emerge, they inherit existing governance policies automatically—preserving trust at scale.
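Consumption-time enforcement can be sketched as one decision function that every read path calls. It combines three inputs: the dataset’s sensitivity label, the caller’s clearance, and whether a steward has certified the dataset for the requested purpose. The roles, labels, and purposes below are hypothetical and do not reflect Fabric or Purview defaults.

```python
from dataclasses import dataclass, field

# Hypothetical sensitivity ladder and role clearances (not Purview or Fabric defaults).
SENSITIVITY_ORDER = ["public", "internal", "confidential", "restricted"]
ROLE_CLEARANCE = {"analyst": "internal", "ml_engineer": "confidential"}


@dataclass
class DatasetMeta:
    name: str
    sensitivity: str                                 # one of SENSITIVITY_ORDER
    certified_for: set = field(default_factory=set)  # purposes a steward has approved


def authorize(dataset, role, purpose):
    """Decide a read request; intended to run on every access, whatever the workload."""
    clearance = ROLE_CLEARANCE.get(role)
    if clearance is None:
        return False, f"unknown role {role!r}"
    if SENSITIVITY_ORDER.index(dataset.sensitivity) > SENSITIVITY_ORDER.index(clearance):
        return False, f"{dataset.sensitivity} exceeds {role} clearance"
    if purpose not in dataset.certified_for:
        return False, f"{dataset.name} not certified for {purpose}"
    return True, "ok"


crm = DatasetMeta("crm_contacts", "confidential", {"reporting"})
features = DatasetMeta("churn_features", "internal", {"ai_training", "reporting"})
```

Because the decision keys on dataset metadata and purpose, a new AI use case that calls the same function inherits the existing rules without new policy code.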

Observability: Closing the Trust Loop in Enterprise AI

Why Observability Is the Missing Link in Trustworthy AI

Building trustworthy AI with Microsoft Fabric requires observability that connects data behavior directly to AI outcomes across the lifecycle. Even with strong data quality controls and governance frameworks, enterprises often struggle to answer one critical question: Why did the AI behave this way? Without observability, AI systems remain opaque. When outputs deviate from expectations, teams are left guessing whether the root cause lies in data drift, upstream transformations, pipeline failures, or legitimate changes in business conditions.

Observability is not traditional monitoring. It is the ability to continuously understand the health, behavior, and impact of data as it flows through AI pipelines. For enterprises serious about building trustworthy AI with Microsoft Fabric, observability is what transforms static controls into a living, adaptive system of trust.

Without it, AI operations become an exercise in reactive troubleshooting rather than controlled engineering. Fabric embeds observability across the analytics lifecycle, so organizations can spot and explain issues proactively instead of responding to incidents after the fact. This capability becomes essential as AI systems scale across multiple domains, geographies, and regulatory environments.

From Data Health to AI Behavior

Observability in Fabric operates across multiple layers. At the data layer, teams can monitor schema drift, freshness, volume anomalies, and quality scores over time. These metrics provide early signals that something is changing—often before AI outputs are affected.
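Two of the data-layer signals mentioned above, volume anomalies and freshness, take only a few lines of standard-library Python to compute. The z-score threshold of 3 and the six-hour SLA in the example are assumptions to tune per dataset, not Fabric defaults.

```python
from datetime import datetime, timedelta
from statistics import mean, stdev


def volume_anomaly(history, today, z_threshold=3.0):
    """Flag today's row count when it lies more than z_threshold std devs from history."""
    if len(history) < 2:
        return False, 0.0  # not enough history to judge
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        z = 0.0 if today == mu else float("inf")
    else:
        z = (today - mu) / sigma
    return abs(z) > z_threshold, z


def freshness_breach(last_loaded, now, sla):
    """True when the most recent successful load is older than the freshness SLA."""
    return now - last_loaded > sla


# Hypothetical daily row counts for one table over the past five loads.
history = [10_000, 10_250, 9_900, 10_100, 10_050]
flagged, z = volume_anomaly(history, 4_000)
```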

At the semantic and consumption layers, observability connects data changes to downstream analytics and AI workloads. When an AI system produces unexpected results, engineers can trace the lineage backward to identify which dataset, transformation, or ingestion event triggered the change.
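Backward lineage tracing is essentially a graph walk from the affected output to its sources, filtered by recent change events. The lineage graph and event log below are hypothetical. In Fabric, lineage would come from the platform’s lineage view or from Purview, not from a hand-written dictionary.

```python
from collections import deque

# Hypothetical lineage graph: each asset maps to the assets it is built from.
UPSTREAM = {
    "churn_model_scores": ["churn_features"],
    "churn_features": ["crm_clean", "billing_clean"],
    "crm_clean": ["crm_raw"],
    "billing_clean": ["billing_raw"],
}

# Hypothetical recent change events keyed by asset.
CHANGE_EVENTS = {
    "billing_raw": "schema change: amount column became string",
    "marketing_raw": "late load",
}


def upstream_assets(asset):
    """Breadth-first walk from an AI output back to every contributing asset."""
    seen, queue, order = {asset}, deque([asset]), []
    while queue:
        for parent in UPSTREAM.get(queue.popleft(), []):
            if parent not in seen:
                seen.add(parent)
                order.append(parent)
                queue.append(parent)
    return order


def suspects(asset, change_events):
    """Upstream assets with a recorded change event (schema change, late load, etc.)."""
    return [a for a in upstream_assets(asset) if a in change_events]
```

In this example, the late load on `marketing_raw` is ignored because it does not feed the affected output. That narrowing is what turns an AI incident into a diagnosable event.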

This end-to-end visibility is what allows organizations to treat AI incidents as diagnosable engineering events rather than mysterious black-box failures. Techment emphasizes this closed-loop approach in Data Quality For AI, where observability is positioned as a prerequisite for operational AI at scale.

Detecting and Managing Data Drift in AI Pipelines

Drift as an Inevitable Reality

In dynamic business environments, data drift is inevitable. Customer behavior changes, products evolve, regulations shift, and external signals fluctuate. AI systems trained on historical data must adapt, or they risk becoming obsolete or misleading. In practice, building trustworthy AI with Microsoft Fabric means assuming drift will occur and engineering for early detection and response.

The challenge, then, is not preventing drift but detecting and managing it. Without observability, drift often goes unnoticed until performance degradation becomes severe. By then, trust in AI among business stakeholders erodes rapidly.

Fabric enables continuous drift detection by tracking statistical changes, schema evolution, and distribution shifts across datasets. These signals can be correlated with AI performance metrics, allowing teams to understand not just that drift occurred, but why it matters.
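The text does not name a specific statistic. As one common choice, the sketch below computes the Population Stability Index (PSI) between a baseline sample and a current sample using equal-width bins. PSI is a swapped-in technique for illustration, not necessarily what any Fabric feature uses. Quantile-based bins are another frequent choice.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a baseline sample and a current sample.

    Bin edges come from the baseline's range; eps avoids log(0) for empty bins.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0

    def shares(sample):
        counts = [0] * bins
        for x in sample:
            # Values outside the baseline range are clamped into the edge bins.
            idx = min(max(int((x - lo) / width), 0), bins - 1)
            counts[idx] += 1
        return [max(c / len(sample), eps) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = [i / 100 for i in range(100)]             # roughly uniform on [0, 1)
shifted = [min(x + 0.5, 0.99) for x in baseline]     # mass pushed to the upper bins
```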

Linking Drift to Business Impact

One of the most powerful aspects of observability in Fabric is the ability to contextualize technical signals in business terms. A change in data distribution is not inherently bad. The question is whether it affects decision quality, compliance, or customer outcomes.

By linking data observability metrics with downstream AI usage—reports, APIs, decision systems—enterprises can prioritize responses based on impact. Minor drift in a low-risk model may warrant monitoring, while similar drift in a regulatory or financial model may require immediate intervention.
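The risk-based response can be written down as an explicit matrix, so the same drift signal triggers different actions depending on the model’s risk tier. The drift score is assumed to be a PSI value. The 0.1 and 0.25 cut-offs are a commonly cited rule of thumb. The tiers and actions are hypothetical.

```python
def drift_level(psi_value):
    # Commonly cited PSI rule of thumb: <0.1 stable, 0.1-0.25 moderate, >0.25 significant.
    if psi_value < 0.1:
        return "stable"
    return "moderate" if psi_value <= 0.25 else "significant"


# Hypothetical response matrix: (drift level, model risk tier) -> action.
RESPONSES = {
    ("stable", "low"): "none",
    ("stable", "high"): "monitor",
    ("moderate", "low"): "monitor",
    ("moderate", "high"): "investigate",
    ("significant", "low"): "investigate",
    ("significant", "high"): "halt_and_review",
}


def respond(psi_value, risk_tier):
    """Map a drift score and a model's risk tier to an operational response."""
    return RESPONSES[(drift_level(psi_value), risk_tier)]
```

With this matrix, the same moderate drift (PSI 0.18) leads to monitoring for a low-risk model but to investigation for a high-risk one. This mirrors the prioritization described above.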

This risk-based approach aligns with executive expectations. Trustworthy AI is not about eliminating all uncertainty; it is about managing uncertainty intelligently.

Governance, Observability, and Accountability

Making Ownership Explicit

Building trustworthy AI with Microsoft Fabric demands clear accountability, supported by transparent lineage, ownership, and usage visibility.

Trust breaks down when accountability is unclear. In many organizations, data ownership, model ownership, and business ownership are fragmented. When something goes wrong, responsibility becomes diffuse.

Fabric’s integration of governance and observability helps clarify ownership by making data lineage and usage transparent. When an AI system relies on a dataset, it becomes clear who owns that dataset, how it is governed, and how it has changed over time.

This transparency supports stronger operating models. Data stewards, platform teams, and AI engineers can collaborate using shared evidence rather than assumptions.

Related Insights: Techment outlines these operating model shifts in What a Microsoft Data and AI Partner Brings to Your Data Strategy.

Auditability and Regulatory Confidence

Regulatory scrutiny of AI is increasing globally. Enterprises must be able to demonstrate not only how models work, but how data was sourced, validated, and governed. Observability data becomes a critical audit artifact.

Fabric’s lineage, versioning, and monitoring capabilities allow organizations to reconstruct the state of data at any point in time. This supports internal audits, regulatory inquiries, and external assurance without disrupting operations.
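Reconstructing data "as of" an audit date usually means mapping a timestamp to the latest table version committed at or before it. Conceptually, this is what Delta Lake’s `timestampAsOf` read option does. The commit log below is hypothetical.

```python
from bisect import bisect_right
from datetime import datetime

# Hypothetical commit log: (commit timestamp, version), sorted by time.
COMMITS = [
    (datetime(2026, 1, 1, 6, 0), 0),
    (datetime(2026, 1, 2, 6, 0), 1),
    (datetime(2026, 1, 3, 6, 0), 2),
]


def version_as_of(commits, ts):
    """Latest version committed at or before ts, or None if ts predates the table."""
    times = [t for t, _ in commits]
    i = bisect_right(times, ts)
    return commits[i - 1][1] if i else None
```

An auditor asking about a decision made on the afternoon of January 2 would be pointed to version 1, the snapshot the model actually saw.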

In this context, building trustworthy AI with Microsoft Fabric is not just a technical initiative; it is a compliance and risk management strategy.

Operating Models for Trustworthy AI at Scale

Moving Beyond Project-Based AI

Building trustworthy AI with Microsoft Fabric requires operating models that treat AI systems as long-lived products rather than one-off projects. Many organizations still approach AI as a series of projects. Teams build models, deploy them, and move on. This approach does not scale. Trustworthy AI requires a product mindset, where AI systems are continuously operated, observed, and improved.

Fabric supports this shift by providing a unified platform where data engineering, analytics, and AI teams work against shared assets and metrics. This reduces handoffs and creates a single source of operational truth. Platform-led alignment is what enables building trustworthy AI with Microsoft Fabric across domains and business units.

Related Insights: Techment reinforces this perspective in Enterprise AI Strategy in 2026, where AI success is framed as an organizational capability rather than a technology deployment.

Aligning Roles and Responsibilities

In mature operating models, roles are clearly defined:

- Data producers are accountable for data quality at the source

- Platform teams provide standardized pipelines, governance, and observability

- AI teams focus on modeling and decision logic, trusting the data foundation

- Business owners define outcomes and risk tolerance

Fabric enables this alignment by making expectations explicit and measurable. Trust becomes a shared responsibility, reinforced by platform capabilities rather than informal agreements.

Trade-offs and Real-World Considerations

Balancing Control and Agility

Enterprises building trustworthy AI with Microsoft Fabric must balance governance rigor with the agility required for innovation. A common concern is that strong governance and quality controls may slow innovation. In practice, the opposite is often true. When trust is embedded into the platform, teams move faster because they spend less time validating assumptions manually.

However, enterprises must strike a balance. Overly rigid rules can create bottlenecks, while overly permissive environments invite risk. Fabric’s flexibility allows organizations to apply different levels of control based on data sensitivity and use case criticality.

Cost and Complexity Management

Observability and governance introduce additional telemetry and metadata. Without discipline, this can increase cost and complexity. Successful organizations treat these signals as strategic assets, not exhaust.

By focusing on the metrics that are linked to AI performance and business outcomes, enterprises avoid noise and maximize value.

Related Insights: Techment’s experience, documented in Fabric AI Readiness: How to Prepare Your Data for Scalable AI Adoption, shows that intentional design is key.

How Techment Helps Enterprises Build Trustworthy AI with Microsoft Fabric

Trustworthy AI is not achieved through isolated tooling decisions. It requires an integrated strategy spanning data architecture, data quality, governance, observability, operating models, and execution. This is where Techment acts as a strategic partner rather than a systems integrator.

Techment helps enterprises design and implement Microsoft Fabric environments where data quality, governance, and observability are embedded by design. From modernizing legacy data platforms to enabling AI-ready Lakehouse architectures, Techment ensures that AI initiatives rest on reliable foundations.

Our approach includes defining enterprise-grade data quality frameworks, integrating governance through Purview-aligned operating models, and implementing observability practices that link data health directly to AI behavior. This enables organizations to scale AI confidently while meeting regulatory, ethical, and operational expectations.

Through end-to-end roadmaps—strategy, implementation, and optimization—Techment positions Fabric not just as a platform, but as a trust engine for enterprise AI.

Related Insights: What a Microsoft Data and AI Partner Brings to Your Data Strategy

Conclusion

AI systems earn trust, or lose it, based on the reliability of the data that feeds them. Models may capture headlines, but engineering discipline across data quality, governance, and observability determines whether AI delivers sustainable enterprise value. Building trustworthy AI with Microsoft Fabric is about elevating these foundations from afterthoughts to strategic priorities.

By validating data at ingestion, enforcing governance through integrated policies, and closing the loop with continuous observability, Fabric enables organizations to move from experimental AI to dependable, enterprise-grade intelligence. Trust becomes measurable, auditable, and scalable.

For leaders navigating the next phase of AI adoption, the message is clear: trustworthy AI is not a feature—it is an engineering discipline. With the right platform and partner, it becomes a competitive advantage rather than a constraint.

Related Insights: Fabric AI Readiness: How to Prepare Your Data for Scalable AI Adoption

Frequently Asked Questions

Is Microsoft Fabric sufficient for building trustworthy AI on its own?

Fabric provides the foundational capabilities, but success depends on how organizations design processes, governance, and operating models around it.

How does governance impact AI innovation speed?

Well-designed governance accelerates innovation by reducing uncertainty and rework, allowing teams to focus on value rather than validation.

Can observability really explain AI behavior?

Observability does not replace model explainability, but it provides essential context by linking outputs to data changes and pipeline events.

What skills are required to operationalize trustworthy AI?

Strong data engineering, platform governance, and cross-functional collaboration skills are more critical than advanced modeling expertise.

How long does it take to see value from these practices?

Enterprises typically see improved reliability and reduced incidents within months, with strategic benefits compounding over time.