Generative AI is rapidly transforming enterprise technology strategy. From intelligent copilots and predictive analytics to automated content generation and autonomous decision systems, AI has moved from experimentation to core business capability.

However, deploying AI at enterprise scale introduces a new level of complexity. AI models require vast computing power, high-quality data pipelines, integrated governance, and robust security frameworks. Traditional on-premises infrastructure rarely provides the elasticity or global reach required for modern AI workloads.

This is why many organizations are shifting toward secure scalable AI with Microsoft Azure. Azure offers enterprise-grade cloud infrastructure designed specifically for AI development, deployment, and governance.

According to research, 75% of surveyed organizations reported that migrating to Azure was necessary or significantly reduced the barriers to enabling AI/ML at their organization. Azure’s scalable infrastructure, integrated security capabilities, and unified data platforms allow organizations to operationalize AI while maintaining strict compliance and governance.

For CTOs, data leaders, and cloud architects, the challenge is not simply adopting AI—it is building secure, scalable AI systems that deliver measurable business value. Explore our AI services.

This guide explores:

- Why enterprises are moving AI workloads to the cloud

- The architectural pillars of Azure-based AI platforms

- Security and governance models for enterprise AI

- Data platforms powering scalable AI innovation

- Strategic frameworks for deploying generative AI on Azure

TL;DR (Executive Snapshot)

- Generative AI adoption is accelerating, but enterprise deployment requires secure, scalable infrastructure.

- Microsoft Azure provides the cloud foundation for secure scalable AI with Microsoft Azure, combining infrastructure, governance, and unified data platforms.

- Enterprises moving AI workloads to Azure report improved performance, scalability, and innovation velocity.

- Azure integrates AI services, security frameworks, and data platforms like Microsoft Fabric and Azure Databricks.

- Successful enterprise AI strategies require governance, unified data, responsible AI controls, and scalable infrastructure.

- Organizations adopting Azure-based AI architectures are 2× more confident in deploying production AI workloads.

The Enterprise Imperative for Secure Scalable AI with Microsoft Azure

Enterprise AI adoption has accelerated dramatically over the past two years. Organizations across industries—from financial services to healthcare and manufacturing—are embedding AI into core operations.

Yet scaling AI across the enterprise remains difficult.

Many organizations initially experimented with AI using isolated tools, local GPU clusters, or departmental platforms. While these approaches can support pilot projects, they often struggle to scale across global operations.

Enterprises today require:

- Elastic compute for model training and inference

- Global infrastructure to serve AI applications

- Integrated security and governance

- Unified data platforms

- Continuous model lifecycle management

These requirements are difficult to achieve in fragmented on-premises environments.

Gartner predicts strong cloud adoption for GenAI workloads due to scale requirements.

This supports the idea that AI workloads will move to the cloud, but no percentage or 2027 figure is given.

Cloud platforms like Microsoft Azure provide the architectural foundation needed to support this shift.

Organizations exploring secure scalable AI with Microsoft Azure gain access to:

- High-performance AI infrastructure

- Pre-built AI and machine learning services

- Integrated security frameworks

- Global cloud regions

- Unified analytics platforms

For enterprise leaders building modern AI ecosystems, Azure effectively becomes the operating system for enterprise AI.

Why On-Premises Infrastructure Struggles to Scale AI

Many enterprises initially attempt to run generative AI models on existing infrastructure. While this approach may work early experimentation, it often becomes unsustainable as workloads grow.

AI systems demand resources far beyond traditional application environments.

Training modern models requires:

- High-performance GPUs

- Distributed computing

- Large-scale data pipelines

- High-speed storage

- Massive network bandwidth

Maintaining this infrastructure internally is expensive and complex.

On-premises environments also face additional challenges:

Infrastructure bottlenecks- Legacy systems lack the elasticity required for large-scale AI workloads.

Fragmented data environments- AI models require unified data access across enterprise systems.

Operational complexity- Managing AI pipelines, infrastructure, and security internally requires specialized expertise.

Scaling limitations- Hardware upgrades often involve long procurement cycles.

As a result, many organizations encounter significant delays when attempting to operationalize AI at scale.

These limitations are why enterprises increasingly move toward secure scalable AI with Microsoft Azure, where infrastructure, AI services, and security frameworks are integrated into a unified cloud platform.

Organizations modernizing their AI ecosystems often begin with a broader enterprise data strategy, ensuring that data pipelines, governance models, and analytics frameworks support AI adoption at scale.

For a deeper perspective on building such strategies, see Techment’s guide: Enterprise AI Strategy in 2026 that highlight how aligning AI adoption with enterprise architecture ensures sustainable innovation.

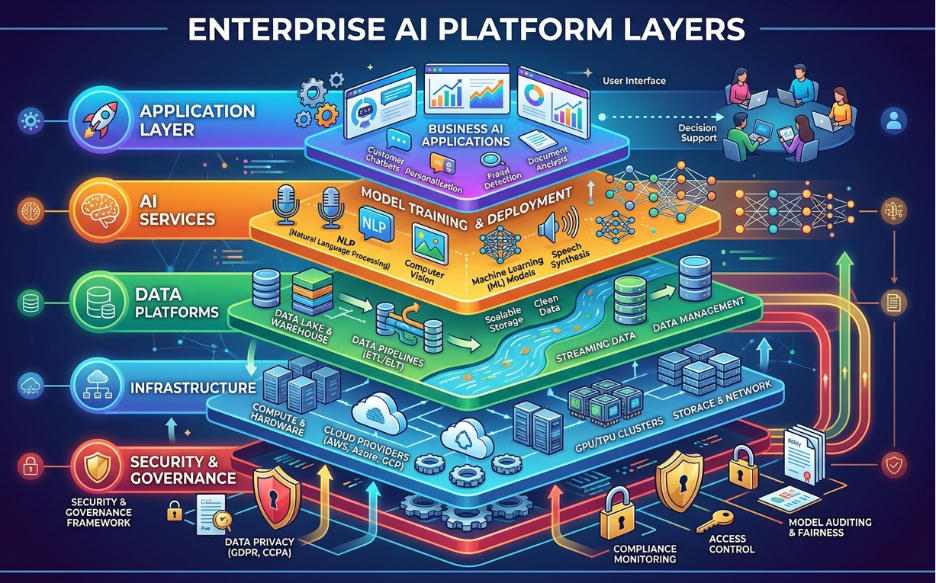

The Cloud Architecture Behind Enterprise AI Innovation

Deploying AI successfully requires more than compute resources. Enterprises must design architecture capable of supporting the entire AI lifecycle.

This lifecycle includes:

- Data ingestion

- Data transformation

- Model development

- Model training

- Deployment

- Monitoring

- Governance

Azure supports each of these layers through an integrated cloud ecosystem.

Core Components of Azure’s Enterprise AI Platform

Organizations implementing secure scalable AI with Microsoft Azure typically rely on several key architectural layers.

AI and Machine Learning Services

Azure offers a comprehensive set of AI services enabling organizations to build and deploy machine learning models efficiently.

Key services include:

- Azure Machine Learning

- Azure OpenAI Service

- Cognitive Services

- AI Studio

These services allow enterprises to develop AI applications without managing complex infrastructure manually.

Data Platforms

AI performance depends heavily on data quality and accessibility.

Azure integrates several enterprise data platforms including:

- Microsoft Fabric

- Azure Synapse Analytics

- Azure Databricks

These platforms allow organizations to unify structured and unstructured data for analytics and AI workloads.

Organizations building enterprise AI platforms often combine these technologies to create unified data ecosystems.

Techment explores this architecture in detail in Microsoft Fabric Architecture: CTO’s Guide to Modern Analytics & AI

Cloud Infrastructure

Azure provides global infrastructure optimized for AI workloads.

Key capabilities include:

- GPU clusters

- High-performance storage

- Low-latency networking

- Global availability zones

This infrastructure enables enterprises to deploy AI applications globally without managing physical hardware.

Enterprise Security Frameworks for AI on Azure

Security remains one of the most critical considerations when deploying generative AI.

AI systems process sensitive data, generate new information, and interact directly with users. Without proper controls, these systems can introduce significant risks.

Common enterprise AI risks include:

- Data leakage

- Model manipulation

- Unauthorized AI usage

- Compliance violations

- Intellectual property exposure

For this reason, organizations building secure scalable AI with Microsoft Azure must integrate security frameworks throughout the AI lifecycle.

Azure’s Multi-Layered AI Security Model

Azure protects AI systems using multiple security layers.

Identity and Access Management

Identity systems control who can access AI services and data.

Azure integrates identity management through:

- Microsoft Entra ID

- Role-based access control

- Multi-factor authentication

These controls help prevent unauthorized access to AI systems.

Data Protection

Sensitive data used by AI systems must be protected both in transit and at rest.

Azure provides:

- Azure Key Vault

- Encryption services

- Secure data storage

These tools ensure enterprise data remains protected throughout AI workflows.

Threat Detection

AI environments must be monitored continuously for potential threats.

Azure integrates several security services including:

- Microsoft Defender for Cloud

- Microsoft Sentinel

These tools provide real-time monitoring and threat detection across AI infrastructure.

Compliance and Governance

Enterprises must comply with industry regulations such as GDPR, HIPAA, and financial regulations.

Azure supports compliance through built-in frameworks and certifications.

Organizations implementing strong governance models can reduce AI risks while enabling innovation.

Techment’s article Why Microsoft Fabric AI Solutions Are Changing the Way Enterprises Build Intelligence shows how Fabric enable trusted analytics and AI systems.

The Data Foundation for Enterprise AI

AI models are only as powerful as the data that powers them.

Many organizations discover that their biggest obstacle to AI adoption is not infrastructure—it is data readiness.

Enterprise data environments are often fragmented across:

- ERP systems

- CRM platforms

- operational databases

- data warehouses

- unstructured repositories

This fragmentation makes it difficult to deliver reliable data to AI models.

Organizations pursuing secure scalable AI with Microsoft Azure must establish a unified data architecture.

Microsoft Fabric and the Unified Data Platform

Microsoft Fabric has emerged as a critical component of modern AI architectures.

Fabric integrates multiple data capabilities into a single platform, including:

- data engineering

- data warehousing

- real-time analytics

- data science

- business intelligence

By consolidating these capabilities, Fabric enables organizations to manage their entire data lifecycle in one environment.

This unified approach offers several advantages:

- Improved data accessibility

- Teams can access data across departments without complex integrations.

- Stronger governance

- Centralized policies ensure consistent data quality and compliance.

- Better AI performance

- Unified data improves model accuracy and reliability.

Organizations implementing Fabric often combine it with Azure AI services to create powerful enterprise AI platforms.

Traditional Data Architecture vs Unified Data Fabric

| Feature | Traditional Data Architecture | Unified Data Fabric |

| Data accessibility | Data is silos, making it difficult to access and integrate data from different sources. This can lead to data quality issues and make it difficult to get a complete view of the business. | Data is integrated and made accessible from a single point of entry. This makes it easier to access and integrate data from different sources, leading to better data quality and a more complete view of the business. |

| Governance | Governance is often ad-hoc and siloed, making it difficult to ensure that data is accurate, consistent, and secure. This can lead to compliance issues and make it difficult to trust the data. | Governance is integrated and automated, making it easier to ensure that data is accurate, consistent, and secure. This leads to better compliance and makes it easier to trust the data. |

| AI readiness | Data is often not AI-ready, meaning that it is not formatted correctly or is not accessible in a way that AI models can use. This can make it difficult to deploy AI models and can lead to inaccurate results. | Data is AI-ready, meaning that it is formatted correctly and is accessible in a way that AI models can use. This makes it easier to deploy AI models and can lead to more accurate results. |

| Operational complexity | Operational complexity is high, as there are many different systems to manage and maintain. This can lead to high costs and make it difficult to scale the architecture. | Operational complexity is lower, as there is a single point of entry for managing and maintaining the architecture. This can lead to lower costs and make it easier to scale the architecture. |

| Scalability | Scalability is often difficult, as it can be hard to scale the different systems that make up the architecture. This can lead to performance issues and make it difficult to meet the growing needs of the business. | Scalability is easier, as the architecture is designed to be scalable. This makes it easier to meet the growing needs of the business without sacrificing performance. |

Techment’s detailed analysis of this architecture can be found in: What Is Microsoft Fabric? A Comprehensive Overview for Enterprise Leaders

Faster AI Innovation Through Cloud Infrastructure

Beyond infrastructure and security, Azure enables organizations to innovate faster with AI.

Traditional enterprise infrastructure requires extensive configuration before new workloads can be deployed. This slows experimentation and delays time-to-value.

Cloud environments remove these barriers.

Organizations building secure scalable AI with Microsoft Azure gain access to flexible environments where teams can experiment, iterate, and deploy AI solutions rapidly.

Azure supports innovation through:

- on-demand compute resources

- integrated development environments

- automated deployment pipelines

- AI model lifecycle management

These capabilities allow organizations to focus on developing AI solutions rather than maintaining infrastructure.

According to industry research, organizations deploying AI in the cloud report twice the confidence in their ability to build and refine AI models compared with those relying on on-premises systems.

This shift enables enterprises to transition from isolated AI experiments to production-grade AI platforms that deliver measurable business value.

For example, enterprises are now building AI-powered applications such as:

- intelligent copilots for employees

- automated document processing

- predictive maintenance systems

- conversational AI assistants

Techment’s guide on Conversational AI on Microsoft Azure: Building Intelligent Enterprise Assistants provides deeper insight into how enterprises deploy these applications at scale.

7 Enterprise Strategies for Deploying Secure Scalable AI with Microsoft Azure

While cloud infrastructure is essential, enterprises cannot simply migrate workloads and expect AI success. Successful AI adoption requires clear architectural strategies, governance frameworks, and operational models.

Organizations implementing secure scalable AI with Microsoft Azure typically follow a structured strategy that aligns infrastructure, data platforms, and governance with business objectives.

Below are seven proven strategies enterprise leaders are adopting to deploy generative AI successfully.

1. Establish a Cloud-Native AI Infrastructure Foundation

AI workloads are fundamentally different from traditional enterprise applications. Training large language models, deploying inference pipelines, and processing massive datasets require elastic computing infrastructure.

Azure enables organizations to build this foundation using cloud-native services designed specifically for AI.

Key infrastructure components include:

- Azure GPU clusters for model training

- high-performance storage for large datasets

- scalable compute environments for inference workloads

- distributed machine learning pipelines

These capabilities allow enterprises to scale AI workloads dynamically without managing physical infrastructure.

From a strategic perspective, cloud-native infrastructure enables organizations to move from isolated AI experiments to enterprise-wide AI platforms.

This shift allows AI systems to support global operations, customer experiences, and internal productivity initiatives.

Enterprises modernizing their analytics platforms often combine cloud infrastructure with unified data architectures. Techment explores this transition in detail in Microsoft Fabric vs Traditional Data Warehousing: What Leaders Need to Know.

2. Build a Unified Enterprise Data Foundation

AI systems rely on vast quantities of high-quality data.

However, most enterprises struggle with fragmented data environments distributed across multiple platforms and departments.

Without a unified data foundation, AI initiatives often suffer from:

- inconsistent data quality

- limited data accessibility

- compliance challenges

- poor model accuracy

Organizations pursuing secure scalable AI with Microsoft Azure increasingly adopt data fabric architectures that unify analytics and operational data.

Platforms such as Microsoft Fabric, Azure Synapse Analytics, and Azure Databricks enable organizations to create centralized data environments where analytics, machine learning, and governance coexist.

This architecture enables enterprises to:

- centralize data pipelines

- automate data transformation

- enforce governance policies

- support real-time analytics

A unified data platform dramatically improves AI readiness and operational scalability.

3. Implement Enterprise AI Governance and Responsible AI Frameworks

As generative AI adoption accelerates, governance has become one of the most critical components of enterprise AI strategy.

Organizations deploying AI must ensure their systems are:

- secure

- ethical

- compliant

- transparent

Without governance frameworks, AI deployments can expose organizations to significant regulatory and operational risks.

Key enterprise AI governance components include:

- Model lifecycle governance

- Ensuring models are versioned, monitored, and updated responsibly.

- Data governance

- Protecting sensitive data and enforcing compliance policies.

- Responsible AI policies

- Ensuring models are transparent, explainable, and unbiased.

Azure provides built-in tools supporting these governance frameworks, including:

- Azure AI governance tools

- compliance certifications

- audit logging

- policy enforcement mechanisms

Organizations building mature governance frameworks often integrate data quality and governance initiatives as part of their broader AI strategy.

Techment’s perspective on this topic is explored in Data Governance for Data Quality: Future-Proofing Enterprise Data.

4. Secure AI Workloads Across the Entire Lifecycle

Security must be embedded throughout the AI lifecycle—from development and training to deployment and monitoring.

Enterprises implementing secure scalable AI with Microsoft Azure benefit from Azure’s integrated security ecosystem.

Key security components include:

- Microsoft Defender for Cloud- Provides continuous security monitoring across AI infrastructure.

- Microsoft Sentinel- Enables enterprise-scale security analytics and threat detection.

- Azure Key Vault- Protects sensitive secrets, keys, and credentials used in AI systems.

- Role-Based Access Control- Ensures only authorized users can access AI services.

Together, these tools create a multi-layered security model protecting enterprise AI environments.

Security strategies should also address emerging risks associated with generative AI such as:

- prompt injection attacks

- data leakage from training sets

- unauthorized AI usage

Organizations addressing these risks effectively are able to deploy AI systems confidently while maintaining strong compliance and security controls.

5. Enable AI Developer Productivity and Collaboration

AI innovation depends heavily on collaboration between multiple technical teams.

Enterprise AI initiatives typically involve:

- data engineers

- data scientists

- machine learning engineers

- software developers

- DevOps teams

Azure supports collaboration through integrated development environments and machine learning platforms.

Azure Machine Learning provides tools that allow teams to:

- build models collaboratively

- manage datasets centrally

- automate model training

- deploy models through CI/CD pipelines

These capabilities allow organizations to transition from manual experimentation to automated AI development workflows.

Developer productivity is critical because AI innovation cycles are often iterative. Teams must be able to test, refine, and redeploy models rapidly.

Enterprises building such environments often integrate conversational AI and automation capabilities.

6. Accelerate AI Innovation Through Managed Services

Another major advantage of Azure is access to pre-built AI services.

These services allow organizations to deploy AI-powered capabilities without building models entirely from scratch.

Examples include:

- natural language processing APIs

- speech recognition systems

- computer vision models

- generative AI services

Azure OpenAI Service allows organizations to deploy advanced large language models securely within enterprise environments.

Using managed AI services enables organizations to:

- reduce development complexity

- accelerate time-to-value

- lower operational costs

- focus on business innovation

For enterprises pursuing secure scalable AI with Microsoft Azure, these services significantly shorten development cycles while maintaining enterprise-grade security and compliance.

7. Operationalize AI with Enterprise AI Platforms

The final step in AI maturity is operationalizing AI across the enterprise.

Operational AI platforms integrate:

- data pipelines

- machine learning workflows

- deployment pipelines

- monitoring tools

This architecture enables organizations to treat AI systems as production software platforms rather than experimental projects.

Operational AI platforms support:

- continuous model improvement

- automated model deployment

- performance monitoring

- compliance auditing

Organizations that successfully operationalize AI often achieve measurable business benefits such as improved productivity, faster decision-making, and enhanced customer experiences.

For CTOs, data platform leaders, and analytics architects, understanding the realities of migrating from Azure Data and AI Stack to Microsoft Fabric is essential to unlocking the next generation of unified enterprise analytics.

Business Benefits of Secure Scalable AI with Microsoft Azure

Enterprises deploying AI on Azure often realize benefits across multiple dimensions.

These benefits extend beyond technical performance to include strategic business outcomes.

Improved Operational Efficiency

Cloud-based AI infrastructure frees IT teams from maintaining complex hardware environments.

Instead, teams can focus on:

- developing AI use cases

- optimizing data pipelines

- building intelligent applications

This shift enables organizations to allocate resources toward innovation rather than infrastructure management.

Faster Time to Market

Azure’s integrated AI services significantly accelerate development cycles.

Organizations can deploy AI solutions faster because they can leverage:

- pre-built AI models

- managed infrastructure

- integrated development tools

This enables businesses to bring AI-powered products and services to market more quickly.

Stronger Security and Compliance

Azure’s built-in compliance frameworks help organizations meet global regulatory standards.

Enterprises benefit from certifications supporting:

- healthcare regulations

- financial compliance

- global data protection laws

These capabilities reduce the risk associated with enterprise AI deployments.

Enhanced AI Model Performance

Unified data platforms and scalable compute infrastructure improve model performance.

Organizations can train models using larger datasets and deploy them globally with low latency.

This leads to more accurate predictions, better insights, and improved business outcomes.

How Techment Helps Enterprises Build Secure Scalable AI with Microsoft Azure

Building enterprise AI platforms requires expertise across cloud architecture, data engineering, governance, and machine learning.

Techment partners with enterprises to design and implement secure scalable AI with Microsoft Azure, enabling organizations to accelerate their AI journeys with confidence.

Techment supports enterprises across the full AI lifecycle.

AI and Data Strategy Development

Techment works with leadership teams to define AI roadmaps aligned with business objectives.

This includes:

- identifying high-value AI use cases

- assessing data readiness

- designing AI operating models

Modern Data Platform Implementation

Techment helps organizations build unified data platforms using technologies such as:

- Microsoft Fabric

- Azure Synapse Analytics

- Azure Databricks

These platforms enable enterprises to create scalable data ecosystems powering AI innovation.

Enterprise AI Architecture Design

Techment architects scalable AI environments that integrate:

- Azure AI services

- machine learning pipelines

- governance frameworks

- secure cloud infrastructure

This ensures organizations can deploy AI applications reliably across their operations.

Governance, Security, and Compliance

AI governance is essential for long-term success.

Techment helps enterprises implement governance frameworks addressing:

- responsible AI practices

- data protection policies

- regulatory compliance requirements

These frameworks ensure AI systems operate securely and ethically.

For further guidance, see Fabric AI Readiness: How to Prepare Your Data for Scalable AI Adoption .

Conclusion

Artificial intelligence is rapidly becoming a foundational capability for modern enterprises. However, scaling AI across complex organizations requires more than algorithms and models.

It requires infrastructure, governance, and data platforms capable of supporting AI workloads at enterprise scale.

Organizations adopting secure scalable AI with Microsoft Azure gain access to a powerful ecosystem combining cloud infrastructure, advanced AI services, unified data platforms, and enterprise-grade security.

These capabilities enable enterprises to move beyond isolated AI experiments and build production-ready AI platforms that deliver measurable business value.

As AI continues to evolve, organizations that establish strong foundations today will be best positioned to unlock the full potential of generative AI and intelligent automation.

With the right architecture, governance frameworks, and strategic partnerships, enterprises can transform AI from an experimental technology into a core driver of innovation, efficiency, and competitive advantage.

Techment helps organizations design and implement these AI platforms—ensuring enterprises can deploy AI securely, scale innovation rapidly, and achieve long-term business impact.

Frequently Asked Questions

1. Why do enterprises deploy AI on Microsoft Azure?

Azure provides scalable cloud infrastructure, integrated security frameworks, and advanced AI services. These capabilities enable organizations to deploy secure scalable AI with Microsoft Azure while maintaining governance and compliance.

2. Can generative AI run securely in the cloud?

Yes. Cloud platforms like Azure provide enterprise-grade security capabilities including identity management, encryption, threat monitoring, and compliance frameworks

3. How long does it take to deploy enterprise AI platforms?

Timelines vary depending on data readiness and infrastructure maturity. Many organizations begin deploying production AI workloads within 6–12 months after establishing foundational data and governance frameworks.

4. What skills are required to build enterprise AI platforms?

Successful AI initiatives require expertise across:

data engineering

machine learning

cloud architecture

governance and security

Many enterprises partner with technology specialists to accelerate adoption.

5. How important is data quality for AI success?

Data quality is one of the most critical factors in AI performance. Poor-quality data leads to inaccurate models and unreliable predictions.