Introduction

Enterprise AI adoption is accelerating, but one fundamental challenge persists: large language models lack reliable access to enterprise data. This gap leads to hallucinations, outdated responses, and compliance risks—issues that no CTO or data leader can ignore.

This is where RAG tools (Retrieval-Augmented Generation tools) have become mission-critical. Instead of relying solely on pre-trained knowledge, RAG tools connect LLMs with real-time or proprietary data sources, dramatically improving accuracy, trust, and relevance.

According to industry benchmarks from McKinsey and Gartner, enterprises deploying retrieval-augmented architectures see 30–60% improvements in answer accuracy and significant reductions in hallucinations.

In this blog, we provide a comprehensive, enterprise-grade comparison of the top 10 RAG tools in 2026, covering:

- Features and architecture capabilities

- Pricing models and scalability considerations

- Real-world enterprise use cases

- Strategic trade-offs for CTOs and architects

We also break down how to choose the right tool for your organization—and how to operationalize RAG at scale.

For a deeper understanding of enterprise data foundations, explore: Enterprise AI strategy 2026

TL;DR Summary

- RAG tools are essential for reducing hallucinations and improving LLM accuracy in enterprise AI

- The top RAG tools include Meilisearch, LangChain, Pinecone, Vespa, and LlamaIndex

- Open-source tools dominate early-stage innovation, while managed platforms enable enterprise scale

- Choosing the right RAG tool depends on retrieval method, scalability, and integration flexibility

- Enterprises must prioritize governance, latency, and data security when deploying RAG systems

Why RAG Tools Are Critical for Enterprise AI in 2026

The Shift from Generative AI to Grounded AI

Generative AI alone is no longer sufficient. Enterprises now demand grounded AI systems—systems that can:

- Retrieve trusted internal data

- Provide explainable responses

- Maintain compliance and governance

RAG tools enable this shift by acting as the bridge between data platforms and LLMs.

From an enterprise architecture standpoint, RAG introduces a new layer:

- Data ingestion and indexing

- Vector and hybrid retrieval

- Context injection into LLM prompts

This architecture transforms AI from experimental to operational.

For a deeper dive into RAG models and enterprise patterns: RAG Models enterprise guide

Business Impact of RAG Tools

Improved decision intelligence

Executives gain access to accurate, contextual insights derived from internal data.

Reduced operational risk

RAG minimizes hallucinations, which is critical in regulated industries like finance and healthcare.

Faster time-to-value

RAG tools accelerate deployment of AI assistants, copilots, and knowledge systems.

Enterprise Implications

RAG is not just a tooling decision—it is a strategic data architecture decision.

Organizations must rethink:

- Data quality and governance

- Metadata management

- Retrieval latency and scalability

For more insights on foundational AI architectures, refer to: RAG architectures Enterprise Use Cases in 2026.

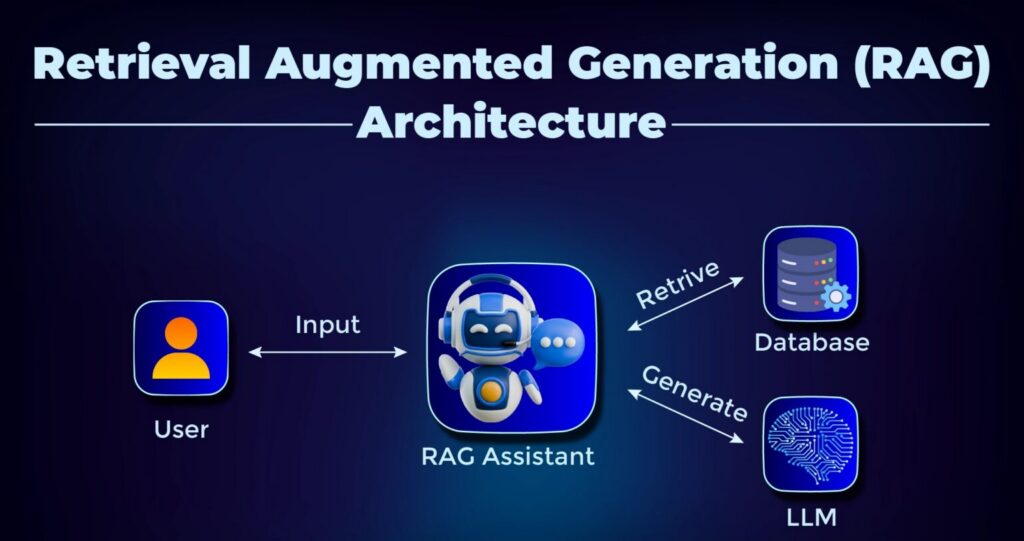

What Are RAG Tools? Architecture and Core Components

Understanding Retrieval-Augmented Generation

RAG tools enhance LLM outputs by injecting relevant external context during inference.

A typical RAG pipeline includes:

- Data ingestion and chunking

- Embedding generation

- Vector storage

- Retrieval (semantic, keyword, hybrid)

- Prompt augmentation

- LLM response generation
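
To make these pipeline stages concrete, here is a minimal, self-contained Python sketch. It is illustrative only: `embed` is a toy bag-of-words stand-in for a real embedding model, a plain in-memory list stands in for a vector store, and every function name is an assumption rather than any specific tool's API. The final LLM call is left out, so the sketch stops at the augmented prompt.

```python
import math
import re
from collections import Counter


def chunk(text: str, max_words: int = 20) -> list[str]:
    """Split text into fixed-size word windows (a stand-in for real chunking)."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def embed(text: str) -> Counter:
    """Toy 'embedding': term counts. A real pipeline would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def build_index(documents: list[str]) -> list[tuple[str, Counter]]:
    """Ingest, chunk, and embed documents into an in-memory 'vector store'."""
    return [(c, embed(c)) for doc in documents for c in chunk(doc)]


def retrieve(index: list[tuple[str, Counter]], query: str, k: int = 2) -> list[str]:
    """Return the k chunks most similar to the query."""
    q = embed(query)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [text for text, _ in ranked[:k]]


def augment_prompt(query: str, contexts: list[str]) -> str:
    """Inject retrieved chunks into the prompt that would be sent to the LLM."""
    context_block = "\n".join(f"- {c}" for c in contexts)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context_block}\n\nQuestion: {query}"
    )


# Hypothetical sample documents, for illustration only.
docs = [
    "Employees may carry over up to five unused vacation days into the next year.",
    "Expense reports must be submitted within thirty days of purchase.",
]
index = build_index(docs)
question = "How many vacation days carry over?"
top_chunks = retrieve(index, question, k=1)
prompt = augment_prompt(question, top_chunks)
```

In a production pipeline, each function would be swapped for a real component (a document loader, an embedding model, a vector database, and an LLM client), but the order of operations stays the same.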

Key Capabilities of Modern RAG Tools

Hybrid search (dense + sparse)

Combines vector embeddings with keyword search for better relevance.
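
As a rough illustration of how dense and sparse results can be combined, the sketch below uses reciprocal rank fusion (RRF). This is one common fusion technique chosen here as an assumption; individual tools may merge results differently. The document IDs are hypothetical, and `k=60` is a conventional default rather than a tuned value.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge several ranked lists of document IDs into one list.

    Each document earns 1 / (k + rank) from every list it appears in,
    so documents ranked well by both retrievers rise to the top.
    """
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=lambda doc_id: scores[doc_id], reverse=True)


# Hypothetical results from a keyword retriever and a vector retriever.
keyword_hits = ["doc_a", "doc_b", "doc_c"]
vector_hits = ["doc_b", "doc_d", "doc_a"]

fused = reciprocal_rank_fusion([keyword_hits, vector_hits])
```

Rank-based fusion avoids having to normalize keyword scores and vector similarities onto a shared scale, which is why it is a popular starting point.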

Low-latency retrieval

Critical for real-time applications like chatbots and copilots.

Metadata filtering

Enables contextual narrowing of search results.
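
A minimal sketch of the idea, assuming each retrieved chunk carries a metadata dictionary. The `Chunk` class, the `filter_by_metadata` helper, and the field names are hypothetical; real tools usually apply such filters inside the search engine rather than after retrieval.

```python
from dataclasses import dataclass, field


@dataclass
class Chunk:
    text: str
    score: float
    metadata: dict = field(default_factory=dict)


def filter_by_metadata(chunks: list[Chunk], **required) -> list[Chunk]:
    """Keep only chunks whose metadata matches every required key/value pair."""
    return [
        c for c in chunks
        if all(c.metadata.get(key) == value for key, value in required.items())
    ]


# Hypothetical retrieval results.
chunks = [
    Chunk("Q3 revenue summary", 0.91, {"department": "finance", "year": 2025}),
    Chunk("Onboarding checklist", 0.88, {"department": "hr", "year": 2025}),
    Chunk("Q3 revenue summary (draft)", 0.80, {"department": "finance", "year": 2024}),
]

finance_2025 = filter_by_metadata(chunks, department="finance", year=2025)
```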

Scalable indexing

Supports millions to billions of documents.

Why Architecture Matters

Poorly designed RAG pipelines lead to:

- Latency spikes

- Irrelevant retrieval

- Increased infrastructure costs

The choice of RAG tools directly impacts performance, cost, and scalability.

For more on building scalable data foundations that support AI, explore: Data Quality For AI in 2026 Enterprise Guide

Top 10 RAG Tools Compared – Overview

In this section, we analyze the top 10 RAG tools based on enterprise adoption, capabilities, and architectural flexibility.

1. Meilisearch – High-Speed Hybrid Search for RAG Pipelines

Overview

Meilisearch is a developer-first search engine optimized for speed and simplicity, making it highly effective for RAG pipelines requiring low-latency retrieval.

Key Features

- Hybrid search (full-text keyword + vector search)

- Typo-tolerant indexing

- Custom ranking rules

- Multilingual support

Pricing

- Build: $30/month

- Pro: $300/month

- Enterprise: Custom

- Open-source: Free

Enterprise Use Cases

- AI-powered internal search

- Knowledge assistants

- Product discovery systems

Strategic Insight

Meilisearch is ideal for teams prioritizing developer velocity and fast deployment. However, enterprise governance features are still evolving.

Explore the architectural, operational, and strategic differences between Multi-Agent Systems vs Single-Agent Architectures, helping you make informed decisions aligned with scalability, governance, and AI maturity.

2. LangChain – The Orchestration Layer for RAG Systems

Overview

LangChain is not just a tool—it is a framework for building end-to-end RAG pipelines, including agents, workflows, and integrations.

Key Features

- Chains and agents for workflow orchestration

- Prompt templates and memory

- Extensive integrations with LLMs and vector DBs

Pricing

- Free tier available

- Paid plans from $39/month

- Enterprise pricing available

Enterprise Use Cases

- AI agents and copilots

- Multi-step reasoning systems

- Document Q&A platforms

Strategic Insight

LangChain is essential for complex, multi-step RAG architectures, but requires strong engineering maturity.

Learn more:

https://www.techment.com/blogs/best-practices-for-generative-ai-implementation-in-business/

3. RAGatouille – Precision Retrieval with ColBERT

Overview

RAGatouille introduces token-level retrieval precision, making it highly effective for domain-specific applications.

Key Features

- ColBERT-based late interaction retrieval

- Training pipelines for custom indexing

- Reranking capabilities
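
To show what "late interaction" means in practice, the sketch below computes a ColBERT-style MaxSim score with plain Python lists. It is not RAGatouille's API: the function names and the tiny two-dimensional token vectors are assumptions made for illustration, and a real model would produce much higher-dimensional embeddings for each token.

```python
def dot(u: list[float], v: list[float]) -> float:
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(u, v))


def maxsim_score(query_vecs: list[list[float]], doc_vecs: list[list[float]]) -> float:
    """Late-interaction score: for each query token, take its best-matching
    document token, then sum those maxima over all query tokens."""
    return sum(max(dot(q, d) for d in doc_vecs) for q in query_vecs)


# Toy token embeddings (hypothetical values).
query = [[1.0, 0.0], [0.0, 1.0]]
doc_a = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]  # covers both query tokens
doc_b = [[1.0, 0.0], [1.0, 0.0]]              # covers only the first

score_a = maxsim_score(query, doc_a)
score_b = maxsim_score(query, doc_b)
```

Because every query token is matched against every document token, this approach tends to be more precise than comparing one vector per document, at the price of extra storage and compute.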

Pricing

- Fully open-source (free)

Enterprise Use Cases

- Legal and research applications

- High-accuracy document retrieval

- Scientific knowledge systems

Strategic Insight

Best suited for accuracy-critical workloads, but requires computational resources and ML expertise.

4. Verba – Simplified RAG for Rapid Prototyping

Overview

Verba provides a UI-driven approach to RAG, enabling even non-technical users to build document-based chat systems.

Key Features

- Web-based chat interface

- Hybrid search with semantic caching

- Flexible chunking strategies
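
One widely used chunking strategy is a sliding window with overlap, sketched below. This is a generic illustration and not Verba's implementation; the function name and parameters are assumptions.

```python
def chunk_with_overlap(words: list[str], size: int, overlap: int) -> list[list[str]]:
    """Split a word list into windows of `size` words, where each window
    repeats the last `overlap` words of the previous one."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("require size > 0 and 0 <= overlap < size")
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(words[start:start + size])
        if start + size >= len(words):
            break  # the last window already reached the end of the text
    return chunks


windows = chunk_with_overlap("a b c d e f g".split(), size=4, overlap=2)
```

Overlap reduces the chance that a sentence relevant to a query is cut in half at a chunk boundary, but it increases index size, so the two parameters are usually tuned together.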

Pricing

- Open-source (free)

Enterprise Use Cases

- Internal knowledge bots

- Training and education systems

- Rapid prototyping

Strategic Insight

Great for early-stage experimentation, but not suitable for enterprise-scale deployments.

5. Haystack – Production-Grade RAG Framework

Overview

Haystack is designed for enterprise-grade RAG pipelines, offering modular architecture and production readiness.

Key Features

- Modular pipelines

- REST API deployment

- Built-in observability

Pricing

- Open-source

- Enterprise version via deepset

Enterprise Use Cases

- AI-powered search platforms

- Customer support automation

- Enterprise knowledge systems

Strategic Insight

Haystack is ideal for organizations moving from prototype to production, especially those needing observability and control.

To further understand how reliable data drives enterprise outcomes, refer to Designing Scalable Data Architectures for Enterprise Data Platforms

6. Embedchain – Lightweight RAG for Rapid Prototyping

Overview

Embedchain is a minimalistic framework designed to simplify RAG pipeline creation into just a few lines of code. It abstracts ingestion, embedding, and querying into a unified interface, making it highly attractive for fast experimentation.

Key Features

- One-line ingestion for PDFs, websites, and APIs

- Built-in chat interface

- Multi-model embedding support (OpenAI, Hugging Face, Cohere)

Pricing

- Fully open-source (free)

Enterprise Use Cases

- Proof-of-concept AI assistants

- Internal demos and hackathons

- Lightweight document chatbots

Strategic Insight

Embedchain excels in speed and simplicity, but lacks the customization and scalability required for enterprise-grade deployments.

Explore why modern data platforms are critical for RAG success, how they reshape enterprise AI architectures, and what leaders must prioritize to build scalable, secure, and high-performance RAG systems.

7. LlamaIndex – Data Framework for Context-Aware RAG

Overview

LlamaIndex is one of the most widely adopted data frameworks for RAG, enabling structured ingestion, indexing, and retrieval across diverse enterprise data sources.

Key Features

- Composable indexing strategies

- Structured and unstructured data ingestion

- Built-in agent and routing capabilities

Pricing

- Free tier

- Starter: $50/month

- Pro: $500/month

- Enterprise: Custom

Enterprise Use Cases

- Enterprise knowledge assistants

- AI copilots

- Cross-system data querying

Strategic Insight

LlamaIndex is ideal for building scalable, flexible RAG pipelines, particularly when integrating multiple data sources.

8. MongoDB Atlas Vector Search – Unified Data + RAG

Overview

MongoDB integrates vector search directly into its database, enabling RAG pipelines without separate vector infrastructure.

Key Features

- Native vector search (HNSW)

- Combined structured + unstructured querying

- Aggregation pipeline integration
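
The sketch below shows how a vector query can sit inside an aggregation pipeline, built here as plain Python dictionaries. The `$vectorSearch` stage and the `vectorSearchScore` metadata field come from Atlas Vector Search. The index name, field names, and the 20x candidate multiplier are assumptions for illustration. No database connection is made.

```python
def build_vector_search_pipeline(query_vector, limit=5, department=None):
    """Build an aggregation pipeline for an Atlas Vector Search query.

    The names "chunk_vector_index", "embedding", "text", and "department"
    are hypothetical; substitute the names used in your own cluster.
    """
    stage = {
        "index": "chunk_vector_index",   # hypothetical vector index name
        "path": "embedding",             # hypothetical field holding the vectors
        "queryVector": list(query_vector),
        "numCandidates": limit * 20,     # assumed starting point; must be >= limit
        "limit": limit,
    }
    if department is not None:
        # Structured pre-filter combined with the vector query.
        stage["filter"] = {"department": {"$eq": department}}
    return [
        {"$vectorSearch": stage},
        {"$project": {"_id": 0, "text": 1, "score": {"$meta": "vectorSearchScore"}}},
    ]


pipeline = build_vector_search_pipeline([0.12, -0.03, 0.88], limit=3, department="finance")
```

With PyMongo, a pipeline like this would typically be passed to `collection.aggregate(pipeline)`. The optional `filter` only works on fields that are declared as filter fields in the vector index definition.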

Pricing

- Free tier available

- Usage-based pricing (cluster dependent)

Enterprise Use Cases

- Applications already using MongoDB

- Real-time AI-powered applications

- Operational analytics with AI

Strategic Insight

MongoDB is ideal for reducing architectural complexity, but may lack the advanced optimization of specialized vector databases.

9. Pinecone – Managed Vector Database for Scalable RAG

Overview

Pinecone is a leading cloud-native vector database designed specifically for high-performance similarity search in RAG pipelines.

Key Features

- Serverless scaling

- Hybrid search (dense + sparse)

- Real-time indexing and updates

- Multi-tenant architecture

Pricing

- Starter: Free

- Standard: $50/month minimum

- Enterprise: $500/month+

- Dedicated: Custom

Enterprise Use Cases

- Large-scale AI applications

- Recommendation systems

- Multi-tenant SaaS AI platforms

Strategic Insight

Pinecone offers best-in-class scalability and performance, but introduces cost and vendor lock-in considerations.

To understand how enterprises are aligning AI with business outcomes, refer to Techment’s perspective on 7 Proven Strategies to Build Secure, Scalable AI with Microsoft Azure

10. Vespa – Internet-Scale RAG and Search Platform

Overview

Vespa is a powerful open-source platform built for massive-scale, real-time retrieval and ranking, widely used by companies like Yahoo and Spotify.

Key Features

- Hybrid and multimodal search

- On-node ML inference

- Real-time indexing at scale

- Custom ranking pipelines

Pricing

- Open-source (self-managed)

- Cloud pricing based on compute

Enterprise Use Cases

- Large-scale search platforms

- AI-driven recommendation systems

- Real-time analytics pipelines

Strategic Insight

Vespa is best suited for large enterprises with advanced infrastructure capabilities and high-scale requirements.

Best Open-Source RAG Tools

Open-source RAG tools remain the backbone of innovation, offering flexibility and transparency.

Top Picks

- Meilisearch – Fast hybrid search

- LlamaIndex – Flexible data framework

- Haystack – Production-ready pipelines

Enterprise Perspective

Open-source tools provide:

- Cost efficiency

- Customization

- Control over data and infrastructure

However, they require:

- Strong engineering teams

- Ongoing maintenance

Best RAG Search Engine Tools

Search engines are the core of any RAG pipeline, directly impacting retrieval quality.

Leading Tools

- Meilisearch – Developer-friendly hybrid search

- Pinecone – High-performance vector search

- Vespa – Enterprise-scale retrieval

What Makes a Great RAG Search Engine?

- Low latency (<100 ms)

- Hybrid search capabilities

- Metadata filtering

- Real-time indexing
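
To check a latency target like the one above, teams often measure tail latency and not only the average. The following sketch times a search function and computes a nearest-rank 95th percentile. `fake_search` is a hypothetical placeholder for a call to your retrieval engine, and the helper names are assumptions.

```python
import time


def measure_latency_ms(search_fn, queries):
    """Call search_fn once per query and return each latency in milliseconds."""
    timings = []
    for query in queries:
        start = time.perf_counter()
        search_fn(query)
        timings.append((time.perf_counter() - start) * 1000.0)
    return timings


def p95(values):
    """Nearest-rank 95th percentile of a non-empty sequence."""
    ordered = sorted(values)
    if not ordered:
        raise ValueError("p95 requires at least one value")
    rank = (95 * len(ordered) + 99) // 100  # integer form of ceil(0.95 * n)
    return ordered[rank - 1]


def fake_search(query):
    """Hypothetical placeholder; replace with a real retrieval call."""
    return [query.upper()]


timings = measure_latency_ms(fake_search, ["vacation policy", "expense limits"])
meets_budget = p95(timings) < 100.0  # compare against the 100 ms target
```

Running the same measurement under realistic concurrency and index size matters, since latency on a small test index rarely predicts production behavior.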

Strategic Insight

Search quality determines LLM output quality—making this one of the most critical architectural decisions.

Explore data discovery solutions from Techment.

Best RAG Tools for Enterprises

Enterprise-Grade Options

- Meilisearch – Scalable hybrid search

- Vespa – Large-scale deployments

- MongoDB – Unified data + AI

Enterprise Requirements

- Security and compliance (SOC2, GDPR)

- Scalability across millions of documents

- Integration with existing data ecosystems

Strategic Insight

Enterprises must prioritize governance, reliability, and observability over experimentation.

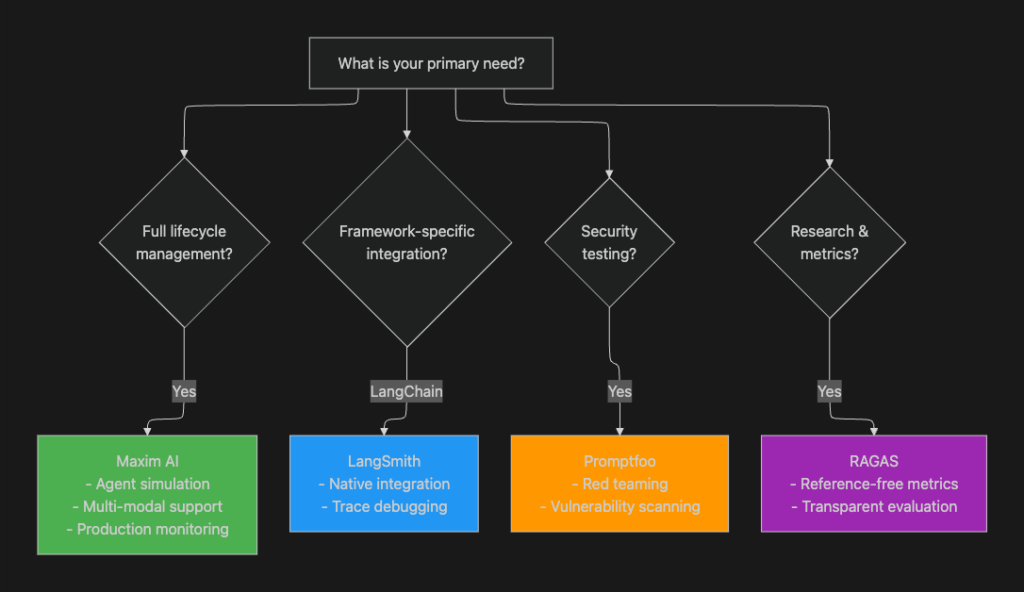

How to Choose the Right RAG Tool (Enterprise Framework)

Choosing among RAG tools requires aligning technical capabilities with business objectives.

1. Retrieval Method

- Keyword (BM25)

- Vector (semantic)

- Hybrid (recommended for enterprises)

2. Performance and Scalability

Evaluate:

- Query latency

- Indexing speed

- Concurrent users

3. Integration Ecosystem

Look for compatibility with:

- LLM providers

- Data platforms

- APIs and SDKs

4. Deployment Model

- Open-source (self-hosted)

- Managed cloud

- Hybrid

5. Cost and ROI

Consider:

- Infrastructure costs

- Licensing

- Operational overhead

6. Governance and Security

- Data access control

- Auditability

- Compliance

Strategic Recommendation

The best RAG tools are those that balance:

- Accuracy

- Scalability

- Cost

- Governance
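
One simple way to make this balance explicit is a weighted scoring matrix. The sketch below is purely illustrative: the weights, the 1 to 5 scores, and the names `tool_x` and `tool_y` are hypothetical placeholders, not evaluations of any tool in this article.

```python
def weighted_score(scores: dict[str, int], weights: dict[str, int]) -> float:
    """Weighted average of per-criterion scores."""
    total_weight = sum(weights.values())
    return sum(scores[criterion] * w for criterion, w in weights.items()) / total_weight


# Hypothetical weights reflecting one organization's priorities.
weights = {"accuracy": 4, "scalability": 2, "cost": 2, "governance": 2}

# Hypothetical 1-5 scores from an internal evaluation.
candidates = {
    "tool_x": {"accuracy": 4, "scalability": 3, "cost": 5, "governance": 2},
    "tool_y": {"accuracy": 5, "scalability": 4, "cost": 2, "governance": 4},
}

ranking = sorted(
    candidates,
    key=lambda name: weighted_score(candidates[name], weights),
    reverse=True,
)
best_fit = ranking[0]
```

The value of the exercise is less in the final number than in forcing stakeholders to agree on the weights before comparing vendors.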

Explore Techment’s deep dive: 7 Proven Strategies to Build Secure, Scalable AI with Microsoft Azure

How Techment Helps Enterprises Build Scalable RAG Architectures

At Techment, we help organizations move beyond experimentation to enterprise-grade RAG implementation. According to Gartner, organizations adopting retrieval-augmented architectures are significantly improving AI reliability and reducing hallucination risks, reinforcing the importance of RAG tools in enterprise AI strategies.

Our Approach

Data foundation first

We ensure your data is AI-ready through governance, quality, and transformation.

Architecture design

We design scalable RAG pipelines aligned with your enterprise ecosystem.

Platform integration

We integrate RAG tools with platforms like Microsoft Fabric, Azure AI, and modern data stacks.

AI readiness and governance

We ensure compliance, security, and observability across AI systems.

Key Capabilities

- Data modernization and transformation

- Vector search and retrieval optimization

- AI copilots and enterprise assistants

- Governance with tools like Microsoft Purview

Business Impact

- Faster AI deployment

- Improved decision intelligence

- Reduced operational risk

For a deeper architectural perspective: Microsoft Fabric Architecture: CTO’s Guide to Modern Analytics & AI

Conclusion

RAG tools have become the foundation of reliable enterprise AI systems. As organizations scale AI adoption, the ability to connect LLMs with trusted data is no longer optional—it is a strategic necessity.

From lightweight frameworks like Embedchain to enterprise-scale platforms like Vespa and Pinecone, each tool serves a distinct purpose in the RAG ecosystem.

The key is not choosing the “best” tool—but choosing the right tool for your architecture, scale, and business goals.

As AI continues to evolve, organizations that invest in robust RAG architectures today will lead the next wave of enterprise intelligence.

FAQs

1. What are RAG tools?

RAG tools connect LLMs with external data sources to improve accuracy and reduce hallucinations.

2. Which RAG tool is best for enterprises?

Pinecone, Vespa, and MongoDB are strong choices depending on scale and architecture needs.

3. Are open-source RAG tools enough for production?

Yes, but they require engineering maturity and infrastructure management.

4. How long does it take to implement RAG?

Typically 6–16 weeks depending on complexity and data readiness.

5. What skills are required?

Data engineering, ML engineering, and cloud architecture expertise.