For most enterprises, AI ambition is not limited by algorithms or tools; it is constrained by legacy systems. Decades-old platforms, tightly coupled architectures, and fragmented data estates make it nearly impossible to operationalize AI at scale. As a result, organizations struggle to move beyond isolated pilots while competitors embed intelligence across core business processes.

To migrate legacy systems to AI-enabled platforms, enterprises must rethink far more than infrastructure. This transformation touches data architectures, application design, governance models, security controls, and operating processes. Done incorrectly, modernization initiatives introduce risk, downtime, and spiraling costs. Done right, they unlock real-time insights, predictive capabilities, and continuous optimization.

This blog on how to migrate legacy systems to AI-enabled platforms provides a practical, enterprise-grade roadmap for CTOs, CDOs, and data architects navigating legacy system modernization in the AI era. It explains why traditional migration approaches fail, how AI changes modernization priorities, and what steps leaders must follow to move from brittle legacy environments to resilient, AI-ready platforms without disrupting mission-critical operations.

Read more on why enterprises must adopt a 2025 AI Data Quality Framework spanning acquisition, preprocessing, feature engineering, governance, and continuous monitoring.

TL;DR Summary

- Legacy systems are the biggest blocker to enterprise AI adoption

- AI success depends on modern data, cloud-native platforms, and governance

- Migrate legacy systems to AI-enabled platforms with a phased execution plan, not rip-and-replace

- CTOs must align architecture, operating models, and risk controls before they migrate legacy systems to AI-enabled platforms

- A structured roadmap for migrating legacy systems to AI-enabled platforms enables scalable, compliant AI transformation

Enhance your analytics outcomes and unify fragmented data with our data engineering solutions and MS Fabric capabilities.

Why Legacy Systems Block AI-Enabled Digital Transformation

Legacy architectures were not designed for AI

Most legacy systems were engineered for transactional consistency, not analytical intelligence. Monolithic applications, batch-based ETL pipelines, and rigid schemas prevent the real-time data access that AI models require. When enterprises attempt AI integration with legacy systems, they often discover that data latency, poor quality, and system dependencies undermine model accuracy and trust.

Research from Gartner consistently shows that poor data readiness—not algorithms—is the leading cause of AI failure in enterprises. Legacy environments amplify this challenge by locking data into proprietary formats and siloed platforms.

From a strategic standpoint, legacy systems create three AI blockers:

- Data immobility: Critical data cannot move fast enough to AI platforms

- Architectural rigidity: Changes require long release cycles

- Operational risk: Any modification threatens core business stability

Without addressing these constraints, attempts to migrate legacy systems to AI-enabled platforms stall early.

Read more on how Microsoft Fabric AI solutions fundamentally transform how enterprises unify data, automate intelligence, and deploy AI at scale in our blog.

The hidden cost of delaying legacy modernization

Many enterprises delay legacy system modernization due to perceived risk or sunk-cost bias. However, maintaining legacy environments while pursuing AI innovation introduces compounding costs. Parallel systems emerge. Manual workarounds proliferate. Shadow IT fills the gaps.

Commonly cited industry estimates suggest that maintaining legacy systems can consume up to 80% of annual IT budgets. This imbalance directly impacts AI programs, which depend on continuous experimentation, scalable compute, and high-quality data pipelines.

For CTOs, the question is no longer whether to modernize—but how fast and how safely they can migrate legacy systems to AI-enabled platforms without destabilizing operations.

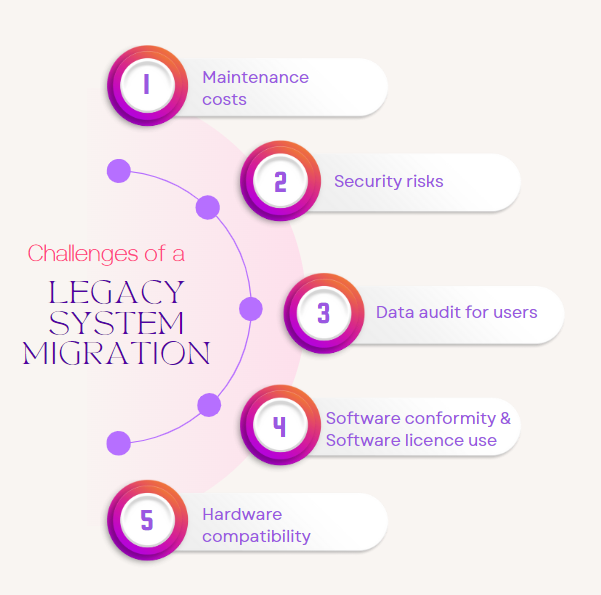

Challenges of Legacy Migration

Migrating legacy systems to modern, AI-enabled platforms comes with several strategic and technical challenges:

1. Complex, Monolithic Architectures

Legacy systems are often tightly coupled and poorly documented, making it difficult to isolate components for migration without disrupting core operations.

2. Data Silos and Poor Data Quality

Historical data may be fragmented across systems, inconsistent in format, or lacking governance standards—complicating integration and analytics readiness.

3. Business Continuity Risks

Downtime, performance degradation, or data loss during migration can directly impact revenue, customer experience, and compliance.

4. Integration Constraints

Older systems may not support modern APIs or cloud-native integrations, requiring custom connectors or middleware solutions.

5. Skills and Change Management Gaps

Teams accustomed to legacy environments may resist new technologies, and organizations often lack in-house expertise in cloud, AI, or modern DevOps practices.

6. Cost and Timeline Uncertainty

Hidden technical debt, undocumented dependencies, and evolving business requirements can inflate budgets and extend project timelines.

Addressing these challenges requires a phased migration strategy, strong governance, and alignment between business and technology stakeholders.

Why “lift-and-shift” fails for AI workloads

Traditional cloud migration strategies often rely on lift-and-shift approaches. While this may reduce infrastructure costs, it does not enable AI-powered legacy migration. Legacy problems simply move to the cloud.

AI workloads require:

- Event-driven architectures

- Decoupled data layers

- Elastic compute and storage

- Built-in governance and observability

Without redesigning the underlying architecture, cloud migration for legacy systems becomes an expensive hosting exercise rather than a transformation.

Enterprises that succeed with AI adopt modernization roadmaps that explicitly prioritize AI readiness—not just cloud adoption.

Learn how our AI modernization solutions help enterprises thrive with intelligent automation, real-time analytics, and governed, integrated AI ecosystems.

Defining AI-Enabled Platforms in the Enterprise Context

What makes a platform “AI-enabled”?

An AI-enabled platform is not defined by embedded machine learning alone. It is an ecosystem designed to continuously generate, govern, and operationalize intelligence across the enterprise.

Core characteristics include:

- Unified data fabric supporting structured and unstructured data

- Real-time ingestion and processing pipelines

- Built-in analytics and AI services

- Enterprise-grade governance, security, and compliance

- Composable architecture enabling rapid innovation

Platforms such as Microsoft Azure, when architected correctly, provide these capabilities—but only if legacy dependencies are systematically addressed.

Read our blog on why Microsoft Azure for enterprises has become the foundation of cloud modernization, thanks to its breadth of services, enterprise-grade governance, global infrastructure, and deep AI integration.

AI-enabled platforms shift modernization priorities

Legacy system modernization historically focused on cost reduction and operational efficiency. AI changes the equation. Modernization now becomes a growth and differentiation strategy.

When enterprises migrate legacy systems to AI-enabled platforms, they unlock:

- Predictive demand and supply optimization

- Intelligent customer engagement

- Autonomous operational decision-making

- Continuous performance optimization

This requires rethinking data migration for AI-ready platforms—not merely moving historical data, but enabling continuous learning and feedback loops.

Platform thinking vs application-by-application upgrades

One common failure pattern is modernizing individual applications in isolation. This creates fragmented AI capabilities and inconsistent governance.

AI-enabled digital transformation strategies require platform thinking:

- Shared data services

- Standardized model deployment pipelines

- Centralized governance and monitoring

- Reusable AI components

This approach reduces risk while accelerating enterprise-wide AI adoption.

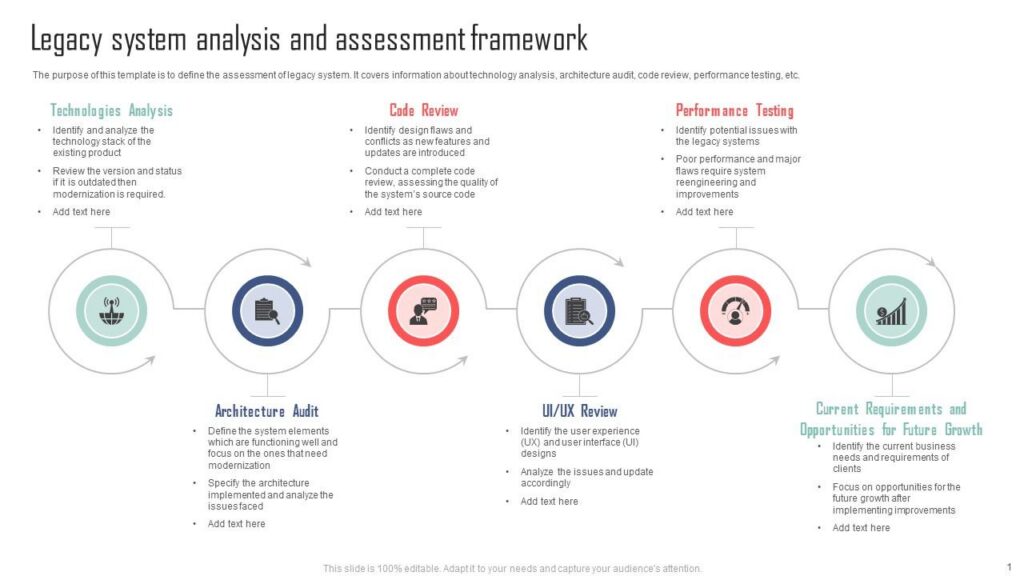

Step 1: Assess Legacy Readiness for AI Modernization

Establish a baseline across systems, data, and risk

Before execution, leaders must understand what they are modernizing. A legacy readiness assessment goes beyond infrastructure inventories.

Key dimensions include:

Application landscape

- Monolith vs modular design

- Dependency complexity

- Release frequency and automation maturity

Data estate

- Data quality, lineage, and ownership

- Real-time vs batch availability

- Sensitivity and regulatory exposure

Operating model

- Skills readiness

- DevOps and MLOps maturity

- Governance enforcement

Enterprises that skip this step underestimate the effort required to migrate legacy systems to AI-enabled platforms.

Identify AI value pools tied to legacy systems

Not every legacy system should be modernized simultaneously. Strategic prioritization is essential.

High-impact candidates typically:

- Sit on high-value operational data

- Influence customer experience or revenue

- Suffer from performance or scalability issues

- Block downstream analytics and AI initiatives

This value-based approach aligns modernization investments with business outcomes rather than technical preferences.
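
One way to make this prioritization repeatable is a simple weighted score over the four criteria above. The sketch below is illustrative only: the system names, the 0-5 ratings, and the weights are hypothetical assumptions, not figures from any real assessment.

```python
from dataclasses import dataclass

# Hypothetical weights for the four criteria listed above; tune to your context.
WEIGHTS = {
    "data_value": 0.35,
    "revenue_impact": 0.30,
    "scalability_pain": 0.15,
    "blocks_ai": 0.20,
}


@dataclass(frozen=True)
class LegacySystem:
    name: str
    data_value: int        # 0-5: value of the operational data it holds
    revenue_impact: int    # 0-5: influence on customer experience or revenue
    scalability_pain: int  # 0-5: severity of performance or scalability issues
    blocks_ai: int         # 0-5: how much it blocks downstream analytics and AI


def priority_score(system: LegacySystem) -> float:
    """Weighted sum of the four ratings, in the range 0-5."""
    return sum(getattr(system, name) * weight for name, weight in WEIGHTS.items())


def rank(systems: list[LegacySystem]) -> list[LegacySystem]:
    """Return systems with the highest-priority candidates first."""
    return sorted(systems, key=priority_score, reverse=True)


# Hypothetical portfolio used purely for illustration.
portfolio = [
    LegacySystem("order-management", 5, 5, 3, 4),
    LegacySystem("internal-wiki", 1, 0, 1, 0),
    LegacySystem("billing-mainframe", 4, 4, 4, 5),
]
ranked = rank(portfolio)
```

The weights are where business stakeholders should weigh in; changing them is cheaper than re-arguing the ranking system by system.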

Explore similar frameworks for architecture, implementation, and scaling conversational AI securely and efficiently in our latest blog on Conversational AI on Microsoft Azure: Building Intelligent Enterprise Assistants.

Risk classification drives migration strategy

Legacy systems vary widely in risk tolerance. Core financial platforms demand different approaches than peripheral reporting systems.

Risk classification should account for:

- Regulatory exposure

- Downtime tolerance

- Data sensitivity

- Customer impact

This classification informs whether systems follow re-platforming, refactoring, or incremental strangler-pattern modernization paths.
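
As a rough illustration, the classification can be encoded as a small rule that maps a risk profile to a modernization path. The 1-3 rating scale and the thresholds below are hypothetical assumptions chosen for the example; a real program would calibrate them with risk and compliance teams.

```python
from dataclasses import astuple, dataclass
from enum import Enum


class Path(Enum):
    REPLATFORM = "re-platform"
    REFACTOR = "refactor"
    STRANGLER = "strangler pattern"


@dataclass(frozen=True)
class RiskProfile:
    # Each dimension is rated 1 (low risk) to 3 (high risk).
    regulatory_exposure: int
    downtime_risk: int      # 3 = very little tolerance for downtime
    data_sensitivity: int
    customer_impact: int


def choose_path(profile: RiskProfile) -> Path:
    """Map a risk profile to a path using assumed, illustrative thresholds."""
    ratings = astuple(profile)
    # Any high-risk dimension: modernize incrementally behind a facade.
    if max(ratings) == 3 or sum(ratings) >= 10:
        return Path.STRANGLER
    # Moderate overall risk: redesign the application in planned stages.
    if sum(ratings) >= 7:
        return Path.REFACTOR
    # Low risk: move to managed services with minimal redesign.
    return Path.REPLATFORM


core_ledger = RiskProfile(3, 3, 3, 3)
partner_portal = RiskProfile(2, 2, 2, 2)
reporting_tool = RiskProfile(1, 1, 1, 1)
```

Here `choose_path(core_ledger)` selects the strangler pattern, while the low-risk reporting tool is simply re-platformed.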

Step 2: Design a Modernization Roadmap from Legacy to AI

Why phased roadmaps outperform big-bang migrations

Enterprises that attempt wholesale replacements rarely succeed. AI-enabled modernization requires iterative execution.

A practical modernization roadmap from legacy to AI typically follows four phases:

- Stabilize and expose legacy data

- Modernize data and integration layers

- Introduce AI services incrementally

- Decommission legacy components

Each phase delivers standalone value while reducing dependency risk.

Align architecture decisions with AI operating models

Architecture choices must reflect how AI will be developed, deployed, and governed.

Critical decisions include:

- Centralized vs federated data ownership

- Streaming vs batch processing

- Model lifecycle governance

- Human-in-the-loop controls

Legacy architecture transformation to AI fails when these decisions are deferred or fragmented across teams.

Explore how enterprise reliability improves with governance-forward architecture in our data governance solution offerings.

Build AI readiness into every migration wave

Every migration wave should answer one question: Does this make AI easier to deploy, scale, or govern?

Examples include:

- Replacing point-to-point integrations with event hubs

- Introducing metadata-driven data pipelines

- Standardizing identity and access management

- Embedding observability across data flows

This ensures that modernization efforts compound toward AI enablement rather than technical debt reduction alone.
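
To make the first example concrete, the sketch below shows the publish/subscribe idea behind an event hub using an in-process stand-in. The `EventHub` class, the topic name, and the consumers are hypothetical; a production system would use a managed message broker rather than this toy implementation.

```python
from collections import defaultdict
from typing import Callable

Event = dict
Handler = Callable[[Event], None]


class EventHub:
    """In-memory stand-in for a broker: producers publish, consumers subscribe."""

    def __init__(self) -> None:
        self._subscribers: dict[str, list[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: Event) -> int:
        """Deliver the event to every subscriber; return how many received it."""
        handlers = self._subscribers.get(topic, [])
        for handler in handlers:
            handler(event)
        return len(handlers)


hub = EventHub()
warehouse_inbox: list[Event] = []
scoring_inbox: list[Event] = []

# The legacy order system publishes once. New consumers, such as an AI
# scoring service, attach without any change to the producer.
hub.subscribe("order.created", warehouse_inbox.append)
hub.subscribe("order.created", scoring_inbox.append)
delivered = hub.publish("order.created", {"order_id": 42, "total": 99.5})
```

With point-to-point integration, adding the scoring service would have meant modifying the order system; here it is one extra `subscribe` call.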

Step 3: Modernize Data Foundations for AI-Ready Platforms

Data modernization is the real AI migration

Enterprises often underestimate the role of data in AI-powered legacy migration. Infrastructure upgrades without data modernization deliver limited value.

AI-ready data platforms require:

- High-quality, well-governed data

- Unified access across domains

- Real-time and historical availability

- Clear lineage and accountability

Legacy data models, designed for transactional integrity, rarely meet these requirements.

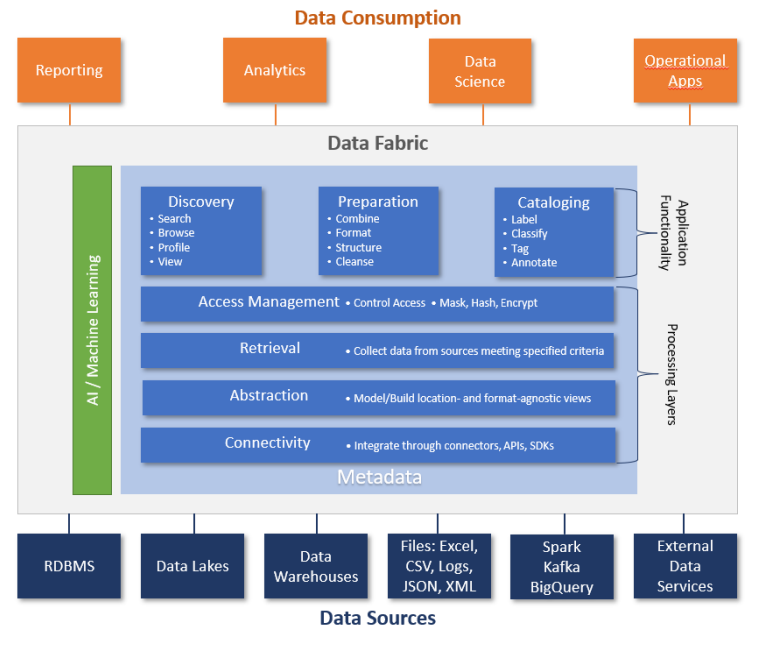

Implement a unified data fabric

A data fabric abstracts data access across legacy and modern systems, enabling AI workloads without full system replacement.

Key capabilities include:

- Logical data virtualization

- Automated metadata management

- Policy-based access control

- Cross-platform query and analytics

This approach accelerates AI integration with legacy systems while reducing migration risk.
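
The idea of a logical access layer with policy-based control can be sketched in a few lines. Everything here is a hypothetical illustration: the `DataFabric` class, the dataset names, the roles, and the stubbed connectors are assumptions, not the API of any real data fabric product.

```python
from typing import Callable, Iterable

Row = dict
Source = Callable[[], Iterable[Row]]


def read_mainframe_accounts() -> Iterable[Row]:
    # Stand-in for a connector to a legacy system (hypothetical data).
    return [{"account": "A-1", "balance": 120.0}]


def read_lakehouse_events() -> Iterable[Row]:
    # Stand-in for a connector to a modern platform (hypothetical data).
    return [{"account": "A-1", "event": "login"}]


class DataFabric:
    """One logical entry point over many physical sources, with access policies."""

    def __init__(self) -> None:
        self._sources: dict[str, Source] = {}
        self._policies: dict[str, set[str]] = {}

    def register(self, dataset: str, source: Source, allowed_roles: set[str]) -> None:
        self._sources[dataset] = source
        self._policies[dataset] = set(allowed_roles)

    def read(self, dataset: str, role: str) -> list[Row]:
        # Deny by default: unknown datasets and unlisted roles are both rejected.
        if role not in self._policies.get(dataset, set()):
            raise PermissionError(f"role {role!r} may not read {dataset!r}")
        return list(self._sources[dataset]())


fabric = DataFabric()
fabric.register("accounts", read_mainframe_accounts, allowed_roles={"risk-model"})
fabric.register("events", read_lakehouse_events, allowed_roles={"risk-model", "marketing"})
```

Consumers ask for a dataset by name and never learn whether it lives on the mainframe or in the lakehouse, so the underlying source can be migrated later without changing the callers.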

Techment’s framework for unified analytics is outlined in Microsoft Data Fabric vs Traditional Data Warehousing.

Data quality and governance cannot be postponed

AI amplifies data issues rather than hiding them. Poor-quality legacy data leads to biased, inaccurate, or non-compliant AI outputs.

Enterprises must embed:

- Automated data quality checks

- Domain ownership models

- Policy enforcement and auditing

- Continuous monitoring

This foundation is essential before scaling AI-enabled platforms.
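
A minimal sketch of automated data quality checks follows. The three rules, the field names, and the 95% threshold are hypothetical assumptions for a customer record; real rules would come from the owning data domain.

```python
from typing import Callable

Record = dict
Check = Callable[[Record], bool]

# Hypothetical rules for a customer record.
CHECKS: dict[str, Check] = {
    "customer_id present": lambda r: bool(r.get("customer_id")),
    "email contains @": lambda r: "@" in (r.get("email") or ""),
    "balance is non-negative": lambda r: (
        isinstance(r.get("balance"), (int, float)) and r["balance"] >= 0
    ),
}


def run_checks(records: list[Record]) -> dict[str, float]:
    """Return the pass rate (0.0-1.0) of each check across the records."""
    if not records:
        return {name: 1.0 for name in CHECKS}
    return {
        name: sum(check(r) for r in records) / len(records)
        for name, check in CHECKS.items()
    }


def failing_checks(pass_rates: dict[str, float], threshold: float = 0.95) -> list[str]:
    """Names of checks whose pass rate falls below the threshold."""
    return [name for name, rate in pass_rates.items() if rate < threshold]


# Hypothetical sample extracted from a legacy table.
sample = [
    {"customer_id": "C1", "email": "a@example.com", "balance": 10.0},
    {"customer_id": "C2", "email": "b@example.com", "balance": 0},
    {"customer_id": "", "email": "c@example.com", "balance": 5},
    {"customer_id": "C4", "email": None, "balance": -3},
]
rates = run_checks(sample)
```

Running the same checks on every pipeline load, and alerting on `failing_checks`, is what turns a one-off cleanup into continuous monitoring.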

Step 4: Cloud Migration Strategies for AI-Enabled Legacy Modernization

Why cloud migration is necessary—but not sufficient

Cloud migration for legacy systems is often treated as the end goal. In reality, it is only an enabler. AI workloads demand elastic compute, scalable storage, and managed services—but simply hosting legacy systems in the cloud does not make them AI-ready.

Enterprises that successfully migrate legacy systems to AI-enabled platforms treat cloud migration as a foundational layer rather than the transformation itself. The objective is not infrastructure parity, but architectural optionality—the ability to evolve systems continuously as AI capabilities mature.

This distinction is critical for CTOs. Cloud migration decisions directly influence future AI cost structures, governance models, and innovation velocity.

Choosing the right cloud migration pattern for AI-enabled platforms

There is no single cloud migration approach suitable for AI-enabled modernization. Instead, enterprises must apply different patterns based on system criticality and AI potential.

Rehost (Lift-and-shift)

Useful only for low-value or short-lived systems. It delivers speed but minimal AI benefit.

Re-platform

Introduces managed databases, containerization, and basic scalability—often a transitional step toward AI readiness.

Refactor

The most AI-aligned approach. Applications are redesigned into microservices or event-driven components that support real-time intelligence.

Replace

Adopted when legacy systems fundamentally block AI adoption and commercial SaaS or modern platforms provide better long-term ROI.

Most organizations use a hybrid portfolio approach, applying different patterns across the application landscape. This reduces risk while steadily increasing AI readiness.

Architecting cloud foundations for AI workloads

AI-enabled platforms require cloud architectures that differ significantly from traditional enterprise hosting environments.

Key architectural principles include:

- Decoupled compute and storage to support model training and inference at scale

- Event-driven integration for real-time AI decisioning

- Native analytics and AI services embedded into the platform

- Policy-driven security and governance across data and models

Cloud-native services enable these capabilities—but only if legacy dependencies are systematically removed or abstracted.

Techment explores this architectural shift in depth in Microsoft Azure for Enterprises: Cloud & AI Modernization.

Step 5: Application Modernization Patterns for AI Integration

Why application architecture determines AI success

AI integration with legacy systems often fails at the application layer. Monolithic applications tightly couple business logic, data access, and user interfaces—making it difficult to embed AI services without destabilizing the system.

Modern AI-enabled platforms rely on composable applications, where intelligence can be injected, replaced, or scaled independently.

This architectural shift is not cosmetic. It determines how quickly enterprises can operationalize AI insights and adapt models over time.

The strangler pattern: Migrate legacy systems to AI-enabled platforms without disruption

One of the most effective patterns for legacy architecture transformation to AI is the strangler pattern. Rather than rewriting systems, enterprises incrementally replace legacy functionality with modern services.

The process typically involves:

- Exposing legacy functionality via APIs

- Redirecting specific workflows to modern microservices

- Gradually retiring legacy components

This pattern allows AI-powered services—such as recommendations, forecasting, or anomaly detection—to be introduced without full system replacement.

For mission-critical environments, this approach significantly reduces operational risk.
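
The routing at the heart of the strangler pattern can be sketched as a facade that sends migrated paths to new services and everything else to the legacy system. The handler functions, paths, and the `StranglerFacade` class are hypothetical names used for illustration only.

```python
from typing import Callable

Handler = Callable[[dict], dict]


def legacy_system(request: dict) -> dict:
    # Stand-in for a call into the existing monolith.
    return {"handled_by": "legacy", "path": request["path"]}


def forecasting_service(request: dict) -> dict:
    # Stand-in for a new AI-backed microservice.
    return {"handled_by": "modern", "path": request["path"]}


class StranglerFacade:
    """Routes each request to a migrated service if one exists, else to legacy."""

    def __init__(self, legacy: Handler) -> None:
        self._legacy = legacy
        self._migrated: dict[str, Handler] = {}

    def migrate(self, path_prefix: str, handler: Handler) -> None:
        """Redirect all requests under path_prefix to the new handler."""
        self._migrated[path_prefix] = handler

    def handle(self, request: dict) -> dict:
        matches = [p for p in self._migrated if request["path"].startswith(p)]
        if matches:
            # Prefer the most specific (longest) migrated prefix.
            return self._migrated[max(matches, key=len)](request)
        return self._legacy(request)


facade = StranglerFacade(legacy_system)
before = facade.handle({"path": "/forecast/weekly"})
facade.migrate("/forecast", forecasting_service)
after = facade.handle({"path": "/forecast/weekly"})
untouched = facade.handle({"path": "/invoices/42"})
```

Because callers only ever talk to the facade, each `migrate` call is a small, reversible step: removing the prefix sends traffic back to the legacy system.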

Embedding AI services into modernized applications

Once applications are modularized, AI capabilities can be embedded as services rather than features.

Examples include:

- Predictive scoring services

- Natural language interfaces

- Intelligent routing and prioritization engines

- Automated decision-support modules

This service-based model ensures that AI evolves independently of application release cycles, enabling continuous improvement.

MLOps as a first-class architectural concern

AI-powered legacy migration often fails when model lifecycle management is treated as an afterthought. Models degrade, drift, and require constant retraining.

Enterprises must embed MLOps pipelines into their application architecture, covering:

- Model versioning and testing

- Deployment automation

- Monitoring and retraining triggers

- Explainability and auditability

Without MLOps, AI-enabled platforms quickly become operational liabilities rather than strategic assets.
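
As one example of a monitoring and retraining trigger, the sketch below compares a feature's live distribution against its training baseline using the population stability index (PSI). This is a swapped-in illustration, not a prescribed method: the bin count, the smoothing constant, and the 0.2 threshold (a commonly quoted rule of thumb) are all assumptions to be tuned per model.

```python
import math


def psi(expected: list[float], actual: list[float], bins: int = 10) -> float:
    """Population stability index between a baseline and a live sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def proportions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # Small smoothing term so empty bins do not cause log(0).
        return [(c + 1e-6) / (len(values) + bins * 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def needs_retraining(expected: list[float], actual: list[float],
                     threshold: float = 0.2) -> bool:
    """Flag the model for retraining when drift exceeds the assumed threshold."""
    return psi(expected, actual) > threshold


# Hypothetical data: the live feature has shifted toward the upper half.
baseline = [i / 100 for i in range(100)]
shifted = [0.5 + i / 200 for i in range(100)]
```

In a pipeline, `needs_retraining` would run on a schedule per feature, and a positive result would open a retraining job rather than retrain silently, keeping a human review step in the loop.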

Step 6: Security, Compliance, and Responsible AI by Design

Why AI amplifies enterprise risk

AI-enabled digital transformation strategies introduce new categories of risk. Models influence decisions, automate actions, and process sensitive data—often at scale.

Legacy environments already struggle with governance. Adding AI without modern controls compounds exposure.

Enterprises must address:

- Data privacy and sovereignty

- Model bias and explainability

- Regulatory compliance

- Cybersecurity threats

These considerations must be embedded into modernization roadmaps from the outset.

Zero trust architectures for AI platforms

AI-ready platforms require zero trust security models, where every interaction is authenticated, authorized, and monitored.

Key practices include:

- Fine-grained identity and access management

- Encryption across data at rest and in transit

- Continuous threat detection

- Least-privilege access for AI services

Legacy security models—built around perimeter defenses—are insufficient for distributed AI ecosystems.

Governance frameworks for enterprise AI

Governance is not an obstacle to AI—it is an enabler of scale. Enterprises that formalize governance early deploy AI faster and with greater confidence.

Effective governance frameworks include:

- Clear data ownership and stewardship

- Model approval and review processes

- Ethical AI principles and controls

- Continuous compliance monitoring

Explore how we help enterprises build governance-by-design foundations through our data services.

Step 7: Managing Change, Skills, and Operating Models

Why technology alone is not enough to migrate legacy systems to AI-enabled platforms

Even the best-designed platforms fail without organizational alignment. Migrating legacy systems to AI-enabled platforms fundamentally changes how teams build, deploy, and operate systems.

CTOs must address:

- Skills gaps in data engineering and AI

- Cultural resistance to automation

- Siloed ownership models

- Legacy KPIs that discourage experimentation

Ignoring these factors slows adoption and erodes ROI.

Building AI-ready operating models

AI-enabled enterprises adopt operating models that emphasize:

- Cross-functional teams

- Product-centric ownership

- Continuous delivery and experimentation

- Data-driven decision-making

This shift requires new incentives, governance structures, and leadership behaviors.

Modernization programs that explicitly address operating model transformation achieve significantly higher AI adoption rates.

Upskilling and partner ecosystems

Few enterprises possess all the skills required for AI-powered legacy migration internally. Strategic partnerships accelerate progress while reducing risk.

Partners bring:

- Proven reference architectures

- Industry-specific best practices

- Change management experience

- Accelerated delivery capabilities

Selecting partners who understand both legacy complexity and AI strategy is essential.

Learn how we can help with intelligent automation, human-like interactions, and scalable business intelligence through our AI-powered solutions.

Common Pitfalls and Trade-Offs in Legacy-to-AI Migration

Over-modernizing low-value systems

Not every system needs AI. Over-investing in low-impact platforms dilutes focus and consumes scarce resources.

Successful enterprises prioritize systems where AI delivers measurable business value.

Underestimating data complexity

Data migration for AI-ready platforms is rarely straightforward. Legacy data often lacks consistency, documentation, and ownership.

Organizations that invest early in data governance and quality outperform peers in AI outcomes.

Treating AI as a feature, not a capability

AI is not a one-time implementation. It is a continuously evolving capability that requires ongoing investment.

Enterprises that budget only for initial deployment struggle to sustain value.

Techment provides a detailed framework in Data Governance for Data Quality: Future-Proofing Enterprise Data.

How Techment Helps Enterprises Migrate Legacy Systems to AI-Enabled Platforms

Techment partners with enterprises to deliver end-to-end legacy-to-AI transformation, combining strategy, architecture, and execution.

Our approach includes:

- AI-first modernization roadmaps aligned to business value

- Legacy system assessments across applications, data, and risk

- Cloud and data platform modernization for AI readiness

- Governance-by-design frameworks for compliance and trust

- MLOps and operating model enablement for sustainable AI

Rather than forcing rip-and-replace, Techment helps organizations progressively migrate legacy systems to AI-enabled platforms, minimizing disruption while maximizing long-term value.

This approach is reflected across our work in Enterprise AI Strategy in 2026 and Fabric AI Readiness: Preparing Data for Scalable AI Adoption.

Conclusion

The path to enterprise AI does not begin with models—it begins with modernization. Legacy systems, once the backbone of enterprise IT, now represent the greatest constraint on AI-driven growth. To remain competitive, organizations must deliberately and systematically migrate legacy systems to AI-enabled platforms.

This journey requires more than technology upgrades. It demands architectural clarity, disciplined execution, strong governance, and organizational alignment. Enterprises that approach modernization as a strategic capability—not a cost-cutting exercise—unlock AI at scale.

As AI continues to reshape industries, the ability to modernize legacy systems safely and intelligently will define the next generation of digital leaders. Techment stands ready to guide that transformation—helping enterprises turn legacy complexity into AI-powered advantage.

Our Microsoft Data and AI Partner blog explores the strategic value the right Microsoft solutions partner brings to enterprises, and why the organizations leading the AI revolution are doing it with the right partner beside them.

FAQs

How long does it take to migrate legacy systems to AI-enabled platforms?

Most enterprises see meaningful progress within 12–18 months, with continuous evolution beyond that.

Do we need to replace all legacy systems to adopt AI?

No. Incremental modernization and abstraction patterns enable AI without full replacement.

What skills are most critical for AI-enabled modernization?

Data engineering, cloud architecture, MLOps, and governance leadership are essential.

Is cloud mandatory for AI-enabled platforms?

While hybrid models exist, cloud-native capabilities significantly accelerate AI adoption.

How do we manage regulatory risk during AI modernization?

By embedding governance, security, and compliance controls from the start.