Case Study

Transforming Local Government Operations with Private LLM: Secure, Scalable, and Future-Ready

Discover how we enabled a pioneering provider of tax and workers’ compensation solutions in the United States to adopt a private LLM hosted in Azure, paving the way for secure and efficient AI-driven operations across local government solutions.

The Challenge

The main challenge was enabling AI-powered services without relying on externally hosted GPT services such as OpenAI's, which posed compliance and data security risks. The client needed to validate whether a private LLM hosted in Azure could deliver the required scalability, security, and performance while handling local government use cases.

Another critical concern was cost-effectiveness. Hosting LLMs on GPUs is resource-intensive, and the client required a clear cost-benefit analysis comparing CPU vs GPU performance to determine infrastructure requirements for future rollouts.

The Solution

Techment collaborated with the client to develop a Proof of Concept (POC) that demonstrated the feasibility of hosting a private Llama 3 (8B) model within a secure Azure environment.

The POC focused on:

- Deploying the model on GPU-enabled Azure VMs.

- Building a Retrieval-Augmented Generation (RAG) chatbot using the client’s domain-specific knowledge base.

- Running controlled tests to capture response quality, speed, and cost.

- Benchmarking the performance of queries on CPUs vs GPUs to guide infrastructure planning.

This initiative laid the foundation for the client’s long-term AI strategy, ensuring private, secure, and scalable LLM integration across their analytics framework and beyond.
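To make the RAG chatbot item above more concrete, here is a minimal sketch of the retrieve-then-prompt step. It is an illustration under stated assumptions, not the client's pipeline: the function names (`tokenize`, `cosine`, `retrieve`, `build_prompt`) are hypothetical, and word-count cosine similarity stands in for whichever embedding model and vector store the actual POC used, which this case study does not specify.

```python
import math
import re
from collections import Counter
from typing import List


def tokenize(text: str) -> Counter:
    """Lowercase the text and count its alphanumeric tokens."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two token-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question: str, passages: List[str], top_k: int = 2) -> List[str]:
    """Return the top_k passages most similar to the question."""
    q = tokenize(question)
    ranked = sorted(passages, key=lambda p: cosine(q, tokenize(p)), reverse=True)
    return ranked[:top_k]


def build_prompt(question: str, passages: List[str], top_k: int = 2) -> str:
    """Assemble a grounded prompt to send to the privately hosted model."""
    context = "\n".join(f"- {p}" for p in retrieve(question, passages, top_k))
    return (
        "Answer using only the context below.\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\nAnswer:"
    )
```

The string returned by `build_prompt` would then be passed to the hosted Llama 3 (8B) endpoint. Because retrieval and generation both run inside the client's Azure environment, knowledge-base content never leaves it.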

Results

The POC delivered tangible outcomes that validated technical feasibility, optimized infrastructure decisions, and ensured future readiness.

- Prototype Deployment: Successful deployment of the Llama 3 (8B) model in Azure with private hosting.

- RAG Chatbot Implementation: Developed a chatbot integrated with the client’s knowledge base for contextual Q&A.

- Performance Benchmarks: Detailed comparison of response times between CPU and GPU workloads.

- Blueprint for Scale: A private LLM deployment strategy built on a security-first approach.

- Agentic AI Framework Direction: Documented roadmap for deploying multiple AI agents to enhance local government workflows.

- Cost-Benefit Analysis: Clear insights into infrastructure costs and scalability requirements.

- Feasibility Validation: Confirmed private LLM adoption as both technically feasible and strategically valuable.

How We Did It

Techment partnered with Microsoft Azure teams to provision GPU-enabled infrastructure and deploy the Llama 3 (8B) model in a secure environment. The client’s knowledge base was integrated into a RAG pipeline, resulting in a functional prototype chatbot. Our team developed Python-based code to process queries, record response times, and benchmark CPU vs GPU workloads. This approach provided actionable insights into performance trade-offs, scalability considerations, and cost optimization. Additionally, we delivered a deployment blueprint covering hosting strategies, security safeguards, provisioning guides, and agent-based AI integration. The POC was successfully completed in 8 weeks, with a $10,000 execution cost and an estimated $4,200 monthly GPU infrastructure cost for ongoing workloads.
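The benchmarking step described above can be sketched roughly as follows. This is a minimal harness under stated assumptions, not the code delivered in the POC: `time_queries`, `summarize`, and `compare_backends` are hypothetical names, and each backend is assumed to be a plain Python callable that takes a query string and returns the model's answer (for example, a thin wrapper around a CPU-hosted or GPU-hosted endpoint).

```python
import math
import statistics
import time
from typing import Callable, Dict, List


def time_queries(
    generate: Callable[[str], str], queries: List[str], repeats: int = 3
) -> List[float]:
    """Return wall-clock latencies in seconds, one per (query, repeat) call."""
    latencies = []
    for query in queries:
        for _ in range(repeats):
            start = time.perf_counter()
            generate(query)  # the answer itself is discarded; only timing is kept
            latencies.append(time.perf_counter() - start)
    return latencies


def summarize(latencies: List[float]) -> Dict[str, float]:
    """Reduce raw latencies to count, mean, median, and nearest-rank p95."""
    ordered = sorted(latencies)
    p95_index = max(0, math.ceil(0.95 * len(ordered)) - 1)
    return {
        "count": len(ordered),
        "mean_s": statistics.mean(ordered),
        "median_s": statistics.median(ordered),
        "p95_s": ordered[p95_index],
    }


def compare_backends(
    backends: Dict[str, Callable[[str], str]],
    queries: List[str],
    repeats: int = 3,
) -> Dict[str, Dict[str, float]]:
    """Run the same query set against every backend and summarize each one."""
    return {
        name: summarize(time_queries(fn, queries, repeats))
        for name, fn in backends.items()
    }
```

A call such as `compare_backends({"cpu": ask_cpu, "gpu": ask_gpu}, queries)` would return one summary per backend, where `ask_cpu` and `ask_gpu` are placeholder wrappers around the two deployments. Running an identical query set against both keeps the comparison fair, and reporting the median and p95 alongside the mean keeps a few slow outliers from skewing the picture.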

Technical Deep Dive

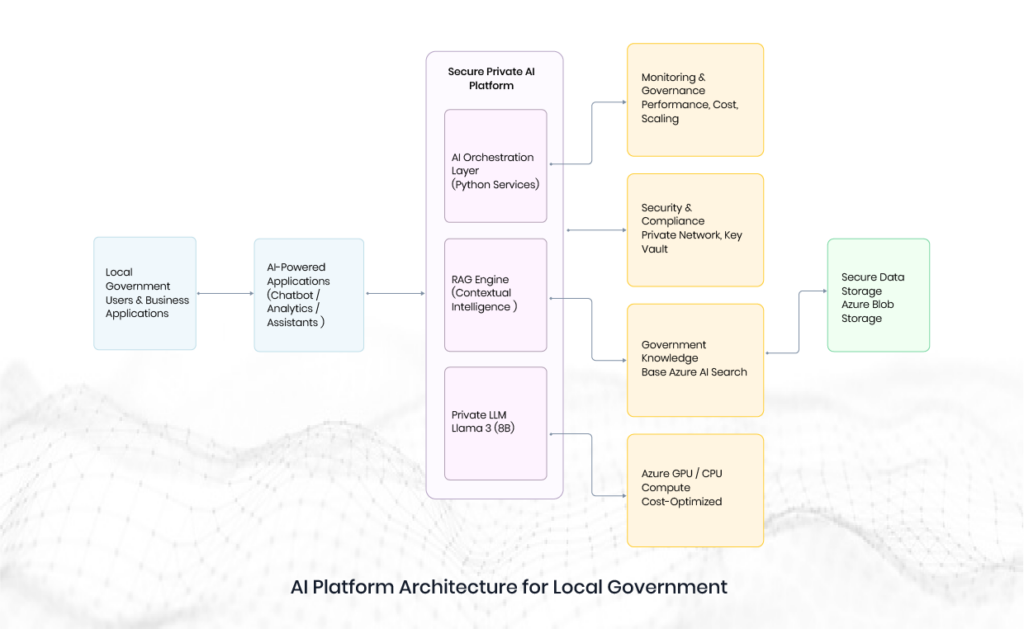

The following diagram represents the logical architecture. It is intentionally simplified for executive and proposal-level discussions.

Tech Stack