Real-time Data is Driving Major Trends in Big Data

Big data is defined by high-volume information that demands innovative, effective processing for better insight and decision-making. Gartner characterizes big data by three Vs (high volume, high velocity, and high variety), and veracity is often added as a fourth. Much of this data sits unused in organizations, so extracting value from it requires smart processing technologies. By using big data technologies, organizations can gain insight and make better decisions that lead to a higher return on investment. But with so many advancements, understanding the prospects of big data technology is critical to assessing which solution is right for an organization.

The variety of data sources available today allows organizations to track trends and forecast their business by analyzing data. Data-driven organizations are the ones succeeding in today's digital world, and they are the ones investing in the data analytics market. The wave of digital assets and processes means more data is being accumulated than ever before, and data analytics is helping to shape businesses.

According to a Fortune Business Insights report, the global big data analytics market is expected to reach USD 549.73 billion by 2028, growing at a CAGR of 13.2% over the 2021-2028 period.

To be successful with big data, organizations need real-time access to data from multiple systems in an easily digestible format, whether it comes from IoT instruments, voice data, unstructured images, structured records, or information stored on devices. This is where a big data fabric comes in: it provides seamless, real-time integration of and access to the multiple data silos in a big data system. We can expect companies to release new solutions that provide efficient, comprehensive data access for specific industries.

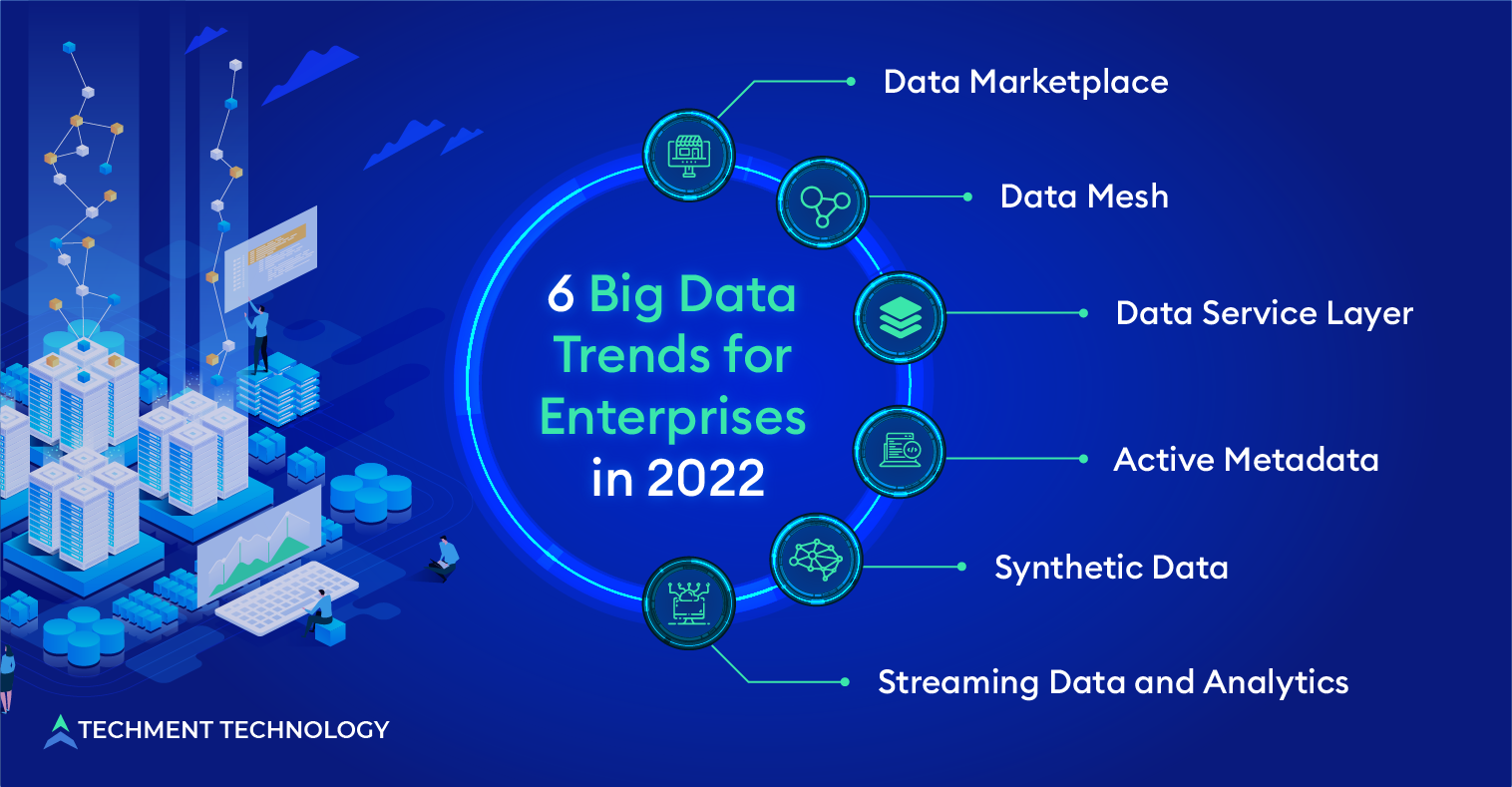

6 Big Data Trends for Enterprises to Follow in 2022 and Beyond

As big data analytics grows in popularity, businesses must stay up to date with big data analytics predictions to assess models and market trends, and be on guard for what comes next. As companies' preferences and needs change over time, their use of big data analytics will increase, and the following big data trends will grow in 2022:

1. Data Marketplace: Old data-sharing models are being replaced by data marketplaces where users can buy and sell data. The huge, exponentially growing volume of data known as big data is becoming more valuable to enterprises, but it is also becoming harder to tame. A data marketplace offers an unparalleled opportunity to monetize data by connecting it with interested consumers, and it facilitates the commercialization of data.

It is a two-sided marketplace where data providers can commercialize their data assets and buyers can find data that meets their needs. The impact of disruptive technologies such as blockchain on data markets will increase rapidly in the near term as those technologies are adopted. Globally, governments will move toward regulating data markets to protect consumer data from proliferation and misuse.

2. Data Mesh: Conceptually, a data mesh is an architectural approach similar to and supportive of an enterprise data fabric, the latter being a holistic way to connect all data within an organization, wherever it resides, and make it accessible on request. A data mesh builds on a distributed architecture by embedding domain-specific knowledge about how data is created and stored, so that the data is usable by consumers in every domain.

Data mesh provides a framework for businesses to democratize both data access and data management by treating data as a product, organized and governed by domain experts. For organizations whose centralized data warehouse model struggles to scale, a data mesh approach is worth serious consideration.
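To make "data as a product" more concrete, here is a minimal Python sketch, assuming a toy in-memory setup: a domain team owns a data product, accepts records only if they match the product's declared schema, and registers the product in a shared catalog where other domains can discover and read it. The names `DataProduct`, `MeshCatalog`, and the `sales` example are hypothetical and do not come from any data mesh product or standard.

```python
from dataclasses import dataclass, field


@dataclass
class DataProduct:
    """Hypothetical data-product descriptor: one domain team owns and serves it."""
    name: str
    domain: str    # owning business domain, e.g. "sales"
    owner: str     # accountable team
    schema: dict   # column name -> expected Python type (the product's contract)
    records: list = field(default_factory=list)

    def publish(self, record: dict) -> None:
        """Accept a record only if it matches the published schema."""
        if set(record) != set(self.schema):
            raise ValueError(
                f"{self.name}: expected fields {sorted(self.schema)}, got {sorted(record)}"
            )
        for column, expected_type in self.schema.items():
            if not isinstance(record[column], expected_type):
                raise TypeError(f"{self.name}.{column} must be {expected_type.__name__}")
        self.records.append(record)


class MeshCatalog:
    """Hypothetical self-serve catalog: consumers discover products by domain and name."""

    def __init__(self):
        self._products = {}

    def register(self, product: DataProduct) -> None:
        self._products[(product.domain, product.name)] = product

    def read(self, domain: str, name: str) -> list:
        # Return a new list so consumers cannot append to or remove from
        # the owning domain's records.
        return list(self._products[(domain, name)].records)


orders = DataProduct("daily_orders", "sales", "sales-data-team",
                     {"order_id": int, "amount": float})
orders.publish({"order_id": 1, "amount": 19.99})

catalog = MeshCatalog()
catalog.register(orders)
print(catalog.read("sales", "daily_orders"))  # [{'order_id': 1, 'amount': 19.99}]
```

The schema check stands in for the governance a domain team applies to its product; a real data mesh platform would also handle access control, versioning, and discoverability at scale.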

3. Data Service Layer: Data service layers are essential for delivering data to end users within and between organizations. A real-time service layer delivers data to end users with real-time or near-real-time responses. These service layers build on the following data management constructs:

- Data Lakehouse: A data lakehouse provides low-cost storage to hold large amounts of data in its raw formats. At the same time, it structures data and adds data-warehouse-like management capabilities by implementing a metadata layer on top of the storage. This allows multiple teams to use a single system to access all company data for a variety of projects, including data science, machine learning, and business intelligence.

- Super Database: A super database consolidates multiple use cases into a single database, which can be more efficient than distributing them across multiple databases and machines.
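The core lakehouse idea, raw files in cheap storage with a metadata layer deciding which files belong to a table and how to interpret them, can be sketched in a few lines of Python. This is a toy illustration under stated assumptions: CSV files in a temporary directory stand in for object storage, and a plain dictionary named `catalog` (hypothetical) stands in for the metadata layer. Open table formats such as Delta Lake and Apache Iceberg implement this idea with much richer metadata, such as snapshots and schema evolution.

```python
import csv
import tempfile
from pathlib import Path

# Raw storage layer: plain CSV files in a directory (a stand-in for object storage).
storage = Path(tempfile.mkdtemp())


def write_raw_file(filename: str, rows: list) -> Path:
    path = storage / filename
    with path.open("w", newline="") as f:
        csv.writer(f).writerows(rows)
    return path


# Metadata layer: records each table's schema and the files that belong to it.
catalog = {}


def create_table(name: str, schema: dict) -> None:
    catalog[name] = {"schema": schema, "files": []}


def append_file(table: str, rows: list, filename: str) -> None:
    path = write_raw_file(filename, rows)
    # The file only becomes part of the table once the metadata lists it.
    catalog[table]["files"].append(path)


def scan(table: str) -> list:
    """Read every file listed in the metadata and apply the table schema (type casting)."""
    schema = catalog[table]["schema"]
    columns = list(schema)
    result = []
    for path in catalog[table]["files"]:
        with path.open(newline="") as f:
            for raw in csv.reader(f):
                result.append({col: schema[col](value) for col, value in zip(columns, raw)})
    return result


create_table("sensor_readings", {"device": str, "temperature": float})
append_file("sensor_readings", [["pump-1", "71.5"], ["pump-2", "68.0"]], "part-0001.csv")
append_file("sensor_readings", [["pump-1", "72.1"]], "part-0002.csv")
# A stray raw file that was never registered in the metadata is invisible to readers.
write_raw_file("orphan.csv", [["pump-9", "999"]])

rows = scan("sensor_readings")
print([row["device"] for row in rows])  # ['pump-1', 'pump-2', 'pump-1']
```

Because readers go through the metadata rather than listing the directory, the same cheap raw storage can be shared by data science, machine learning, and BI workloads while still behaving like a structured table.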

4. Active Metadata: Active metadata is metadata that is continuously enriched by machine learning, human input, and process outputs, and it is key to getting the most out of a modern data stack. Modern data science workflows deal with many classes of data; metadata is the class that tells users about the data itself.

A metadata management strategy is essential to ensure that big data is properly interpreted and can be leveraged to deliver results. Good data management in big data, across collection, archiving, processing, and cleaning, requires good metadata management. In the future, metadata will be useful for:

- Formulating digital strategies

- Monitoring that data is used for its intended purpose

- Identifying the information sources used in an analysis or report

With evolving technologies like IoT and cloud computing, more types of active metadata will become available to aid data governance.
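As a rough illustration of metadata being enriched by process outputs, the Python sketch below profiles a dataset after a pipeline run and merges the results (row count, per-column null and distinct counts, refresh time, lineage, and data-quality alerts) into the dataset's metadata record. The function names, the `crm-team` owner, and the `nightly_crm_load` job are hypothetical; real active metadata platforms also add ML-driven classification and human curation, which this sketch leaves out.

```python
from datetime import datetime, timezone


def profile(rows: list) -> dict:
    """Derive metadata from the data itself: row count plus null/distinct counts per column."""
    columns = sorted({key for row in rows for key in row})
    stats = {}
    for col in columns:
        values = [row.get(col) for row in rows]
        non_null = [v for v in values if v is not None]
        stats[col] = {"nulls": len(values) - len(non_null), "distinct": len(set(non_null))}
    return {"row_count": len(rows), "columns": stats}


def enrich(metadata: dict, rows: list, job: str) -> dict:
    """Hypothetical enrichment step: merge fresh profile stats and lineage into the record."""
    updated = dict(metadata)
    updated.update(profile(rows))
    updated["last_refreshed"] = datetime.now(timezone.utc).isoformat()
    updated["lineage"] = metadata.get("lineage", []) + [job]
    # Flag columns containing nulls so governance tooling can act on them.
    updated["alerts"] = [col for col, s in updated["columns"].items() if s["nulls"] > 0]
    return updated


customers = [
    {"id": 1, "email": "a@example.com"},
    {"id": 2, "email": None},
]
meta = enrich({"owner": "crm-team"}, customers, job="nightly_crm_load")
print(meta["row_count"], meta["alerts"])  # 2 ['email']
```

Because the metadata is recomputed from the data on every run, the alert on `email` appears without anyone writing it by hand, which is the kind of signal that data governance processes can consume.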

5. Synthetic Data: In 2022, there will be more focus on using synthetic data to train machine learning algorithms. Synthetic data sets are computer-generated simulations that can supply a wide range of varied, anonymized training data. They can be created using various methods, such as generative adversarial networks (GANs) or simulators, that aim to closely resemble the genuine data.

By using synthetic data sets, AI developers can build better-performing and more robust models. Data scientists have found efficient methods to generate high-quality synthetic data, which will benefit companies that need large amounts of data to train and build AI and machine learning (ML) models.
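GANs are beyond the scope of a short example, but the basic idea of a simple simulator can be sketched in Python: estimate statistics from real data, then sample new rows from them. This is a deliberately simple stand-in, assuming numeric columns that are roughly normally distributed. It samples each column independently, so it does not preserve correlations between columns, which is what more sophisticated generators such as GANs aim to capture. The column names and values are made up for illustration.

```python
import random
import statistics


def fit_columns(rows: list, columns: list) -> dict:
    """Estimate the mean and sample standard deviation of each numeric column."""
    params = {}
    for col in columns:
        values = [row[col] for row in rows]
        params[col] = (statistics.mean(values), statistics.stdev(values))
    return params


def generate_synthetic(params: dict, n: int, seed: int = 0) -> list:
    """Draw n synthetic rows, sampling each column independently from a normal distribution."""
    rng = random.Random(seed)  # seeded so the synthetic data set is reproducible
    return [
        {col: rng.gauss(mu, sigma) for col, (mu, sigma) in params.items()}
        for _ in range(n)
    ]


# Illustrative "real" records (made-up values).
real_rows = [
    {"age": 25, "monthly_spend": 80.0},
    {"age": 32, "monthly_spend": 120.0},
    {"age": 47, "monthly_spend": 200.0},
    {"age": 51, "monthly_spend": 150.0},
    {"age": 38, "monthly_spend": 95.0},
]

params = fit_columns(real_rows, ["age", "monthly_spend"])
synthetic_rows = generate_synthetic(params, n=1000)
print(synthetic_rows[:2])
```

Seeding `random.Random` makes the synthetic set reproducible, which helps when comparing models trained on it; the trade-off of this simple approach is that any relationship between age and spend in the real data is lost.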

6. Streaming Data and Analytics: Data streaming in real time from IoT devices such as sensors, from mobile devices, and from internal transactional systems provides both historical and real-time information that can pinpoint equipment issues and help prevent future problems. This big data from edge and IoT devices demands analytics on the data streams themselves to monitor data storage and data movement.

Streaming analytics enables companies to act promptly on equipment failures and head off emerging problems. In 2022, more focus will be on combining IoT and streaming analytics for better responsiveness and agility.
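A common building block of streaming analytics on sensor data is a sliding-window check that flags readings far from recent behavior. The Python sketch below assumes a single stream of temperature readings and uses a simple z-score rule over the last few values; the window size, threshold, and sample readings are illustrative assumptions, not recommended settings.

```python
import statistics
from collections import deque


def detect_anomalies(readings, window: int = 5, threshold: float = 3.0):
    """Yield (index, value) for readings that deviate more than `threshold` standard
    deviations from the mean of the previous `window` readings."""
    recent = deque(maxlen=window)  # only the last `window` readings are kept in memory
    for i, value in enumerate(readings):
        if len(recent) == window:
            mu = statistics.mean(recent)
            sigma = statistics.pstdev(recent)
            # Skip the check when the window is perfectly flat (sigma == 0).
            if sigma > 0 and abs(value - mu) / sigma > threshold:
                yield i, value
        recent.append(value)


temperatures = [70.1, 70.3, 69.9, 70.2, 70.0, 70.1, 85.4, 70.2]
print(list(detect_anomalies(temperatures)))  # [(6, 85.4)]
```

Because `detect_anomalies` consumes any iterable lazily and keeps only a fixed-size window, the same function works on a live generator of sensor readings as well as on a list; a production deployment would typically run comparable logic inside a stream-processing engine.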

Cloud and Self-service Tools to Power Big Data Accessibility

Given the many applications of big data analytics and the tremendous support it provides to businesses, big data is clearly here to stay. By following new big data trends, companies can achieve better security, better training, and better business outcomes. Businesses must stay up to date with big data and analytics forecasts and keep track of the latest trends.

The landscape is ever-changing. Cloud platforms will be the next big trend, making big data more accessible for exploration work. Big data analytics will likely become so pervasive in the enterprise that it is no longer the domain of specialists, and self-service tools will make it widely accessible. Businesses deciding which big data tools to use should study these trends to make more informed decisions, as these trends will have a substantial impact.

Techment Technology has already stepped into data engineering and data management, offering custom big data and analytics services with quick turnaround times. For more information on big data, get our free consultation.
